better models, bigger context windows, and more capable agents. But most real-world failures don’t come from model capability; they come from how context is constructed, passed, and maintained.

This is a hard problem. The space is moving fast and techniques are still evolving. Much of it remains an experimental science and depends on the context (pun intended), constraints and environment you’re operating in.

In my work building multi-agent systems, a recurring pattern has emerged: performance is far less about how much context you give a model, and far more about how precisely you shape it.

This piece is an attempt to distill my learnings into something you can use.

It focuses on principles for managing context as a constrained resource: deciding what to include, what to exclude, and how to structure information so that agents remain coherent, efficient, and reliable over time.

Because at the end of the day, the strongest agents aren’t the ones that see the most. They’re the ones that see the right things, in the right form, at the right time.

Terminology

Context engineering

Context engineering is the art of providing the right information, tools and format to an LLM for it to complete a task. Good context engineering means finding the smallest possible set of high-signal tokens that give the LLM the highest probability of producing a good outcome.

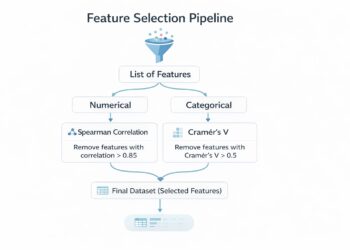

In practice, good context engineering usually comes down to four moves. You offload information to external systems (context offloading) so the model doesn’t need to carry everything in-band. You retrieve information dynamically instead of front-loading all of it (context retrieval). You isolate context so one subtask doesn’t contaminate another (context isolation). And you reduce history when needed, but only in ways that preserve what the agent will still need later (context reduction).

A common failure mode on the other side is context pollution: the presence of so much unnecessary, conflicting or redundant information that it distracts the LLM.

Context rot

Context rot is a situation where an LLM’s performance degrades as the context window fills up, even if it is within the established limit. The LLM still has room to read more, but its reasoning starts to blur.

You may have noticed that the effective context window, where the model performs at high quality, is often much smaller than what the model is technically capable of.

There are two parts to this. First, a model doesn’t maintain perfect recall across its entire context window. Information at the beginning and the end is more reliably recalled than information in the middle.

Second, larger context windows don’t solve problems for enterprise systems. Enterprise data is effectively unbounded and so frequently updated that even if the model could ingest everything, that would not mean it could maintain a coherent understanding over it.

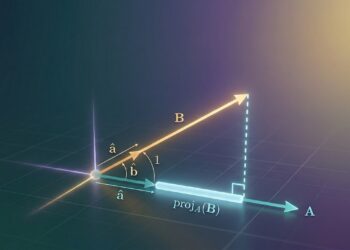

Just as humans have a limited working memory capacity, an LLM has a limited attention budget, and every new token introduced to it depletes that budget by some amount. The attention scarcity stems from architectural constraints in the transformer, where every token attends to every other token. This leads to an n² interaction pattern for n tokens. As the context grows, the model is forced to spread its attention thinner across more relationships.
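To make the quadratic growth concrete, here is a small arithmetic sketch. It counts attention scores for full self-attention in a single head of a single layer; it ignores causal masking (which roughly halves the count but stays quadratic) and any efficiency tricks a real serving stack may use. The function name is my own.

```python
def pairwise_interactions(n_tokens: int) -> int:
    """Attention scores computed when every token attends to every token (n * n)."""
    return n_tokens * n_tokens


# Growing the context 10x grows the number of token-to-token relationships 100x.
for n in (1_000, 10_000, 100_000):
    print(f"{n:>7,} tokens -> {pairwise_interactions(n):>15,} pairwise scores")
```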

Context compaction

Context compaction is the general answer to context rot.

When the model is nearing the limit of its context window, it summarises its contents and reinitiates a new context window with the previous summary. This is especially useful for long-running tasks, allowing the model to continue to work without too much performance degradation.
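A minimal sketch of that loop, under a few assumptions of mine: `count_tokens` is a crude whitespace stand-in for a real tokenizer, `summarise` is a hypothetical callable wrapping whatever summarisation call you use, and the 80% threshold and the choice to carry the last few messages over verbatim are illustrative, not recommended values.

```python
from typing import Callable, List


def count_tokens(text: str) -> int:
    # Assumption: whitespace word count as a rough proxy for a real tokenizer.
    return len(text.split())


def maybe_compact(
    messages: List[str],
    limit: int,
    summarise: Callable[[List[str]], str],
    threshold: float = 0.8,
    keep_recent: int = 2,
) -> List[str]:
    """Return a compacted message list once usage crosses threshold * limit."""
    used = sum(count_tokens(m) for m in messages)
    if used < threshold * limit or len(messages) <= keep_recent:
        return messages  # Still comfortably inside the window: leave as-is.

    # Split by index so keep_recent=0 works (messages[:-0] would be empty).
    cut = len(messages) - keep_recent
    older, recent = messages[:cut], messages[cut:]

    # The new window starts with the summary, followed by the untouched tail.
    return [f"[summary of earlier work] {summarise(older)}"] + recent
```

In use, the harness would call `maybe_compact` before each model call and start the next turn from the returned list.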

Recent work on context folding offers a different approach, in which agents actively manage their working context. An agent can branch off to handle a subtask and then fold it upon completion, collapsing the intermediate steps while retaining a concise summary of the outcome.
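The branch-and-fold idea can be illustrated with a toy data structure. This is my own simplification for intuition, not the mechanism from the context folding work itself; `FoldingContext` and its methods are hypothetical names, and the summary is supplied by the caller rather than generated.

```python
from typing import List, Tuple


class FoldingContext:
    """Toy working context that supports branching into a subtask and folding it."""

    def __init__(self) -> None:
        self.entries: List[str] = []
        self._open_branches: List[Tuple[str, int]] = []  # (label, start index)

    def add(self, entry: str) -> None:
        self.entries.append(entry)

    def branch(self, label: str) -> None:
        # Remember where the subtask's intermediate steps begin.
        self._open_branches.append((label, len(self.entries)))

    def fold(self, summary: str) -> None:
        # Collapse everything since the branch point into one summary entry.
        label, start = self._open_branches.pop()
        del self.entries[start:]
        self.entries.append(f"[{label}] {summary}")


ctx = FoldingContext()
ctx.add("goal: make the test suite pass")
ctx.branch("investigate flaky test")
ctx.add("ran test 20 times, 3 failures")
ctx.add("failure only occurs when cache is cold")
ctx.fold("flakiness is caused by a cold cache; warm it in setup")
```

After the fold, the two intermediate observations are gone and only the goal and the one-line outcome remain in `ctx.entries`.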

The difficulty, however, is not in summarising, but in deciding what survives. Some things should remain stable and nearly immutable, such as the objective of the task and hard constraints. Others can be safely discarded. The challenge is that the importance of information is often only revealed later.

Good compaction therefore needs to preserve facts that continue to constrain future actions: which approaches already failed, which files were created, which assumptions were invalidated, which handles can be revisited, and which uncertainties remain unresolved. Otherwise you get a neat, concise summary that reads well to a human and is useless to an agent.
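One way to enforce this is to compact into a fixed structure rather than free-form prose. The sketch below is an assumption about how such a record might look; the class and field names are mine and simply mirror the categories listed above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CompactedState:
    """What survives compaction, kept as explicit slots instead of a prose summary."""

    objective: str                                   # stable, near-immutable
    hard_constraints: List[str] = field(default_factory=list)
    failed_approaches: List[str] = field(default_factory=list)
    files_created: List[str] = field(default_factory=list)
    invalidated_assumptions: List[str] = field(default_factory=list)
    revisitable_handles: List[str] = field(default_factory=list)
    open_uncertainties: List[str] = field(default_factory=list)

    def render(self) -> str:
        """Serialise the non-empty sections for the next context window."""
        sections = [
            ("Objective", [self.objective]),
            ("Hard constraints", self.hard_constraints),
            ("Failed approaches", self.failed_approaches),
            ("Files created", self.files_created),
            ("Invalidated assumptions", self.invalidated_assumptions),
            ("Handles to revisit", self.revisitable_handles),
            ("Open uncertainties", self.open_uncertainties),
        ]
        lines: List[str] = []
        for title, items in sections:
            if items:
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)
```

The point of the fixed slots is that an empty "Failed approaches" section is a visible gap, whereas a fluent prose summary can silently omit it.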

Agent harness

A model is not an agent. The harness is what turns a model into one.

By harness, I mean everything around the model that decides how context is assembled and maintained: prompt serialization, tool routing, retry policies, the rules governing what is preserved between steps, and so on.

Once you look at real agent systems this way, a lot of supposed “model failures” look different. I have encountered many of these at work. They are actually harness failures: the agent forgot because nothing persisted the right state; it repeated work because the harness surfaced no durable artefact of prior failure; it chose the wrong tool because the harness overloaded the action space; and so on.

A good harness is, in some sense, a deterministic shell wrapped around a stochastic core. It makes the context legible, stable, and recoverable enough that the model can spend its limited reasoning budget on the task rather than on reconstructing its own state from a messy trace.
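As a rough illustration of the "deterministic shell" idea, the sketch below wraps a model call in a retry policy and records each failure in persisted state, so the next prompt surfaces it. Everything here is hypothetical: `model` is any callable taking a prompt string, the `state` dictionary layout is my own, and a real harness would also handle tool routing and much more.

```python
from typing import Callable, Dict, List, Optional


def build_prompt(task: str, state: Dict[str, List[str]]) -> str:
    # Deterministic serialisation: same task and state always give the same prompt.
    lines = [f"Task: {task}"]
    lines += [f"Known failure: {failure}" for failure in state["failures"]]
    return "\n".join(lines)


def run_step(
    model: Callable[[str], str],
    task: str,
    state: Dict[str, List[str]],
    max_retries: int = 2,
) -> Optional[str]:
    """Call the model, persisting failures so later attempts can see them."""
    for attempt in range(max_retries + 1):
        prompt = build_prompt(task, state)
        try:
            output = model(prompt)
        except RuntimeError as err:
            # Durable artefact of the failure, surfaced in the next prompt.
            state["failures"].append(f"attempt {attempt}: {err}")
            continue
        state["history"].append(output)
        return output
    return None  # All attempts failed; the failures remain recorded in state.
```

The model stays stochastic, but what it sees on each attempt is assembled by plain, testable code.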

Communication between agents

As tasks get more complex, teams have defaulted towards multi-agent systems.

The mistake is to assume that more agents means more shared context. In practice, dumping a huge shared transcript into every sub-agent often creates exactly the opposite of specialisation. Now every agent is reading everything, inheriting everyone else’s mistakes, and paying the same context bill over and over.

If only some context is shared, a new problem appears. What is considered authoritative when agents disagree? What stays local, and how are conflicts reconciled?

The way out is to treat communication not as shared memory, but as state transfer through well-defined interfaces.

For discrete tasks with clear inputs and outputs, agents should usually communicate through artefacts rather than raw traces. A web-search agent, for instance, doesn’t need to pass along its entire browsing history. It only needs to surface the material that downstream agents can actually use.

This means that intermediate reasoning, failed attempts, and exploration traces stay private unless explicitly needed. What gets passed forward are distilled outputs: extracted facts, validated findings, or decisions that constrain the next step.
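Here is a small sketch of what an artefact-based handoff could look like for the web-search example. The types and field names are hypothetical; the point is only that the private trace and the public output are separate, and that the interface exposes just the latter.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Finding:
    """A distilled output that downstream agents can consume."""

    claim: str
    source: str
    validated: bool


@dataclass
class SearchAgentRun:
    # Private trace: stays with the search agent unless explicitly requested.
    queries: List[str] = field(default_factory=list)
    pages_visited: List[str] = field(default_factory=list)
    # Candidate outputs, some of which may not have been validated yet.
    findings: List[Finding] = field(default_factory=list)

    def handoff(self) -> List[Finding]:
        """The only thing passed forward: validated findings, no trace."""
        return [f for f in self.findings if f.validated]


run = SearchAgentRun(
    queries=["library X rate limits", "library X rate limits official docs"],
    pages_visited=["forum thread", "vendor docs"],
    findings=[
        Finding("limit is 100 requests/min", "vendor docs", validated=True),
        Finding("limit is 500 requests/min", "forum thread", validated=False),
    ],
)
artefact = run.handoff()
```

The downstream agent receives one validated claim with its source, and never sees the two queries, the pages visited, or the unverified forum figure.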

For more tightly coupled tasks, like a debugging agent where downstream reasoning genuinely depends on prior attempts, a limited form of trace sharing can be introduced. But this should be deliberate and scoped, not the default.

KV cache penalty

When AI models generate text, they often repeat many of the same calculations. KV caching is an inference-time optimisation technique that speeds up this process by storing the attention keys and values computed for earlier tokens instead of recomputing them at every step.

However, in multi-agent systems, if every agent shares the same context, you confuse the model with a ton of irrelevant details and pay a massive KV-cache penalty. Multiple agents working on the same task need to communicate with each other, but this shouldn’t be via sharing memory.

This is why agents should communicate through minimal, structured outputs in a controlled manner.

Keep the agent’s toolset small and relevant

Tool choice is a context problem disguised as a capability problem.

As an agent accumulates more tools, the action space gets harder to navigate. There is now a higher probability of the model choosing the wrong action and taking an inefficient route.

This has consequences. Tool schemas need to be far more distinct than most people realise. Tools need to be well understood and have minimal overlap in functionality. Their intended use should be very clear, and their input parameters should be unambiguous.

One common failure mode that I have noticed even in my team is that we tend to end up with very bloated sets of tools, added over time. This leads to unclear decision making on which tools to use.
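A cheap way to catch this drift is to audit tool descriptions for overlap before they reach the model. The sketch below uses word-level Jaccard similarity as a crude heuristic; the 0.5 threshold, the function names, and the example tools are all assumptions of mine, and a real audit would likely compare parameter schemas as well.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Share of words two descriptions have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def overlapping_tools(
    descriptions: Dict[str, str], threshold: float = 0.5
) -> List[Tuple[str, str]]:
    """Return pairs of tool names whose descriptions look too similar."""
    words = {name: set(text.lower().split()) for name, text in descriptions.items()}
    return [
        (a, b)
        for a, b in combinations(sorted(words), 2)
        if jaccard(words[a], words[b]) >= threshold
    ]


tools = {
    "search_web": "search the web for pages",
    "find_web": "search the web for documents",
    "read_file": "read a local file from disk",
}
flagged = overlapping_tools(tools)
```

Here `flagged` contains the `find_web` / `search_web` pair, which is a prompt to merge the two tools or sharpen their descriptions.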

Agentic memory

This is a technique where the agent regularly writes notes that are persisted to memory outside of the context window. These notes get pulled back into the context window at later times.

The hardest part is deciding what deserves promotion into memory. My rule of thumb is that durable memory should contain things that continue to constrain future reasoning, such as persistent preferences. Everything else should face a very high bar. Storing too much is just another route back to context pollution, only now you have made it persistent.

But memory without revision is a trap. Once agents persist notes across steps or sessions, they also need mechanisms for conflict resolution, deletion, and demotion. Otherwise long-term memory becomes a landfill of outdated beliefs.
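To show what those three mechanisms might look like in their simplest form, here is a toy memory store. The policies are deliberately naive assumptions: conflicts are resolved by "latest write wins" per key, and demotion just means a note stops being recalled once it has not been refreshed for `max_age` steps. `AgentMemory` and its methods are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Note:
    value: str
    step: int  # Step at which the note was last written or refreshed.


class AgentMemory:
    """Toy persistent memory with conflict resolution, deletion, and demotion."""

    def __init__(self, max_age: int) -> None:
        self.max_age = max_age
        self._notes: Dict[str, Note] = {}

    def write(self, key: str, value: str, step: int) -> None:
        # Conflict resolution: a newer write for the same key replaces the old belief.
        self._notes[key] = Note(value, step)

    def delete(self, key: str) -> None:
        # Deletion: explicitly retract a belief; a no-op if the key is unknown.
        self._notes.pop(key, None)

    def recall(self, step: int) -> Dict[str, str]:
        # Demotion: stale notes stay stored but are not pulled back into context.
        return {
            key: note.value
            for key, note in self._notes.items()
            if step - note.step <= self.max_age
        }


mem = AgentMemory(max_age=5)
mem.write("indent_style", "tabs", step=1)
mem.write("indent_style", "spaces", step=3)   # Supersedes the earlier note.
mem.write("scratch_dir", "/tmp/run1", step=1)
```

Recalling at step 4 returns both notes with the updated indent style; by step 7 the unrefreshed `scratch_dir` note has aged out of recall.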

To sum up

Context engineering is still evolving, and there is no single correct way to do it. Much of it remains empirical, shaped by the systems we build and the constraints we operate under.

Left unchecked, context grows, drifts, and eventually collapses under its own weight.

If well-managed, context becomes the difference between an agent that merely responds and one that can reason, adapt, and stay coherent across long and complex tasks.