Decision tree algorithms have always fascinated me. They are easy to implement and achieve good results on various classification and regression tasks. Combined with boosting, decision trees are still state-of-the-art in many applications.

Frameworks such as sklearn, lightgbm, xgboost and catboost have done a very good job so far. However, in the past few months, I have been missing support for Arrow datasets. While lightgbm has recently added support for them, it is still missing in most other frameworks. The Arrow data format could be a perfect match for decision trees since it has a columnar structure optimized for efficient data processing. Pandas has already added support for it, and Polars also makes use of its advantages.

Polars has shown some significant performance advantages over most other data frameworks. It uses the data efficiently and avoids copying it unnecessarily. It also provides a streaming engine that allows processing data that is larger than memory. This is why I decided to use Polars as a backend for building a decision tree from scratch.

The goal is to explore the advantages of using Polars for decision trees in terms of memory and runtime, and, of course, to learn more about Polars, efficiently defining expressions, and the streaming engine.

The code for the implementation can be found in this repository.

Code overview

To get a first overview of the code, I will show the structure of the DecisionTreeClassifier first:

The first important thing can be seen in the imports. It was important for me to keep the import section clean and with as few dependencies as possible. This worked out, with dependencies only on polars, pickle, and typing.

The init method lets you define whether the Polars streaming engine should be used. The max_depth of the tree can also be set here. Another feature is the definition of categorical columns. These are handled differently from numerical features, using a target encoding.

It is possible to save and load the decision tree model. It is represented as a nested dict and can be saved to disk as a pickled file.
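To make the save and load idea concrete, here is a minimal sketch of pickling a nested dict and reading it back. The helper names save_tree() and load_tree(), the file name, and the hand-written tree are assumptions for illustration only; they are not the repository's save_model() and load_model() implementations.

```python
import os
import pickle
import tempfile

# Hypothetical hand-written tree; the keys follow the node layout assumed in this article.
tree = {
    "type": "node",
    "feature": "age_years",
    "threshold": 54,
    "left": {"type": "leaf", "value": 0},
    "right": {"type": "leaf", "value": 1},
}


def save_tree(tree: dict, path: str) -> None:
    """Write the nested dict to disk as a pickle file."""
    with open(path, "wb") as file:
        pickle.dump(tree, file)


def load_tree(path: str) -> dict:
    """Read a pickled nested dict back from disk."""
    with open(path, "rb") as file:
        return pickle.load(file)


# Round trip through a temporary directory so nothing is left behind.
with tempfile.TemporaryDirectory() as tmp_dir:
    model_path = os.path.join(tmp_dir, "tree.pkl")
    save_tree(tree, model_path)
    restored_tree = load_tree(model_path)
```

Because the model is plain Python data, no custom serialization logic is needed. As with any pickle file, it should only be loaded from trusted sources.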

The Polars magic happens in the fit() and _build_tree() methods. These accept both LazyFrames and DataFrames to support in-memory processing as well as streaming.

There are two prediction methods available, predict() and predict_many(). The predict() method can be used on a small number of examples, and the data needs to be provided as an iterable of dicts. For a big test set, it is more efficient to use the predict_many() method. Here, the data can be provided as a Polars DataFrame or LazyFrame.

import pickle
from typing import Iterable, List, Union

import polars as pl


class DecisionTreeClassifier:
    def __init__(self, streaming=False, max_depth=None, categorical_columns=None):
        ...

    def save_model(self, path: str) -> None:
        ...

    def load_model(self, path: str) -> None:
        ...

    def apply_categorical_mappings(self, data: Union[pl.DataFrame, pl.LazyFrame]) -> Union[pl.DataFrame, pl.LazyFrame]:
        ...

    def fit(self, data: Union[pl.DataFrame, pl.LazyFrame], target_name: str) -> None:
        ...

    def predict_many(self, data: Union[pl.DataFrame, pl.LazyFrame]) -> List[Union[int, float]]:
        ...

    def predict(self, data: Iterable[dict]):
        ...

    def get_majority_class(self, df: Union[pl.DataFrame, pl.LazyFrame], target_name: str) -> str:
        ...

    def _build_tree(
        self,
        data: Union[pl.DataFrame, pl.LazyFrame],
        feature_names: list[str],
        target_name: str,
        unique_targets: list[int],
        depth: int,
    ) -> dict:
        ...

Fitting the tree

To train the decision tree classifier, the fit() method needs to be used.

def fit(self, data: Union[pl.DataFrame, pl.LazyFrame], target_name: str) -> None:
    """
    Fit method to train the decision tree.

    :param data: Polars DataFrame or LazyFrame containing the training data.
    :param target_name: Name of the target column
    """
    columns = data.collect_schema().names()
    feature_names = [col for col in columns if col != target_name]

    # Shrink dtypes
    data = data.select(pl.all().shrink_dtype()).with_columns(
        pl.col(target_name).cast(pl.UInt64).shrink_dtype().alias(target_name)
    )

    # Prepare categorical columns with target encoding
    if self.categorical_columns:
        categorical_mappings = {}
        for categorical_column in self.categorical_columns:
            categorical_mappings[categorical_column] = {
                value: index
                for index, value in enumerate(
                    data.lazy()
                    .group_by(categorical_column)
                    .agg(pl.col(target_name).mean().alias("avg"))
                    .sort("avg")
                    .collect(streaming=self.streaming)[categorical_column]
                )
            }

        self.categorical_mappings = categorical_mappings
        data = self.apply_categorical_mappings(data)

    unique_targets = data.select(target_name).unique()
    if isinstance(unique_targets, pl.LazyFrame):
        unique_targets = unique_targets.collect(streaming=self.streaming)
    unique_targets = unique_targets[target_name].to_list()

    self.tree = self._build_tree(data, feature_names, target_name, unique_targets, depth=0)

It receives a Polars LazyFrame or DataFrame that contains all features and the target column. To identify the target column, the target_name needs to be provided.

Polars provides a convenient way to optimize the memory usage of the data.

data.select(pl.all().shrink_dtype())

With that, all columns are selected and evaluated. Each column is converted to the smallest dtype that can hold its values.

The categorical encoding

To encode categorical values, a target encoding is used. For that, all instances of a categorical feature are aggregated, and the average target value is calculated. Then, the categories are sorted by the average target value, and a rank is assigned. This rank is used as the representation of the feature value.
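The ranking idea can be sketched in plain Python, independent of Polars. The function name target_encoding_ranks() and the toy data are made up for illustration; the actual implementation uses the Polars expression shown in this section.

```python
from collections import defaultdict


def target_encoding_ranks(categories, targets):
    """Map each category to its rank when sorted by average target value."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for category, target in zip(categories, targets):
        sums[category] += target
        counts[category] += 1
    # Average target value per category.
    averages = {category: sums[category] / counts[category] for category in sums}
    # Sort categories by their average and use the position as the encoded value.
    ordered = sorted(averages, key=averages.get)
    return {category: rank for rank, category in enumerate(ordered)}


# Toy data (made up): "b" has the lowest average target, "c" the highest.
categories = ["a", "b", "a", "c", "b", "c", "c", "a"]
targets = [1, 0, 0, 1, 0, 1, 1, 1]
mapping = target_encoding_ranks(categories, targets)
```

Since the ranks follow the average target value, a threshold split on the rank behaves like a split on an ordered numerical feature.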

(
    data.lazy()
    .group_by(categorical_column)
    .agg(pl.col(target_name).mean().alias("avg"))
    .sort("avg")
    .collect(streaming=self.streaming)[categorical_column]
)

Since it is possible to provide both Polars DataFrames and LazyFrames, I use data.lazy() first. If the given data is a DataFrame, it is converted to a LazyFrame. If it is already a LazyFrame, it simply returns self. With that trick, it is possible to ensure that the data is processed in the same way for LazyFrames and DataFrames and that the collect() method can be used, which is only available for LazyFrames.

To illustrate the outcome of the calculations in the different steps of the fitting process, I apply it to a dataset for heart disease prediction. It can be found on Kaggle and is published under the Database Contents License.

Here is an example of the categorical feature representation for the glucose levels:

┌──────┬──────┬──────────┐
│ rank ┆ gluc ┆ avg      │
│ ---  ┆ ---  ┆ ---      │
│ u32  ┆ i8   ┆ f64      │
╞══════╪══════╪══════════╡
│ 0    ┆ 1    ┆ 0.476139 │
│ 1    ┆ 2    ┆ 0.586319 │
│ 2    ┆ 3    ┆ 0.620972 │
└──────┴──────┴──────────┘

For each of the glucose levels, the probability of having heart disease is calculated. The levels are sorted by this value and then ranked, so that each level is mapped to a rank value.

Getting the target values

As the last part of the fit() method, the unique target values are determined.

unique_targets = data.select(target_name).unique()
if isinstance(unique_targets, pl.LazyFrame):
    unique_targets = unique_targets.collect(streaming=self.streaming)
unique_targets = unique_targets[target_name].to_list()

self.tree = self._build_tree(data, feature_names, target_name, unique_targets, depth=0)

This serves as the last preparation before calling the _build_tree() method recursively.

Building the tree

After the data is prepared in the fit() method, the _build_tree() method is called. This is done recursively until a stopping criterion is met, e.g., the max depth of the tree is reached. The first call is executed from the fit() method with a depth of zero.

def _build_tree(
    self,
    data: Union[pl.DataFrame, pl.LazyFrame],
    feature_names: list[str],
    target_name: str,
    unique_targets: list[int],
    depth: int,
) -> dict:
    """
    Builds the decision tree recursively.
    If max_depth is reached, returns a leaf node with the majority class.
    Otherwise, finds the best split and creates internal nodes for left and right children.

    :param data: The dataframe to evaluate.
    :param feature_names: Names of the feature columns.
    :param target_name: Name of the target column.
    :param unique_targets: Unique target values.
    :param depth: The current depth of the tree.
    :return: A dictionary representing the node.
    """
    if self.max_depth is not None and depth >= self.max_depth:
        return {"type": "leaf", "value": self.get_majority_class(data, target_name)}

    # Make data lazy here to avoid that it is evaluated in each loop iteration.
    data = data.lazy()

    # Evaluate entropy per feature:
    information_gain_dfs = []
    for feature_name in feature_names:
        feature_data = data.select([feature_name, target_name]).filter(pl.col(feature_name).is_not_null())
        feature_data = feature_data.rename({feature_name: "feature_value"})

        # No streaming (yet)
        information_gain_df = (
            feature_data.group_by("feature_value")
            .agg(
                [
                    pl.col(target_name)
                    .filter(pl.col(target_name) == target_value)
                    .len()
                    .alias(f"class_{target_value}_count")
                    for target_value in unique_targets
                ]
                + [pl.col(target_name).len().alias("count_examples")]
            )
            .sort("feature_value")
            .select(
                [
                    pl.col(f"class_{target_value}_count").cum_sum().alias(f"cum_sum_class_{target_value}_count")
                    for target_value in unique_targets
                ]
                + [
                    pl.col(f"class_{target_value}_count").sum().alias(f"sum_class_{target_value}_count")
                    for target_value in unique_targets
                ]
                + [
                    pl.col("count_examples").cum_sum().alias("cum_sum_count_examples"),
                    pl.col("count_examples").sum().alias("sum_count_examples"),
                ]
                + [
                    # From previous select
                    pl.col("feature_value"),
                ]
            )
            .filter(
                # At least one example available on the right side
                pl.col("sum_count_examples")
                > pl.col("cum_sum_count_examples")
            )
            .select(
                [
                    (pl.col(f"cum_sum_class_{target_value}_count") / pl.col("cum_sum_count_examples")).alias(
                        f"left_proportion_class_{target_value}"
                    )
                    for target_value in unique_targets
                ]
                + [
                    (
                        (pl.col(f"sum_class_{target_value}_count") - pl.col(f"cum_sum_class_{target_value}_count"))
                        / (pl.col("sum_count_examples") - pl.col("cum_sum_count_examples"))
                    ).alias(f"right_proportion_class_{target_value}")
                    for target_value in unique_targets
                ]
                + [
                    (pl.col(f"sum_class_{target_value}_count") / pl.col("sum_count_examples")).alias(
                        f"parent_proportion_class_{target_value}"
                    )
                    for target_value in unique_targets
                ]
                + [
                    # From previous select
                    pl.col("cum_sum_count_examples"),
                    pl.col("sum_count_examples"),
                    pl.col("feature_value"),
                ]
            )
            .select(
                (
                    -1
                    * pl.sum_horizontal(
                        [
                            (
                                pl.col(f"left_proportion_class_{target_value}")
                                * pl.col(f"left_proportion_class_{target_value}").log(base=2)
                            ).fill_nan(0.0)
                            for target_value in unique_targets
                        ]
                    )
                ).alias("left_entropy"),
                (
                    -1
                    * pl.sum_horizontal(
                        [
                            (
                                pl.col(f"right_proportion_class_{target_value}")
                                * pl.col(f"right_proportion_class_{target_value}").log(base=2)
                            ).fill_nan(0.0)
                            for target_value in unique_targets
                        ]
                    )
                ).alias("right_entropy"),
                (
                    -1
                    * pl.sum_horizontal(
                        [
                            (
                                pl.col(f"parent_proportion_class_{target_value}")
                                * pl.col(f"parent_proportion_class_{target_value}").log(base=2)
                            ).fill_nan(0.0)
                            for target_value in unique_targets
                        ]
                    )
                ).alias("parent_entropy"),
                # From previous select
                pl.col("cum_sum_count_examples"),
                pl.col("sum_count_examples"),
                pl.col("feature_value"),
            )
            .select(
                (
                    pl.col("cum_sum_count_examples") / pl.col("sum_count_examples") * pl.col("left_entropy")
                    + (pl.col("sum_count_examples") - pl.col("cum_sum_count_examples"))
                    / pl.col("sum_count_examples")
                    * pl.col("right_entropy")
                ).alias("child_entropy"),
                # From previous select
                pl.col("parent_entropy"),
                pl.col("feature_value"),
            )
            .select(
                (pl.col("parent_entropy") - pl.col("child_entropy")).alias("information_gain"),
                # From previous select
                pl.col("parent_entropy"),
                pl.col("feature_value"),
            )
            .filter(pl.col("information_gain").is_not_nan())
            .sort("information_gain", descending=True)
            .head(1)
            .with_columns(feature=pl.lit(feature_name))
        )
        information_gain_dfs.append(information_gain_df)

    if isinstance(information_gain_dfs[0], pl.LazyFrame):
        information_gain_dfs = pl.collect_all(information_gain_dfs, streaming=self.streaming)

    information_gain_dfs = pl.concat(information_gain_dfs, how="vertical_relaxed").sort(
        "information_gain", descending=True
    )

    information_gain = 0
    if len(information_gain_dfs) > 0:
        best_params = information_gain_dfs.row(0, named=True)
        information_gain = best_params["information_gain"]

    if information_gain > 0:
        left_mask = data.select(filter=pl.col(best_params["feature"]) <= best_params["feature_value"])
        if isinstance(left_mask, pl.LazyFrame):
            left_mask = left_mask.collect(streaming=self.streaming)
        left_mask = left_mask["filter"]

        # Split data
        left_df = data.filter(left_mask)
        right_df = data.filter(~left_mask)

        left_subtree = self._build_tree(left_df, feature_names, target_name, unique_targets, depth + 1)
        right_subtree = self._build_tree(right_df, feature_names, target_name, unique_targets, depth + 1)

        if isinstance(data, pl.LazyFrame):
            target_distribution = (
                data.select(target_name)
                .collect(streaming=self.streaming)[target_name]
                .value_counts()
                .sort(target_name)["count"]
                .to_list()
            )
        else:
            target_distribution = data[target_name].value_counts().sort(target_name)["count"].to_list()

        return {
            "type": "node",
            "feature": best_params["feature"],
            "threshold": best_params["feature_value"],
            "information_gain": best_params["information_gain"],
            "entropy": best_params["parent_entropy"],
            "target_distribution": target_distribution,
            "left": left_subtree,
            "right": right_subtree,
        }
    else:
        return {"type": "leaf", "value": self.get_majority_class(data, target_name)}

This method is the heart of building the trees, and I will explain it step by step. First, when entering the method, it is checked whether the max depth stopping criterion is met.

if self.max_depth is not None and depth >= self.max_depth:
    return {"type": "leaf", "value": self.get_majority_class(data, target_name)}

If the current depth is equal to or greater than the max_depth, a node of the type leaf is returned. The value of the leaf corresponds to the majority class of the data. This is calculated as follows:

def get_majority_class(self, df: Union[pl.DataFrame, pl.LazyFrame], target_name: str) -> str:
    """
    Returns the majority class of a dataframe.

    :param df: The dataframe to evaluate.
    :param target_name: Name of the target column.
    :return: majority class.
    """
    majority_class = df.group_by(target_name).len().filter(pl.col("len") == pl.col("len").max()).select(target_name)
    if isinstance(majority_class, pl.LazyFrame):
        majority_class = majority_class.collect(streaming=self.streaming)
    return majority_class[target_name][0]

To get the majority class, the count of rows per target is determined by grouping over the target column and aggregating with len(). The target value that is present in most of the rows is returned as the majority class.
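The same counting logic can be written in a few lines of plain Python. This is only a sketch to show the idea, with a made-up function name and toy data; it is not part of the Polars implementation.

```python
from collections import Counter


def majority_class(targets):
    """Return the target value that occurs most often."""
    counts = Counter(targets)
    # most_common(1) returns a list with one (value, count) pair.
    return counts.most_common(1)[0][0]


# Toy targets (made up): class 1 appears three times, class 0 twice.
toy_targets = [0, 1, 1, 0, 1]
result = majority_class(toy_targets)
```

If two classes are tied, this sketch returns the one that was seen first, whereas the Polars version returns the first row that survives the filter, so the tie-breaking may differ.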

Information Gain as Splitting Criteria

To find a good split of the data, the information gain is used.

To get the information gain, the parent entropy and child entropy need to be calculated.
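The equations referred to later as Equation 1 and Equation 2 are not reproduced in this text. Assuming the standard definitions, which match the expressions used in the code, they can be written as follows. The symbols N, N_left, N_right, and p_c are notation chosen here, and the equation numbering is inferred from the references in the text.

```latex
% Equation 1 (assumed numbering): information gain of a split
IG = H(\text{parent}) - \left( \frac{N_{\text{left}}}{N} \, H(\text{left}) + \frac{N_{\text{right}}}{N} \, H(\text{right}) \right)

% Equation 2 (assumed numbering): entropy over the class proportions p_c
H = - \sum_{c} p_c \log_2 p_c
```

Here, N is the number of examples in the parent node, N_left and N_right are the numbers of examples on each side of the split, and p_c is the proportion of class c in the respective set.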

Calculating the Information Gain in Polars

The information gain is calculated for each feature value that is present in a feature column.

information_gain_df = (
    feature_data.group_by("feature_value")
    .agg(
        [
            pl.col(target_name)
            .filter(pl.col(target_name) == target_value)
            .len()
            .alias(f"class_{target_value}_count")
            for target_value in unique_targets
        ]
        + [pl.col(target_name).len().alias("count_examples")]
    )
    .sort("feature_value")

The feature values are grouped, and the count of each of the target values is assigned to them. Additionally, the total count of rows for that feature value is saved as count_examples. In the last step, the data is sorted by feature_value. This is needed to calculate the splits in the next step.

For the heart disease dataset, after the first calculation step, the data looks like this:

┌───────────────┬───────────────┬───────────────┬────────────────┐
│ feature_value ┆ class_0_count ┆ class_1_count ┆ count_examples │
│ ---           ┆ ---           ┆ ---           ┆ ---            │
│ i8            ┆ u32           ┆ u32           ┆ u32            │
╞═══════════════╪═══════════════╪═══════════════╪════════════════╡
│ 29            ┆ 2             ┆ 0             ┆ 2              │
│ 30            ┆ 1             ┆ 0             ┆ 1              │
│ 39            ┆ 1068          ┆ 331           ┆ 1399           │
│ 40            ┆ 975           ┆ 263           ┆ 1238           │
│ 41            ┆ 1052          ┆ 438           ┆ 1490           │
│ …             ┆ …             ┆ …             ┆ …              │
│ 60            ┆ 1054          ┆ 1460          ┆ 2514           │
│ 61            ┆ 695           ┆ 1408          ┆ 2103           │
│ 62            ┆ 566           ┆ 1125          ┆ 1691           │
│ 63            ┆ 572           ┆ 1517          ┆ 2089           │
│ 64            ┆ 479           ┆ 1217          ┆ 1696           │
└───────────────┴───────────────┴───────────────┴────────────────┘

Here, the feature age_years is processed. Class 0 stands for “no heart disease,” and class 1 stands for “heart disease.” The data is sorted by the age_years feature, and the columns contain the count of class 0, class 1, and the total count of examples with the respective feature value.

In the next step, the cumulative sum over the count of classes is calculated for each feature value.

    .select(
        [
            pl.col(f"class_{target_value}_count").cum_sum().alias(f"cum_sum_class_{target_value}_count")
            for target_value in unique_targets
        ]
        + [
            pl.col(f"class_{target_value}_count").sum().alias(f"sum_class_{target_value}_count")
            for target_value in unique_targets
        ]
        + [
            pl.col("count_examples").cum_sum().alias("cum_sum_count_examples"),
            pl.col("count_examples").sum().alias("sum_count_examples"),
        ]
        + [
            # From previous select
            pl.col("feature_value"),
        ]
    )
    .filter(
        # At least one example available on the right side
        pl.col("sum_count_examples")
        > pl.col("cum_sum_count_examples")
    )

The intuition behind it is that when a split is executed at a specific feature value, the left side includes the counts of target values from all smaller feature values. To be able to calculate the proportions, the total sum of the target values is calculated as well. The same procedure is repeated for count_examples, where the cumulative sum and the total sum are calculated too.
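The cumulative-sum trick can be illustrated with a small plain-Python sketch. The counts below are made up, and itertools.accumulate stands in for the Polars cum_sum() expression.

```python
from itertools import accumulate

# Toy per-value class counts (made up), already sorted by feature value.
feature_values = [29, 30, 39, 40]
class_0_counts = [3, 1, 4, 2]
class_1_counts = [0, 0, 2, 4]

# Cumulative counts: everything with a feature value <= the threshold goes left.
cum_class_0 = list(accumulate(class_0_counts))
cum_class_1 = list(accumulate(class_1_counts))
total_class_0 = sum(class_0_counts)
total_class_1 = sum(class_1_counts)

splits = []
for value, left_0, left_1 in zip(feature_values, cum_class_0, cum_class_1):
    # The right side is whatever is not on the left side.
    right_0 = total_class_0 - left_0
    right_1 = total_class_1 - left_1
    # Skip thresholds that leave no example on the right side.
    if right_0 + right_1 > 0:
        splits.append({"threshold": value, "left": (left_0, left_1), "right": (right_0, right_1)})
```

The largest feature value is dropped as a threshold because splitting there would put every example on the left side, which mirrors the filter in the Polars expression.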

After the calculation, the data looks like this:

┌──────────────┬─────────────┬─────────────┬─────────────┬─────────────┬─────────────┬─────────────┐
│ cum_sum_clas ┆ cum_sum_cla ┆ sum_class_0 ┆ sum_class_1 ┆ cum_sum_cou ┆ sum_count_e ┆ feature_val │
│ s_0_count    ┆ ss_1_count  ┆ _count      ┆ _count      ┆ nt_examples ┆ xamples     ┆ ue          │
│ ---          ┆ ---         ┆ ---         ┆ ---         ┆ ---         ┆ ---         ┆ ---         │
│ u32          ┆ u32         ┆ u32         ┆ u32         ┆ u32         ┆ u32         ┆ i8          │
╞══════════════╪═════════════╪═════════════╪═════════════╪═════════════╪═════════════╪═════════════╡
│ 3            ┆ 0           ┆ 27717       ┆ 26847       ┆ 3           ┆ 54564       ┆ 29          │
│ 4            ┆ 0           ┆ 27717       ┆ 26847       ┆ 4           ┆ 54564       ┆ 30          │
│ 1097         ┆ 324         ┆ 27717       ┆ 26847       ┆ 1421        ┆ 54564       ┆ 39          │
│ 2090         ┆ 595         ┆ 27717       ┆ 26847       ┆ 2685        ┆ 54564       ┆ 40          │
│ 3155         ┆ 1025        ┆ 27717       ┆ 26847       ┆ 4180        ┆ 54564       ┆ 41          │
│ …            ┆ …           ┆ …           ┆ …           ┆ …           ┆ …           ┆ …           │
│ 24302        ┆ 20162       ┆ 27717       ┆ 26847       ┆ 44464       ┆ 54564       ┆ 59          │
│ 25356        ┆ 21581       ┆ 27717       ┆ 26847       ┆ 46937       ┆ 54564       ┆ 60          │
│ 26046        ┆ 23020       ┆ 27717       ┆ 26847       ┆ 49066       ┆ 54564       ┆ 61          │
│ 26615        ┆ 24131       ┆ 27717       ┆ 26847       ┆ 50746       ┆ 54564       ┆ 62          │
│ 27216        ┆ 25652       ┆ 27717       ┆ 26847       ┆ 52868       ┆ 54564       ┆ 63          │
└──────────────┴─────────────┴─────────────┴─────────────┴─────────────┴─────────────┴─────────────┘

In the next step, the proportions are calculated for each feature value.

    .select(
        [
            (pl.col(f"cum_sum_class_{target_value}_count") / pl.col("cum_sum_count_examples")).alias(
                f"left_proportion_class_{target_value}"
            )
            for target_value in unique_targets
        ]
        + [
            (
                (pl.col(f"sum_class_{target_value}_count") - pl.col(f"cum_sum_class_{target_value}_count"))
                / (pl.col("sum_count_examples") - pl.col("cum_sum_count_examples"))
            ).alias(f"right_proportion_class_{target_value}")
            for target_value in unique_targets
        ]
        + [
            (pl.col(f"sum_class_{target_value}_count") / pl.col("sum_count_examples")).alias(
                f"parent_proportion_class_{target_value}"
            )
            for target_value in unique_targets
        ]
        + [
            # From previous select
            pl.col("cum_sum_count_examples"),
            pl.col("sum_count_examples"),
            pl.col("feature_value"),
        ]
    )

To calculate the proportions, the results from the previous step can be used. For the left proportion, the cumulative sum of each target value is divided by the cumulative sum of the example count. For the right proportion, we need to know how many examples we have on the right side for each target value. That is calculated by subtracting the cumulative sum of the target value from the total sum for the target value. The same calculation is used to determine the total count of examples on the right side, by subtracting the cumulative sum of the example count from the sum of the example count. Additionally, the parent proportion is calculated. This is done by dividing the sum of the target value counts by the total count of examples.

This is the resulting data after this step:

┌───────────┬───────────┬───────────┬───────────┬───┬───────────┬───────────┬───────────┬──────────┐
│ left_prop ┆ left_prop ┆ right_pro ┆ right_pro ┆ … ┆ parent_pr ┆ cum_sum_c ┆ sum_count ┆ feature_ │
│ ortion_cl ┆ ortion_cl ┆ portion_c ┆ portion_c ┆   ┆ oportion_ ┆ ount_exam ┆ _examples ┆ value    │
│ ass_0     ┆ ass_1     ┆ lass_0    ┆ lass_1    ┆   ┆ class_1   ┆ ples      ┆ ---       ┆ ---      │
│ ---       ┆ ---       ┆ ---       ┆ ---       ┆   ┆ ---       ┆ ---       ┆ u32       ┆ i8       │
│ f64       ┆ f64       ┆ f64       ┆ f64       ┆   ┆ f64       ┆ u32       ┆           ┆          │
╞═══════════╪═══════════╪═══════════╪═══════════╪═══╪═══════════╪═══════════╪═══════════╪══════════╡
│ 1.0       ┆ 0.0       ┆ 0.506259  ┆ 0.493741  ┆ … ┆ 0.493714  ┆ 3         ┆ 54564     ┆ 29       │
│ 1.0       ┆ 0.0       ┆ 0.50625   ┆ 0.49375   ┆ … ┆ 0.493714  ┆ 4         ┆ 54564     ┆ 30       │
│ 0.754902  ┆ 0.245098  ┆ 0.499605  ┆ 0.500395  ┆ … ┆ 0.493714  ┆ 1428      ┆ 54564     ┆ 39       │
│ 0.765596  ┆ 0.234404  ┆ 0.492739  ┆ 0.507261  ┆ … ┆ 0.493714  ┆ 2709      ┆ 54564     ┆ 40       │
│ 0.741679  ┆ 0.258321  ┆ 0.486929  ┆ 0.513071  ┆ … ┆ 0.493714  ┆ 4146      ┆ 54564     ┆ 41       │
│ …         ┆ …         ┆ …         ┆ …         ┆ … ┆ …         ┆ …         ┆ …         ┆ …        │
│ 0.545735  ┆ 0.454265  ┆ 0.333563  ┆ 0.666437  ┆ … ┆ 0.493714  ┆ 44419     ┆ 54564     ┆ 59       │
│ 0.539065  ┆ 0.460935  ┆ 0.305025  ┆ 0.694975  ┆ … ┆ 0.493714  ┆ 46922     ┆ 54564     ┆ 60       │
│ 0.529725  ┆ 0.470275  ┆ 0.297071  ┆ 0.702929  ┆ … ┆ 0.493714  ┆ 49067     ┆ 54564     ┆ 61       │
│ 0.523006  ┆ 0.476994  ┆ 0.282551  ┆ 0.717449  ┆ … ┆ 0.493714  ┆ 50770     ┆ 54564     ┆ 62       │
│ 0.513063  ┆ 0.486937  ┆ 0.296188  ┆ 0.703812  ┆ … ┆ 0.493714  ┆ 52859     ┆ 54564     ┆ 63       │
└───────────┴───────────┴───────────┴───────────┴───┴───────────┴───────────┴───────────┴──────────┘

Now that the proportions are available, the entropy can be calculated.

    .select(
        (
            -1
            * pl.sum_horizontal(
                [
                    (
                        pl.col(f"left_proportion_class_{target_value}")
                        * pl.col(f"left_proportion_class_{target_value}").log(base=2)
                    ).fill_nan(0.0)
                    for target_value in unique_targets
                ]
            )
        ).alias("left_entropy"),
        (
            -1
            * pl.sum_horizontal(
                [
                    (
                        pl.col(f"right_proportion_class_{target_value}")
                        * pl.col(f"right_proportion_class_{target_value}").log(base=2)
                    ).fill_nan(0.0)
                    for target_value in unique_targets
                ]
            )
        ).alias("right_entropy"),
        (
            -1
            * pl.sum_horizontal(
                [
                    (
                        pl.col(f"parent_proportion_class_{target_value}")
                        * pl.col(f"parent_proportion_class_{target_value}").log(base=2)
                    ).fill_nan(0.0)
                    for target_value in unique_targets
                ]
            )
        ).alias("parent_entropy"),
        # From previous select
        pl.col("cum_sum_count_examples"),
        pl.col("sum_count_examples"),
        pl.col("feature_value"),
    )

For the calculation of the entropy, Equation 2 is used. The left entropy is calculated using the left proportion, and the right entropy uses the right proportion. For the parent entropy, the parent proportion is used. In this implementation, pl.sum_horizontal() is used to sum the per-class terms in order to make use of possible optimizations from Polars. This can also be replaced with the Python-native sum() function.
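As a plain-Python counterpart, the entropy of a list of class proportions can be sketched as below. A proportion of zero would produce 0 * log2(0), which is why the Polars code applies fill_nan(0.0); this sketch simply skips zero proportions instead. The function name and inputs are illustrative assumptions.

```python
import math


def entropy(proportions):
    """Shannon entropy in bits; a proportion of zero contributes nothing."""
    return -sum(p * math.log2(p) for p in proportions if p > 0)


# A perfectly balanced binary node has the maximum entropy of one bit.
balanced = entropy([0.5, 0.5])
# A pure node has an entropy of zero.
pure = entropy([1.0, 0.0])
```

The pure case evaluates to negative zero in floating point, which compares equal to zero and matches the -0.0 values that appear in the left_entropy column for pure left sides.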

The data with the entropy values looks as follows:

┌──────────────┬───────────────┬────────────────┬─────────────────┬────────────────┬───────────────┐
│ left_entropy ┆ right_entropy ┆ parent_entropy ┆ cum_sum_count_e ┆ sum_count_exam ┆ feature_value │
│ ---          ┆ ---           ┆ ---            ┆ xamples         ┆ ples           ┆ ---           │
│ f64          ┆ f64           ┆ f64            ┆ ---             ┆ ---            ┆ i8            │
│              ┆               ┆                ┆ u32             ┆ u32            ┆               │
╞══════════════╪═══════════════╪════════════════╪═════════════════╪════════════════╪═══════════════╡
│ -0.0         ┆ 0.999854      ┆ 0.999853       ┆ 3               ┆ 54564          ┆ 29            │
│ -0.0         ┆ 0.999854      ┆ 0.999853       ┆ 4               ┆ 54564          ┆ 30            │
│ 0.783817     ┆ 1.0           ┆ 0.999853       ┆ 1427            ┆ 54564          ┆ 39            │
│ 0.767101     ┆ 0.999866      ┆ 0.999853       ┆ 2694            ┆ 54564          ┆ 40            │
│ 0.808516     ┆ 0.999503      ┆ 0.999853       ┆ 4177            ┆ 54564          ┆ 41            │
│ …            ┆ …             ┆ …              ┆ …               ┆ …              ┆ …             │
│ 0.993752     ┆ 0.918461      ┆ 0.999853       ┆ 44483           ┆ 54564          ┆ 59            │
│ 0.995485     ┆ 0.890397      ┆ 0.999853       ┆ 46944           ┆ 54564          ┆ 60            │
│ 0.997367     ┆ 0.880977      ┆ 0.999853       ┆ 49106           ┆ 54564          ┆ 61            │
│ 0.99837      ┆ 0.859431      ┆ 0.999853       ┆ 50800           ┆ 54564          ┆ 62            │
│ 0.999436     ┆ 0.872346      ┆ 0.999853       ┆ 52877           ┆ 54564          ┆ 63            │
└──────────────┴───────────────┴────────────────┴─────────────────┴────────────────┴───────────────┘

Almost there! The final step is still missing, which is calculating the child entropy and using that to get the information gain.

    .select(
        (
            pl.col("cum_sum_count_examples") / pl.col("sum_count_examples") * pl.col("left_entropy")
            + (pl.col("sum_count_examples") - pl.col("cum_sum_count_examples"))
            / pl.col("sum_count_examples")
            * pl.col("right_entropy")
        ).alias("child_entropy"),
        # From previous select
        pl.col("parent_entropy"),
        pl.col("feature_value"),
    )
    .select(
        (pl.col("parent_entropy") - pl.col("child_entropy")).alias("information_gain"),
        # From previous select
        pl.col("parent_entropy"),
        pl.col("feature_value"),
    )
    .filter(pl.col("information_gain").is_not_nan())
    .sort("information_gain", descending=True)
    .head(1)
    .with_columns(feature=pl.lit(feature_name))
)
information_gain_dfs.append(information_gain_df)

For the child entropy, the left and right entropy are weighted by the share of examples on each side of the split. The sum of both weighted entropy values is used as the child entropy. To calculate the information gain, we simply need to subtract the child entropy from the parent entropy, as can be seen in Equation 1. The best feature value is determined by sorting the data by information gain and selecting the first row. It is appended to a list that gathers the best feature values from all features.
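The weighting and the subtraction can be checked with a small plain-Python sketch that works directly on class counts. The helper names and the candidate splits are made up for illustration; the Polars implementation computes the same quantities column-wise.

```python
import math


def entropy_from_counts(counts):
    """Entropy in bits of a node, given its per-class example counts."""
    total = sum(counts)
    return -sum((count / total) * math.log2(count / total) for count in counts if count > 0)


def information_gain(left_counts, right_counts):
    """Parent entropy minus the size-weighted entropy of both children."""
    n_left = sum(left_counts)
    n_right = sum(right_counts)
    n_total = n_left + n_right
    parent_counts = [left + right for left, right in zip(left_counts, right_counts)]
    child_entropy = (
        n_left / n_total * entropy_from_counts(left_counts)
        + n_right / n_total * entropy_from_counts(right_counts)
    )
    return entropy_from_counts(parent_counts) - child_entropy


# Made-up candidate splits: threshold -> (left class counts, right class counts).
candidates = {
    30: ((4, 0), (6, 6)),
    39: ((8, 2), (2, 4)),
}
gains = {threshold: information_gain(left, right) for threshold, (left, right) in candidates.items()}
# Equivalent to sorting by information gain in descending order and taking the first row.
best_threshold = max(gains, key=gains.get)

# A split that separates the classes perfectly gains the full parent entropy.
perfect_gain = information_gain((5, 0), (0, 5))
```

Taking the maximum here plays the role of the sort followed by head(1) in the Polars expression.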

Before applying .head(1), the data looks as follows:

┌──────────────────┬────────────────┬───────────────┐
│ information_gain ┆ parent_entropy ┆ feature_value │
│ ---              ┆ ---            ┆ ---           │
│ f64              ┆ f64            ┆ i8            │
╞══════════════════╪════════════════╪═══════════════╡
│ 0.028388         ┆ 0.999928       ┆ 54            │
│ 0.027719         ┆ 0.999928       ┆ 52            │
│ 0.027283         ┆ 0.999928       ┆ 53            │
│ 0.026826         ┆ 0.999928       ┆ 50            │
│ 0.026812         ┆ 0.999928       ┆ 51            │
│ …                ┆ …              ┆ …             │
│ 0.010928         ┆ 0.999928       ┆ 62            │
│ 0.005872         ┆ 0.999928       ┆ 39            │
│ 0.004155         ┆ 0.999928       ┆ 63            │
│ 0.000072         ┆ 0.999928       ┆ 30            │
│ 0.000054         ┆ 0.999928       ┆ 29            │
└──────────────────┴────────────────┴───────────────┘

Here, it can be seen that the age feature value of 54 has the highest information gain. This feature value is collected for the age feature and has to compete against the other features.

Selecting the Best Split and Defining the Subtrees

To select the best split, the highest information gain needs to be found across all features.

if isinstance(information_gain_dfs[0], pl.LazyFrame):
    information_gain_dfs = pl.collect_all(information_gain_dfs, streaming=self.streaming)

information_gain_dfs = pl.concat(information_gain_dfs, how="vertical_relaxed").sort(
    "information_gain", descending=True
)

For that, the pl.collect_all() function is used on information_gain_dfs. This evaluates all LazyFrames in parallel, which makes the processing very efficient. The result is a list of Polars DataFrames, which are concatenated and sorted by information gain.

For the heart disease example, the data looks like this:

┌──────────────────┬────────────────┬───────────────┬─────────────┐
│ information_gain ┆ parent_entropy ┆ feature_value ┆ feature     │
│ ---              ┆ ---            ┆ ---           ┆ ---         │
│ f64              ┆ f64            ┆ f64           ┆ str         │
╞══════════════════╪════════════════╪═══════════════╪═════════════╡
│ 0.138032         ┆ 0.999909       ┆ 129.0         ┆ ap_hi       │
│ 0.09087          ┆ 0.999909       ┆ 85.0          ┆ ap_lo       │
│ 0.029966         ┆ 0.999909       ┆ 0.0           ┆ cholesterol │
│ 0.028388         ┆ 0.999909       ┆ 54.0          ┆ age_years   │
│ 0.01968          ┆ 0.999909       ┆ 27.435041     ┆ bmi         │
│ …                ┆ …              ┆ …             ┆ …           │
│ 0.000851         ┆ 0.999909       ┆ 0.0           ┆ active      │
│ 0.000351         ┆ 0.999909       ┆ 156.0         ┆ height      │
│ 0.000223         ┆ 0.999909       ┆ 0.0           ┆ smoke       │
│ 0.000098         ┆ 0.999909       ┆ 0.0           ┆ alco        │
│ 0.000031         ┆ 0.999909       ┆ 0.0           ┆ gender      │
└──────────────────┴────────────────┴───────────────┴─────────────┘

Out of all features, the ap_hi (systolic blood pressure) feature value of 129 results in the best information gain and thus is selected for the first split.

information_gain = 0
if len(information_gain_dfs) > 0:
    best_params = information_gain_dfs.row(0, named=True)
    information_gain = best_params["information_gain"]

In some cases, information_gain_dfs can be empty, for example, when all splits result in having only examples on the left or right side. If this is the case, the information gain is zero. Otherwise, we get the feature value with the highest information gain.

if information_gain > 0:
    left_mask = data.select(filter=pl.col(best_params["feature"]) <= best_params["feature_value"])
    if isinstance(left_mask, pl.LazyFrame):
        left_mask = left_mask.collect(streaming=self.streaming)
    left_mask = left_mask["filter"]

    # Split data
    left_df = data.filter(left_mask)
    right_df = data.filter(~left_mask)

    left_subtree = self._build_tree(left_df, feature_names, target_name, unique_targets, depth + 1)
    right_subtree = self._build_tree(right_df, feature_names, target_name, unique_targets, depth + 1)

    if isinstance(data, pl.LazyFrame):
        target_distribution = (
            data.select(target_name)
            .collect(streaming=self.streaming)[target_name]
            .value_counts()
            .sort(target_name)["count"]
            .to_list()
        )
    else:
        target_distribution = data[target_name].value_counts().sort(target_name)["count"].to_list()

    return {
        "type": "node",
        "feature": best_params["feature"],
        "threshold": best_params["feature_value"],
        "information_gain": best_params["information_gain"],
        "entropy": best_params["parent_entropy"],
        "target_distribution": target_distribution,
        "left": left_subtree,
        "right": right_subtree,
    }
else:
    return {"type": "leaf", "value": self.get_majority_class(data, target_name)}

When the information gain is greater than zero, the subtrees are defined. For that, the left mask is defined using the feature value that resulted in the best information gain. The mask is applied to the parent data to get the left data frame. The negation of the left mask is used to define the right data frame. Both left and right data frames are used to call the _build_tree() method again with the depth increased by one. As the last step, the target distribution is calculated. This is stored as additional information on the node and can be seen when plotting the tree along with the other information.
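The mask-and-negation step and the target distribution can be mimicked with plain Python lists. The rows, the target column name cardio, and the helper split_rows() are assumptions made for this sketch only; the threshold of 129 on ap_hi is taken from the example above.

```python
from collections import Counter

# Toy rows (made up); "cardio" is a hypothetical name for the target column.
rows = [
    {"ap_hi": 110, "cardio": 0},
    {"ap_hi": 120, "cardio": 0},
    {"ap_hi": 135, "cardio": 1},
    {"ap_hi": 150, "cardio": 1},
    {"ap_hi": 125, "cardio": 1},
]


def split_rows(rows, feature, threshold):
    """Split rows with a boolean mask (left) and its negation (right)."""
    mask = [row[feature] <= threshold for row in rows]
    left = [row for row, keep in zip(rows, mask) if keep]
    right = [row for row, keep in zip(rows, mask) if not keep]
    return left, right


left_rows, right_rows = split_rows(rows, "ap_hi", 129)

# Count of examples per target value, ordered by target value.
target_counts = Counter(row["cardio"] for row in rows)
target_distribution = [target_counts[target] for target in sorted(target_counts)]
```

Every row ends up on exactly one side, so the two child calls together always cover the parent data.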

When the information gain is zero, a leaf is returned. It contains the majority class of the given data.
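To make the zero-gain case concrete, here is a minimal sketch of an entropy-based information gain computed from class counts. The helper names entropy and information_gain and the example counts are made up for illustration; the repository may compute the same quantity differently.

```python
from math import log2


def entropy(counts):
    """Shannon entropy (in bits) of a list of class counts."""
    total = sum(counts)
    if total == 0:
        return 0.0
    return -sum((c / total) * log2(c / total) for c in counts if c > 0)


def information_gain(left_counts, right_counts):
    """Parent entropy minus the size-weighted entropy of both children."""
    parent_counts = [l + r for l, r in zip(left_counts, right_counts)]
    n_left, n_right = sum(left_counts), sum(right_counts)
    n = n_left + n_right
    children = (n_left / n) * entropy(left_counts) + (n_right / n) * entropy(right_counts)
    return entropy(parent_counts) - children


# A split that separates both classes perfectly (hypothetical counts).
perfect = information_gain([4, 0], [0, 4])

# A split that sends every example to the left side: nothing is gained,
# so a tree builder like the one above would return a leaf.
degenerate = information_gain([4, 4], [0, 0])
```

With these counts the perfect split recovers the full parent entropy, while the degenerate split yields no gain at all.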

Make predictions

It’s possible to make predictions in two different ways. If the input data is small, the predict() method can be used.

def predict(self, data: Iterable[dict]):
    def _predict_sample(node, sample):
        if node["type"] == "leaf":
            return node["value"]
        if sample[node["feature"]] <= node["threshold"]:
            return _predict_sample(node["left"], sample)
        else:
            return _predict_sample(node["right"], sample)

    predictions = [_predict_sample(self.tree, sample) for sample in data]
    return predictions

Here, the data can be provided as an iterable of dicts. Each dict contains the feature names as keys and the feature values as values. The _predict_sample() method follows the path through the tree until a leaf node is reached. The leaf contains the class that is assigned to the respective example.
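The traversal can be tried out on a hand-written tree. This is a minimal sketch: the toy tree, its features (age, bmi), and its thresholds are invented for illustration, and predict is written as a standalone function rather than the class method.

```python
from typing import Iterable


def predict(tree: dict, data: Iterable[dict]) -> list:
    """Follow each sample from the root down to a leaf and return the leaf values."""

    def _predict_sample(node, sample):
        if node["type"] == "leaf":
            return node["value"]
        if sample[node["feature"]] <= node["threshold"]:
            return _predict_sample(node["left"], sample)
        return _predict_sample(node["right"], sample)

    return [_predict_sample(tree, sample) for sample in data]


# Hypothetical tree using the same dict layout as the nodes built during training.
toy_tree = {
    "type": "node",
    "feature": "age",
    "threshold": 50,
    "left": {"type": "leaf", "value": 0},
    "right": {
        "type": "node",
        "feature": "bmi",
        "threshold": 27.5,
        "left": {"type": "leaf", "value": 0},
        "right": {"type": "leaf", "value": 1},
    },
}

samples = [
    {"age": 42, "bmi": 31.0},  # age <= 50, so the left leaf is reached
    {"age": 63, "bmi": 24.0},  # age > 50, bmi <= 27.5
    {"age": 58, "bmi": 30.2},  # age > 50, bmi > 27.5
]
predictions = predict(toy_tree, samples)
```

Each sample costs one Python-level recursion per tree level, which is fine for a handful of rows but becomes slow for large inputs.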

def predict_many(self, data: Union[pl.DataFrame, pl.LazyFrame]) -> List[Union[int, float]]:
    """
    Predict method.

    :param data: Polars DataFrame or LazyFrame.
    :return: List of predicted target values.
    """
    if self.categorical_mappings:
        data = self.apply_categorical_mappings(data)

    def _predict_many(node, temp_data):
        if node["type"] == "node":
            left = _predict_many(node["left"], temp_data.filter(pl.col(node["feature"]) <= node["threshold"]))
            right = _predict_many(node["right"], temp_data.filter(pl.col(node["feature"]) > node["threshold"]))
            return pl.concat([left, right], how="diagonal_relaxed")
        else:
            return temp_data.select(pl.col("temp_prediction_index"), pl.lit(node["value"]).alias("prediction"))

    data = data.with_row_index("temp_prediction_index")
    predictions = _predict_many(self.tree, data).sort("temp_prediction_index").select(pl.col("prediction"))

    # Convert predictions to a list
    if isinstance(predictions, pl.LazyFrame):
        # Although the execution plan reports no streaming, using streaming here significantly
        # increases the performance and reduces the memory footprint.
        predictions = predictions.collect(streaming=True)

    predictions = predictions["prediction"].to_list()
    return predictions

If a large set of examples has to be predicted, it’s more efficient to use the predict_many() method. It makes use of the advantages that polars provides in terms of parallel processing and memory efficiency.

The data can be provided as a polars DataFrame or LazyFrame. Similarly to the _build_tree() method in the training process, a _predict_many() method is called recursively. All examples in the data are filtered into subtrees until a leaf node is reached. Examples that took the same path to a leaf node get the same prediction value assigned. At the end of the process, all sub-frames of examples are concatenated again. Since this does not preserve the original order, a temporary prediction index is set at the beginning of the process. When all predictions are done, the original order is restored by sorting by that index.

Using the classifier on a dataset

A usage example for the decision tree classifier can be found here. The decision tree is trained on a heart disease dataset. A train and a test set are defined to evaluate the performance of the implementation. After the training, the tree is plotted and saved to a file.

With a max depth of 4, the resulting tree looks as follows:

It achieves a train and test accuracy of 73% on the given data.

Runtime comparison

One goal of using polars as a backend for decision trees is to explore the runtime and memory usage and compare them to other frameworks. For that, I created a memory profiling script that can be found here.

The script compares this implementation, which is called “efficient-trees”, against sklearn and lightgbm. For efficient-trees, both the lazy streaming variant and the non-lazy in-memory variant are tested.

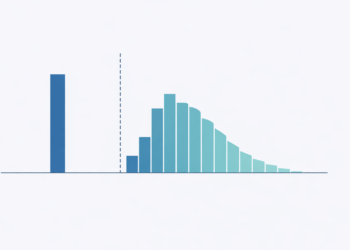

In the graph, it can be seen that lightgbm is the fastest and most memory-efficient framework. Since it introduced the possibility of using arrow datasets a while ago, the data can be processed efficiently. However, since the whole dataset still needs to be loaded and can’t be streamed, there are still potential scaling issues.

The next best framework is efficient-trees, without and with streaming. While efficient-trees without streaming has a better runtime, the streaming variant uses less memory.

The sklearn implementation achieves the worst results in terms of memory usage and runtime. Since the data needs to be provided as a numpy array, the memory usage grows a lot. The runtime can be explained by the use of only one CPU core. Support for multi-threading or multi-processing doesn’t exist yet.

Deep dive: Streaming in polars

As can be seen in the comparison of the frameworks, the possibility of streaming the data instead of holding it in memory is what sets this implementation apart from the other frameworks. However, the streaming engine is still considered an experimental feature, and not all operations are compatible with streaming yet.

To get a better understanding of what happens in the background, a look into the execution plan is useful. Let’s jump back into the training process and get the execution plan for the following operation:

def fit(self, data: Union[pl.DataFrame, pl.LazyFrame], target_name: str) -> None:
    """
    Fit method to train the decision tree.

    :param data: Polars DataFrame or LazyFrame containing the training data.
    :param target_name: Name of the target column
    """
    columns = data.collect_schema().names()
    feature_names = [col for col in columns if col != target_name]

    # Shrink dtypes
    data = data.select(pl.all().shrink_dtype()).with_columns(
        pl.col(target_name).cast(pl.UInt64).shrink_dtype().alias(target_name)
    )

The execution plan for data can be created with the following command:

data.explain(streaming=True)

This returns the execution plan for the LazyFrame.

WITH_COLUMNS:

[col("cardio").strict_cast(UInt64).shrink_dtype().alias("cardio")]

SELECT [col("gender").shrink_dtype(), col("height").shrink_dtype(), col("weight").shrink_dtype(), col("ap_hi").shrink_dtype(), col("ap_lo").shrink_dtype(), col("cholesterol").shrink_dtype(), col("gluc").shrink_dtype(), col("smoke").shrink_dtype(), col("alco").shrink_dtype(), col("active").shrink_dtype(), col("cardio").shrink_dtype(), col("age_years").shrink_dtype(), col("bmi").shrink_dtype()] FROM

STREAMING:

DF ["gender", "height", "weight", "ap_hi"]; PROJECT 13/13 COLUMNS; SELECTION: None

The keyword that is important here is STREAMING. It can be seen that the initial dataset loading happens in streaming mode, but when shrinking the dtypes, the whole dataset needs to be loaded into memory. Since the dtype shrinking is not a necessary part, I remove it temporarily to explore up to which operation streaming is supported.

The next problematic operation is assigning the categorical features.

def apply_categorical_mappings(self, data: Union[pl.DataFrame, pl.LazyFrame]) -> Union[pl.DataFrame, pl.LazyFrame]:
    """
    Apply categorical mappings on input frame.

    :param data: Polars DataFrame or LazyFrame with categorical columns.
    :return: Polars DataFrame or LazyFrame with mapped categorical columns
    """
    return data.with_columns(
        [pl.col(col).replace(self.categorical_mappings[col]).cast(pl.UInt32) for col in self.categorical_columns]
    )

The replace expression doesn’t support the streaming mode. Even after removing the cast, streaming is not used, which can be seen in the execution plan.

WITH_COLUMNS:
[col("gender").replace([Series, Series]), col("cholesterol").replace([Series, Series]), col("gluc").replace([Series, Series]), col("smoke").replace([Series, Series]), col("alco").replace([Series, Series]), col("active").replace([Series, Series])]
STREAMING:
DF ["gender", "height", "weight", "ap_hi"]; PROJECT */13 COLUMNS; SELECTION: None

Moving on, I also remove the support for categorical features. What happens next is the calculation of the information gain.

information_gain_df = (
    feature_data.group_by("feature_value")
    .agg(
        [
            pl.col(target_name)
            .filter(pl.col(target_name) == target_value)
            .len()
            .alias(f"class_{target_value}_count")
            for target_value in unique_targets
        ]
        + [pl.col(target_name).len().alias("count_examples")]
    )
    .sort("feature_value")
)

Unfortunately, already in the first part of the calculation, the streaming mode is not supported anymore. Here, using pl.col().filter() prevents us from streaming the data.

SORT BY [col("feature_value")]
AGGREGATE
[col("cardio").filter([(col("cardio")) == (1)]).count().alias("class_1_count"), col("cardio").filter([(col("cardio")) == (0)]).count().alias("class_0_count"), col("cardio").count().alias("count_examples")] BY [col("feature_value")] FROM
STREAMING:
RENAME
simple π 2/2 ["gender", "cardio"]
DF ["gender", "height", "weight", "ap_hi"]; PROJECT 2/13 COLUMNS; SELECTION: col("gender").is_not_null()

Since this is not so easy to change, I’ll stop the exploration here. It can be concluded that in the decision tree implementation with a polars backend, the full potential of streaming can’t be used yet, since important operators are still missing streaming support. As the streaming mode is under active development, it might become possible to run most of the operators, or even the whole calculation of the decision tree, in streaming mode in the future.

Conclusion

In this blog post, I presented my custom implementation of a decision tree using polars as a backend. I showed implementation details and compared it to other decision tree frameworks. The comparison shows that this implementation can outperform sklearn in terms of runtime and memory usage. But there are still other frameworks like lightgbm that provide a better runtime and more efficient processing. There’s a lot of potential in the streaming mode when using a polars backend. Currently, some operators prevent an end-to-end streaming approach due to a lack of streaming support, but this is under active development. When polars makes progress with that, it’s worth revisiting this implementation and comparing it to other frameworks again.