This November 30 marks the second anniversary of ChatGPT’s launch, an event that sent shockwaves through technology, society, and the economy. The space opened by this milestone has not always made it easy, or perhaps even possible, to separate reality from expectations. For example, this year Nvidia became the most valuable public company in the world during a stunning bullish rally. The company, which manufactures hardware used by models like ChatGPT, is now worth seven times what it was two years ago. The obvious question for everyone is: Is it really worth that much, or are we in the midst of a collective delusion? This question, and not its eventual answer, defines the current moment.

AI is making waves not just in the stock market. Last month, for the first time in history, prominent figures in artificial intelligence were awarded the Nobel Prizes in Physics and Chemistry. John J. Hopfield and Geoffrey E. Hinton received the Physics Nobel for their foundational contributions to neural network development. In Chemistry, Demis Hassabis and John Jumper were recognized for AlphaFold’s advances in protein structure prediction using artificial intelligence. These awards generated surprise on one hand and understandable disappointment among traditional scientists on the other, as computational methods took center stage.

In this context, I aim to review what has happened since that November, reflecting on the tangible and potential impact of generative AI to date, considering which promises have been fulfilled, which remain in the running, and which seem to have fallen by the wayside.

Let’s begin by recalling the day of the launch. ChatGPT 3.5 was a chatbot far superior to anything previously known in terms of discourse and intelligence capabilities. The difference between what was possible at the time and what ChatGPT could do generated enormous fascination, and the product went viral rapidly: it reached 100 million users in just two months, far surpassing many applications considered viral (TikTok, Instagram, Pinterest, Spotify, etc.). It also entered mass media and public debate: AI landed in the mainstream, and suddenly everyone was talking about ChatGPT. To top it off, just a few months later, OpenAI launched GPT-4, a model vastly superior to 3.5 in intelligence and also capable of understanding images.

The situation sparked debates about the many possibilities and problems inherent to this specific technology, including copyright, misinformation, productivity, and labor market issues. It also raised concerns about the medium- and long-term risks of advancing AI research, such as existential risk (the “Terminator” scenario), the end of work, and the potential for artificial consciousness. In this broad and passionate discussion, we heard a wide range of opinions. Over time, I believe the debate began to mature and temper. It took us a while to adapt to this product because ChatGPT’s advancement left us all somewhat offside. What has happened since then?

As far as technology companies are concerned, these past two years have been a roller coaster. The appearance on the scene of OpenAI, with its futuristic advances and its CEO with a “startup” spirit and look, raised questions about Google’s technological leadership, which until then had been undisputed. Google, for its part, did everything it could to confirm these doubts, repeatedly humiliating itself in public. First came the embarrassment of the launch of Bard, the chatbot designed to compete with ChatGPT. In the demo video, the model made a factual error: when asked about the James Webb Space Telescope, it claimed it was the first telescope to photograph planets outside the solar system, which is false. This misstep caused Google’s stock to drop by 9% in the following week. Later, during the presentation of its new Gemini model (another competitor, this time to GPT-4), Google lost credibility again when it was revealed that the incredible capabilities showcased in the demo (which could have placed it at the cutting edge of research) were, in reality, fabricated, based on much more limited capabilities.

Meanwhile, Microsoft, the archaic company of Bill Gates that produced the old Windows 95 and was as hated by young people as Google was loved, reappeared and allied with the small David, integrating ChatGPT into Bing and presenting itself as agile and defiant. “I want people to know we made them dance,” said Satya Nadella, Microsoft’s CEO, referring to Google. In 2023, Microsoft rejuvenated while Google aged.

This situation persisted, and OpenAI remained for some time the undisputed leader in both technical evaluations and subjective user feedback (known as “vibe checks”), with GPT-4 at the forefront. But over time this changed. Just as GPT-4 had achieved unique leadership in early 2023, by mid-2024 its close successor (GPT-4o) was competing with others of its caliber: Google’s Gemini 1.5 Pro, Anthropic’s Claude 3.5 Sonnet, and xAI’s Grok 2. What innovation gives, innovation takes away.

This scenario could be shifting again with OpenAI’s recent announcement of o1 in September 2024 and rumors of new launches in December. For now, however, regardless of how good o1 may be (we’ll talk about it shortly), it doesn’t seem to have caused the same seismic impact as ChatGPT or conveyed the same sense of an unbridgeable gap with the rest of the competitive landscape.

To round out the scene of hits, falls, and epic comebacks, we must talk about the open-source world. This new AI era began with two gut punches to the open-source community. First, OpenAI, despite what its name implies, was a pioneer in halting the public disclosure of fundamental technological advancements. Before OpenAI, the norms of artificial intelligence research (at least during the golden era before 2022) entailed detailed publication of research findings. During that period, major corporations fostered a positive feedback loop with academia and published papers, something previously uncommon. Indeed, ChatGPT and the generative AI revolution as a whole are based on a 2017 paper from Google, the famous Attention Is All You Need, which introduced the Transformer neural network architecture. This architecture underpins all current language models and is the “T” in GPT. In a dramatic plot twist, OpenAI leveraged this public discovery by Google to gain an advantage and began pursuing closed-door research, with GPT-4’s launch marking the turning point between these two eras: OpenAI disclosed nothing about the inner workings of this advanced model. From that moment, many closed models, such as Gemini 1.5 Pro and Claude Sonnet, began to emerge, fundamentally shifting the research ecosystem for the worse.

The second blow to the open-source community was the sheer scale of the new models. Until GPT-2, a modest GPU was sufficient to train deep learning models. Starting with GPT-3, infrastructure costs skyrocketed, and training models became inaccessible to individuals or most institutions. Fundamental advancements fell into the hands of a few major players.

But after these blows, and with everyone expecting a knockout, the open-source world fought back and proved itself capable of rising to the occasion. For everyone’s benefit, it had an unexpected champion. Mark Zuckerberg, the most hated reptilian android on the planet, made a radical change of image by positioning himself as the flagbearer of open source and freedom in the generative AI field. Meta, the conglomerate that controls much of the digital communication fabric of the West according to its own design and will, took on the task of bringing open source into the LLM era with its LLaMa model line. It’s definitely a bad time to be a moral absolutist. The LLaMa line began with timid open licenses and limited capabilities (although the community made significant efforts to believe otherwise). However, with the recent releases of LLaMa 3.1 and 3.2, the gap with private models has begun to narrow significantly. This has allowed the open-source world and public research to remain at the forefront of technological innovation.

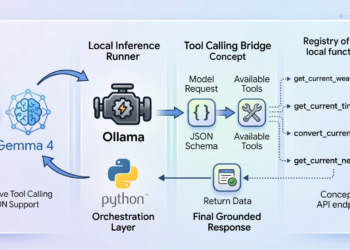

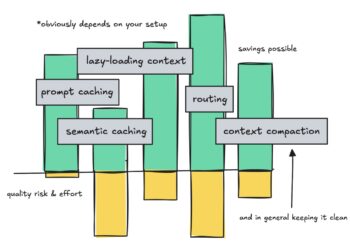

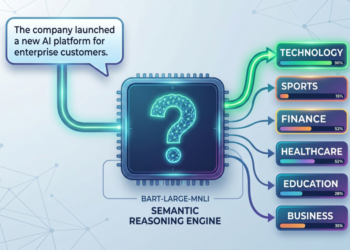

Over the past two years, research into ChatGPT-like models, known as large language models (LLMs), has been prolific. The first fundamental advancement, now taken for granted, is that companies managed to increase the context windows of models (how many words they can read as input and generate as output) while dramatically reducing costs per word. We’ve also seen models become multimodal, accepting not only text but also images, audio, and video as input. Additionally, they have been enabled to use tools (most notably, internet search) and have steadily improved in overall capacity.

On another front, various quantization and distillation techniques have emerged, enabling the compression of enormous models into smaller versions, even to the point of running language models on desktop computers (albeit sometimes at the cost of unacceptable performance reductions). This optimization trend appears to be on a positive trajectory, bringing us closer to small language models (SLMs) that could eventually run on smartphones.
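To make the idea of quantization concrete, here is a minimal sketch of one of its simplest forms: symmetric 8-bit quantization of a handful of weights. It is only an illustration under my own assumptions. The function names and the sample weights are hypothetical, and real LLM quantization schemes (per-channel or per-group scales, 4-bit formats, calibration data) are considerably more elaborate.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.82, -1.27, 0.003, 0.45, -0.61]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, scale, max_error)
```

Each weight now fits in one byte instead of four (for 32-bit floats), at the price of a small rounding error. The very small weight in the example collapses to zero, which hints at why aggressive compression can degrade a model’s quality.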

On the downside, no significant progress has been made in controlling the infamous hallucinations: false yet plausible-sounding outputs generated by models. Once a quaint novelty, this issue now seems confirmed as a structural feature of the technology. For those of us who use this technology in our daily work, it’s frustrating to rely on a tool that behaves like an expert most of the time but commits gross errors or outright fabricates information roughly one out of every ten times. In this sense, Yann LeCun, the head of Meta AI and a major figure in AI, seems vindicated, as he had adopted a more deflationary stance on LLMs during the 2023 hype peak.

However, pointing out the limitations of LLMs doesn’t mean the debate is settled about what they’re capable of or where they might take us. For instance, Sam Altman believes the current research program still has much to offer before hitting a wall, and the market, as we’ll see shortly, seems to agree. Many of the developments we’ve seen over the past two years support this optimism. OpenAI launched its voice assistant and an improved version capable of near-real-time interaction with interruptions, like human conversations rather than turn-taking. More recently, we’ve seen the first advanced attempts at LLMs gaining access to and control over users’ computers, as demonstrated in the GPT-4o demo (not yet released) and in Claude 3.5, which is available to end users. While these tools are still in their infancy, they offer a glimpse of what the near future could look like, with LLMs having greater agency. Similarly, there have been numerous breakthroughs in automating software engineering, highlighted by debatable milestones like Devin, the first “artificial software engineer.” While its demo was heavily criticized, this area, despite the hype, has shown undeniable, impactful progress. For example, in the SWE-bench benchmark, used to evaluate AI models’ abilities to solve software engineering problems, the best models at the start of the year could solve less than 13% of exercises. As of now, that figure exceeds 49%, justifying confidence in the current research program to enhance LLMs’ planning and complex task-solving capabilities.

Along the same lines, OpenAI’s recent announcement of the o1 model signals a new line of research with significant potential, despite the currently released version (o1-preview) not being far ahead of what’s already known. In fact, o1 is based on a novel idea: leveraging inference time, not training time, to improve the quality of generated responses. With this approach, the model doesn’t immediately produce the most probable next word but has the ability to “pause to think” before responding. One of the company’s researchers suggested that, eventually, these models could use hours or even days of computation before producing a response. Preliminary results have sparked high expectations, as using inference time to optimize quality was not previously considered viable. We now await subsequent models in this line (o2, o3, o4) to confirm whether it is as promising as it currently seems.
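OpenAI has not disclosed how o1 works, so the following is not its method. It is only a toy sketch of the general principle of spending more computation at inference time to get better answers, using one of the simplest generic techniques: sampling several answers and taking a majority vote. The "solver" is a hypothetical stand-in for a model that answers correctly 60% of the time, and every name and number here is an assumption chosen for illustration.

```python
import random
from collections import Counter


def noisy_solver(question, rng):
    """Hypothetical stand-in for one sampled model answer: correct 60% of the time."""
    correct = question["answer"]
    if rng.random() < 0.6:
        return correct
    # Otherwise return a nearby wrong answer.
    return correct + rng.choice([-2, -1, 1, 2])


def answer_with_budget(question, num_samples, rng):
    """Spend more inference compute: sample several answers and keep the most common."""
    votes = Counter(noisy_solver(question, rng) for _ in range(num_samples))
    return votes.most_common(1)[0][0]


def accuracy(num_samples, trials=2000, seed=0):
    """Estimate how often the voted answer is right for a given sampling budget."""
    rng = random.Random(seed)
    question = {"prompt": "17 + 25", "answer": 42}
    hits = sum(
        answer_with_budget(question, num_samples, rng) == question["answer"]
        for _ in range(trials)
    )
    return hits / trials


for budget in (1, 5, 25):
    print(budget, accuracy(budget))
```

In this toy setting, accuracy climbs as the sampling budget grows, because the wrong answers are scattered while the right one keeps recurring. Whether and how far this kind of trade-off scales for real reasoning tasks is precisely the open question this research line raises.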

Beyond language models, these two years have seen enormous advancements in other areas. First, we must mention image generation. Text-to-image models began to gain traction even before chatbots and have continued developing at an accelerated pace, expanding into video generation. This field reached a high point with the introduction of OpenAI’s Sora, a model capable of producing extremely high-quality videos, though it was not released. Slightly less known but equally impressive are advances in music generation, with platforms like Suno and Udio, and in voice generation, which has undergone a revolution and achieved extraordinarily high-quality standards, led by Eleven Labs.

It has undoubtedly been two intense years of remarkable technological progress and almost daily innovations for those of us involved in the field.

If we turn our attention to the financial aspect of this phenomenon, we will see enormous amounts of capital being poured into the world of AI in a sustained and growing manner. We are currently in the midst of an AI gold rush, and no one wants to be left out of a technology that its inventors, modestly, have presented as equivalent to the steam engine, the printing press, or the internet.

It may be telling that the company that has capitalized the most on this frenzy doesn’t sell AI but rather the hardware that serves as its infrastructure, aligning with the old adage that in a gold rush, a good way to get rich is by selling shovels and picks. As mentioned earlier, Nvidia has positioned itself as the most valuable company in the world, reaching a market capitalization of $3.5 trillion. For context, $3,500,000,000,000 is a figure far greater than France’s GDP.

On the other hand, if we look at the list of publicly traded companies with the highest market value, we see tech giants linked partially or entirely to AI promises dominating the podium. Apple, Nvidia, Microsoft, and Google are the top four as of the date of this writing, with a combined capitalization exceeding $12 trillion. For reference, in November 2022, the combined capitalization of these four companies was less than half of this value. Meanwhile, generative AI startups in Silicon Valley are raising record-breaking investments. The AI market is bullish.

While the technology advances fast, the business model for generative AI, beyond the major LLM providers and a few specific cases, remains unclear. As this bullish frenzy continues, some voices, including recent economics Nobel laureate Daron Acemoglu, have expressed skepticism about AI’s ability to justify the massive amounts of money being poured into it. For instance, in a Bloomberg interview, Acemoglu argues that current generative AI will be able to automate less than 5% of existing tasks in the next decade, making it unlikely to spark the productivity revolution investors anticipate.

Is this AI fever or rather AI feverish delirium? For now, the bullish rally shows no signs of stopping, and like any bubble, it will be easy to recognize in hindsight. But while we’re in it, it’s unclear if there will be a correction and, if so, when it might happen. Are we in a bubble about to burst, as Acemoglu believes, or, as one investor suggested, is Nvidia on its way to becoming a $50 trillion company within a decade? This is the million-dollar question and, unfortunately, dear reader, I do not know the answer. Everything seems to indicate that, just as in the dot-com bubble, we will emerge from this situation with some companies riding the wave and many underwater.

Let’s now discuss the broader social impact of generative AI’s arrival. The leap in quality represented by ChatGPT, compared to the socially known technological horizon before its launch, caused significant commotion, opening debates about the opportunities and risks of this specific technology, as well as the potential opportunities and risks of more advanced technological developments.

The problem of the future

The debate over the proximity of artificial general intelligence (AGI), meaning AI reaching human or superhuman capabilities, gained public relevance when Geoffrey Hinton (now a Physics Nobel laureate) resigned from his position at Google to warn about the risks such development could pose. Existential risk (the possibility that a super-capable AI could spiral out of control and either annihilate or subjugate humanity) moved out of the realm of fiction to become a concrete political issue. We saw prominent figures, with moderate and non-alarmist profiles, express concern in public debates and even in U.S. Senate hearings. They warned of the possibility of AGI arriving within the next ten years and the enormous problems this would entail.

The urgency that surrounded this debate now seems to have faded, and in hindsight, AGI appears further away than it did in 2023. It’s common to overestimate achievements immediately after they occur, just as it’s common to underestimate them over time. This latter phenomenon even has a name: the AI Effect, where major advancements in the field lose their initial luster over time and cease to be considered “true intelligence.” If today the ability to generate coherent discourse, like the ability to play chess, is no longer surprising, this should not distract us from the timeline of progress in this technology. In 1997, Deep Blue defeated chess champion Garry Kasparov. In 2016, AlphaGo defeated Go master Lee Sedol. And in 2022, ChatGPT produced high-quality, articulate speech, even challenging the famous Turing Test as a benchmark for determining machine intelligence. I believe it’s important to sustain discussions about future risks even when they no longer seem imminent or urgent. Otherwise, cycles of fear and calm prevent mature debate. Whether through the research direction opened by o1 or new pathways, it’s likely that within a few years, we’ll see another breakthrough on the scale of ChatGPT in 2022, and it would be wise to address the relevant discussions before that happens.

A separate chapter on AGI and AI safety involves the corporate drama at OpenAI, worthy of prime-time television. In late 2023, Sam Altman was abruptly removed by the board of directors. Although the full details were never clarified, Altman’s detractors pointed to an alleged culture of secrecy and disagreements over safety issues in AI development. The decision sparked an immediate rebellion among OpenAI employees and drew the attention of Microsoft, the company’s largest investor. In a dramatic twist, Altman was reinstated, and the board members who removed him were dismissed. This conflict left a rift within OpenAI: Jan Leike, the head of AI safety research, joined Anthropic, while Ilya Sutskever, OpenAI’s co-founder and a central figure in its AI development, departed to create Safe Superintelligence Inc. This seems to confirm that the original dispute centered around the importance placed on safety. To conclude, recent rumors suggest OpenAI could lose its nonprofit status and grant shares to Altman, triggering another wave of resignations within the company’s leadership and intensifying a sense of instability.

From a technical perspective, we saw a significant breakthrough in AI safety from Anthropic. The company achieved a fundamental milestone in LLM interpretability, helping to better understand the “black box” nature of these models. Through their discovery of the polysemantic nature of neurons and a method for extracting neural activation patterns representing concepts, the primary barrier to controlling Transformer models seems to have been broken, at least in terms of their potential to deceive us. The ability to deliberately alter circuits, actively modifying the observable behavior of these models, is also promising and brought some peace of mind regarding the gap between the capabilities of the models and our understanding of them.

The problems of the present

Setting aside the future of AI and its potential impacts, let’s focus on the tangible effects of generative AI. Unlike the arrival of the internet or social media, this time society seemed to react quickly, demonstrating concern about the implications and challenges posed by this new technology. Beyond the deep debate on existential risks mentioned earlier, centered on future technological development and the pace of progress, the impacts of existing language models have also been widely discussed. The main issues with generative AI include the fear of amplifying misinformation and digital pollution, significant problems with copyright and private data use, and the impact on productivity and the labor market.

Regarding misinformation, one study suggests that, at least for now, there hasn’t been a significant increase in exposure to misinformation due to generative AI. While this is difficult to confirm definitively, my personal impressions align: although misinformation remains prevalent, and may have even increased in recent years, it hasn’t undergone a significant phase change attributable to the emergence of generative AI. This doesn’t mean misinformation isn’t a critical issue today. The weaker thesis here is that generative AI doesn’t seem to have significantly worsened the problem, at least not yet.

However, we have seen instances of deep fakes, such as recent cases involving AI-generated pornographic material using real people’s faces, and more seriously, cases in schools where minors, particularly young girls, were affected. These cases are extremely serious, and it’s crucial to bolster judicial and law enforcement systems to address them. However, they appear, at least preliminarily, to be manageable and, in the grand scheme, represent relatively minor impacts compared to the speculative nightmare of misinformation fueled by generative AI. Perhaps legal systems will take longer than we would like, but there are signs that institutions may be up to the task, at least as far as deep fakes of underage porn are concerned, as illustrated by the exemplary 18-year sentence received by a person in the United Kingdom for creating and distributing this material.

Secondly, concerning the impact on the labor market and productivity, the flip side of the market boom, the debate remains unresolved. It’s unclear how far this technology will go in increasing worker productivity or in reducing or increasing jobs. Online, one can find a wide range of opinions about this technology’s impact. Claims like “AI replaces tasks, not people” or “AI won’t replace you, but a person using AI will” are made with great confidence yet without any supporting evidence, something that ironically recalls the hallucinations of a language model. It’s true that ChatGPT cannot perform complex tasks, and those of us who use it daily know its significant and frustrating limitations. But it’s also true that tasks like drafting professional emails or reviewing large amounts of text for specific information have become much faster. In my experience, productivity in programming and data science has increased significantly with AI-assisted programming environments like Copilot or Cursor. In my team, junior profiles have gained greater autonomy, and everyone produces code faster than before. That said, the speed in code production could be a double-edged sword, as some studies suggest that code generated with generative AI assistants may be of lower quality than code written by humans without such assistance.

If the impact of current LLMs isn’t entirely clear, this uncertainty is compounded by significant advancements in associated technologies, such as the research line opened by o1 or the desktop control anticipated by Claude 3.5. These developments increase the uncertainty about the capabilities these technologies could achieve in the short term. And while the market is betting heavily on a productivity boom driven by generative AI, many serious voices downplay the potential impact of this technology on the labor market, as noted earlier in the discussion of the financial aspect of the phenomenon. In principle, the most significant limitations of this technology (e.g., hallucinations) have not only remained unresolved but now seem increasingly unlikely to be resolved. Meanwhile, human institutions have proven less agile and revolutionary than the technology itself, cooling the conversation and dampening the enthusiasm of those envisioning a massive and immediate impact.

In any case, the promise of a massive revolution in the workplace, if it is to materialize, has not yet done so in these two years. Considering the accelerated adoption of this technology (according to one study, more than 24% of American workers today use generative AI at least once a week) and assuming that the first to adopt it are perhaps those who find the greatest benefits, we can suppose that we have already seen enough of the productivity impact of this technology. In terms of my professional day-to-day and that of my team, the productivity impacts so far, while noticeable, significant, and visible, have also been modest.

Another major challenge accompanying the rise of generative AI involves copyright issues. Content creators, including artists, writers, and media companies, have expressed dissatisfaction over their works being used without authorization to train AI models, which they consider a violation of their intellectual property rights. On the flip side, AI companies often argue that using protected material to train models is covered under “fair use” and that the production of these models constitutes legitimate and creative transformation rather than reproduction.

This conflict has resulted in numerous lawsuits, such as Getty Images suing Stability AI for the unauthorized use of images to train models, or lawsuits by artists and authors, like Sarah Silverman, against OpenAI, Meta, and other AI companies. Another notable case involves record companies suing Suno and Udio, alleging copyright infringement for using protected songs to train generative music models.

In this futuristic reinterpretation of the age-old divide between inspiration and plagiarism, courts have yet to decisively tip the scales one way or the other. While some aspects of these lawsuits have been allowed to proceed, others have been dismissed, maintaining an atmosphere of uncertainty. Recent legal filings and corporate strategies, such as Adobe, Google, and OpenAI indemnifying their clients, demonstrate that the issue remains unresolved, and for now, legal disputes continue without a definitive conclusion.

The regulatory framework for AI has also seen significant progress, with the most notable development on this side of the globe being the European Union’s approval of the AI Act in March 2024. This legislation positioned Europe as the first bloc in the world to adopt a comprehensive regulatory framework for AI, establishing a phased implementation system to ensure compliance, set to begin in February 2025 and advance gradually.

The AI Act classifies AI risks, prohibiting cases of “unacceptable risk,” such as the use of technology for deception or social scoring. While some provisions were softened during discussions to ensure basic rules applicable to all models and stricter regulations for applications in sensitive contexts, the industry has voiced concerns about the burden this framework represents. Although the AI Act wasn’t a direct consequence of ChatGPT and had been under discussion beforehand, its approval was accelerated by the sudden emergence and impact of generative AI models.

With these tensions, opportunities, and challenges, it’s clear that the impact of generative AI marks the beginning of a new phase of profound transformations across social, economic, and legal spheres, the full extent of which we are only beginning to understand.

I approached this article thinking that the ChatGPT boom had passed and its ripple effects were now subsiding, calming. Reviewing the events of the past two years convinced me otherwise: they’ve been two years of great progress and great speed.

These are times of excitement and expectation, a true springtime for AI, with impressive breakthroughs continuing to emerge and promising research lines waiting to be explored. On the other hand, these are also times of uncertainty. The suspicion of being in a bubble and the expectation of a significant emotional and market correction are more than reasonable. But as with any market correction, the key isn’t predicting if it will happen but knowing exactly when.

What will happen in 2025? Will Nvidia’s stock collapse, or will the company continue its bullish rally, fulfilling the promise of becoming a $50 trillion company within a decade? And what will happen to the AI stock market in general? And what will become of the reasoning model research line initiated by o1? Will it hit a ceiling or start showing progress, just as the GPT line advanced through versions 1, 2, 3, and 4? How much will today’s rudimentary LLM-based agents that control desktops and digital environments improve overall?

We’ll find out sooner rather than later, because that’s where we’re headed.