DeepSeek-R1, OpenAI o1 & o3, Test-Time Compute Scaling, Model Post-Training and the Transition to Reasoning Language Models (RLMs)

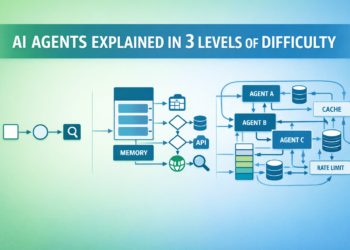

Over the past year generative AI adoption and AI Agent development have skyrocketed. Reports from LangChain show that 51% of respondents are using AI Agents in production, while reports from Deloitte predict that in 2025 at least 25% of companies using Generative AI will launch AI agent pilots or proofs of concept. Despite the popularity and growth of AI Agent frameworks, anyone building these systems quickly runs into limitations of working with large language models (LLMs), with model reasoning ability often at the top of the list. To overcome reasoning limitations researchers and developers have explored a variety of techniques, ranging from prompting methods like ReAct or Chain of Thought (CoT) to building multi-agent systems with separate agents dedicated to planning and evaluation, and now companies are releasing new models trained specifically to improve the model’s built-in reasoning process.

DeepSeek’s R1 and OpenAI’s o1 and o3 announcements are shaking up the industry by providing more robust reasoning capabilities compared to traditional LLMs. These models are trained to “think” before answering and have a self-contained reasoning process that allows them to break down tasks into simpler steps, work iteratively on the steps, and recognize and correct errors before returning a final answer. This differs from earlier models like GPT-4o, which required users to build their own reasoning logic by prompting the model to think step-by-step and creating loops for the model to iteratively plan, work, and evaluate its progress on a task. One of the key differences in training Reasoning Language Models (RLMs) like o1, o3, and R1 lies in the focus on post-training and test-time compute scaling.

In this article we’ll cover the key differences between train-time and test-time compute scaling, post-training and how to train an RLM like DeepSeek’s R1, and the impact of RLMs on AI Agent development.

Overview

In a nutshell, train-time compute scaling applies to both pre-training, where a model learns general patterns, and post-training, where a base model undergoes additional training like Reinforcement Learning (RL) or Supervised Fine-Tuning (SFT) to learn more specific behaviors. In contrast, test-time compute scaling applies at inference time, when making a prediction, and provides more computational power for the model to “think” by exploring multiple potential solutions before generating a final answer.

It’s important to understand that both test-time compute scaling and post-training can be used to help a model “think” before producing a final response but that these approaches are implemented in different ways.

While post-training involves updating or creating a new model, test-time compute scaling enables the exploration of multiple solutions at inference without changing the model itself. These approaches could be used together; in theory you could take a model that has undergone post-training for improved reasoning, like DeepSeek-R1, and allow it to further enhance its reasoning by performing additional searches at inference through test-time compute scaling.

Train-Time Compute: Pre-Training & Post-Training

Today, most LLMs & Foundation Models are pre-trained on a large amount of data from sources like the Common Crawl, which have a wide and varied representation of human-written text. This pre-training phase teaches the model to predict the next most likely word or token in a given context. Once pre-training is complete, most models undergo a form of Supervised Fine-Tuning (SFT) to optimize them for instruction following or chat-based use cases. For more information on these training processes check out one of my previous articles.

Overall, this training process is incredibly resource intensive and requires many training runs, each costing millions of dollars, before producing a model like Claude 3.5 Sonnet, GPT-4o, Llama 3.1–405B, etc. These models excel on general-purpose tasks as measured on a variety of benchmarks across topics for logical reasoning, math, coding, reading comprehension and more.

However, despite their compelling performance on a myriad of problem types, getting a typical LLM to actually “think” before responding requires a lot of engineering from the user. Fundamentally, these models receive an input and then return an output as their final answer. You can think of this like the model producing its best guess in one step based on either learned information from pre-training or in-context learning from directions and information provided in a user’s prompt. This behavior is why Agent frameworks, Chain-of-Thought (CoT) prompting, and tool-calling have all taken off. These patterns allow people to build systems around LLMs which enable a more iterative, structured, and successful workflow for LLM application development.
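To make the "engineering from the user" concrete, here is a minimal sketch of the kind of loop developers wrap around a standard LLM: a Chain-of-Thought style prompt, followed by evaluate-and-retry rounds. Everything here is an assumption for illustration: `call_llm` and `is_good_enough` are hypothetical callables you would supply yourself, and the prompt wording is made up rather than taken from any framework.

```python
from typing import Callable, List, Tuple


def solve_with_loop(
    task: str,
    call_llm: Callable[[str], str],         # hypothetical: sends a prompt, returns text
    is_good_enough: Callable[[str], bool],  # hypothetical: your own evaluation check
    max_rounds: int = 3,
) -> Tuple[str, List[str]]:
    """Ask for step-by-step reasoning, then retry with feedback until the check passes."""
    # Round 1: a Chain-of-Thought style prompt.
    draft = call_llm(f"{task}\nLet's think step by step.")
    history = [draft]

    # Later rounds: show the model its previous attempt and ask for a revision.
    for _ in range(max_rounds - 1):
        if is_good_enough(draft):
            break
        revise_prompt = (
            f"{task}\nPrevious attempt:\n{draft}\n"
            "Find any mistakes and give an improved answer."
        )
        draft = call_llm(revise_prompt)
        history.append(draft)

    return draft, history
```

The point of the sketch is that the planning, checking, and retrying all live outside the model, in code the developer has to write and maintain.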

Recently, models like DeepSeek-R1 have diverged from the typical pre-training and post-training patterns that optimize models for chat or instruction following. Instead, DeepSeek-R1 used a multi-stage post-training pipeline to teach the model more specific behaviors like how to produce Chain-of-Thought sequences, which in turn improve the model’s overall ability to “think” and reason. We’ll cover this in detail in the next section using the DeepSeek-R1 training process as an example.

Test-Time Compute Scaling: Enabling “Thinking” at Inference

What’s exciting about test-time compute scaling and post-training is that reasoning and iterative problem solving can be built into the models themselves or their inference pipelines. Instead of relying on the developer to guide the entire reasoning and iteration process, there are opportunities to allow the model to explore multiple solution paths, reflect on its progress, rank the best solution paths, and generally refine the overall reasoning lifecycle before sending a response to the user.

Test-time compute scaling is specifically related to optimizing performance at inference and does not involve modifying the model’s parameters. What this means practically is that a smaller model like Llama 3.2–8b can compete with much larger models by spending more time “thinking” and working through numerous possible solutions at inference time.

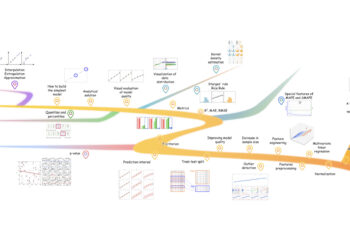

Some of the common test-time scaling strategies include self-refinement, where the model iteratively refines its own outputs, and searching against a verifier, where multiple possible answers are generated and a verifier selects the best path to move forward from. Common search-against-verifier strategies include:

- Best-of-N, where numerous responses are generated for each question, each answer is scored, and the answer with the highest score wins.

- Beam Search, which typically uses a Process Reward Model (PRM) to score a multi-step reasoning process. This allows you to start by generating multiple solution paths (beams), determine which paths are the best to continue searching on, then generate a new set of sub-paths and evaluate these, continuing until a solution is reached.

- Diverse Verifier Tree Search (DVTS) is related to Beam Search but creates a separate tree for each of the initial paths (beams) created. Each tree is then expanded and the branches of the tree are scored using a PRM.
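
As a rough illustration of the simplest of these strategies, Best-of-N, the sketch below samples several candidate answers and keeps the one a verifier scores highest. The `generate` and `score` callables are placeholders I am assuming for the example; in practice they would be a sampled LLM call and a reward model. The toy versions here just add noise to a sum so the code runs on its own.

```python
import random
from typing import Callable, List, Tuple


def best_of_n(
    question: str,
    generate: Callable[[str, random.Random], str],  # stand-in for a sampled model call
    score: Callable[[str, str], float],             # stand-in for a verifier / reward model
    n: int = 8,
    seed: int = 0,
) -> Tuple[str, float]:
    """Sample n candidate answers and return the highest-scoring one with its score."""
    rng = random.Random(seed)
    candidates: List[str] = [generate(question, rng) for _ in range(n)]
    scored = [(score(question, candidate), candidate) for candidate in candidates]
    best_score, best_answer = max(scored, key=lambda pair: pair[0])
    return best_answer, best_score


# Toy stand-ins (hypothetical): a noisy "model" and a "verifier" that
# prefers answers close to the true sum of a question like "17+25".
def toy_generate(question: str, rng: random.Random) -> str:
    a, b = (int(x) for x in question.split("+"))
    return str(a + b + rng.randint(-3, 3))


def toy_score(question: str, answer: str) -> float:
    a, b = (int(x) for x in question.split("+"))
    return -abs((a + b) - int(answer))
```

Calling `best_of_n("17+25", toy_generate, toy_score, n=16)` returns whichever of the 16 noisy guesses the verifier likes best. The model's weights never change; more samples simply buy a better chance that one candidate is right.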

Determining which search strategy is best is still an active area of research, but there are a lot of great resources on HuggingFace which provide examples for how these search strategies can be implemented for your use case.
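
For a feel of how the beam search strategy works mechanically, here is a small self-contained sketch. It is not taken from any HuggingFace resource: `expand`, `prm_score`, and `is_complete` are hypothetical callables standing in for "generate next reasoning steps", "score a partial path with a PRM", and "check whether a path is a finished solution". The toy problem (reach 10 starting from 1 using `+1`, `*2`, `+3`) exists only so the code runs end to end.

```python
from typing import Callable, List, Sequence, Tuple

Path = Tuple[str, ...]


def beam_search(
    expand: Callable[[Path], Sequence[str]],  # proposes next steps for a partial path
    prm_score: Callable[[Path], float],       # stand-in for a Process Reward Model
    is_complete: Callable[[Path], bool],      # True once a path is a full solution
    beam_width: int = 2,
    max_steps: int = 10,
) -> Path:
    """At each step keep only the beam_width best partial paths, ranked by prm_score."""
    beams: List[Path] = [()]
    for _ in range(max_steps):
        candidates: List[Path] = []
        for path in beams:
            if is_complete(path):
                candidates.append(path)  # carry finished paths forward unchanged
                continue
            for step in expand(path):
                candidates.append(path + (step,))
        if not candidates:
            break
        # Rank all candidate paths and prune to the beam width.
        candidates.sort(key=prm_score, reverse=True)
        beams = candidates[:beam_width]
        if all(is_complete(path) for path in beams):
            break
    return max(beams, key=prm_score)


# Toy problem (hypothetical): start at 1 and reach TARGET with simple operations.
TARGET = 10


def value_of(path: Path) -> int:
    total = 1
    for step in path:
        amount = int(step[1:])
        if step[0] == "+":
            total += amount
        else:
            total *= amount
    return total


def toy_expand(path: Path) -> Sequence[str]:
    return ["+1", "*2", "+3"]


def toy_prm(path: Path) -> float:
    return -abs(TARGET - value_of(path))  # closer to the target scores higher


def toy_is_complete(path: Path) -> bool:
    return value_of(path) == TARGET
```

Running `beam_search(toy_expand, toy_prm, toy_is_complete)` returns a sequence of steps whose value is 10. Roughly speaking, DVTS would run a search like this separately from each initial beam rather than letting all beams compete in one shared pool.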

OpenAI’s o1 model, announced in September 2024, was one of the first models designed to “think” before responding to users. Although it takes longer to get a response from o1 compared to models like GPT-4o, o1’s responses are typically better for more advanced tasks since it generates chain-of-thought sequences that help it break down and solve problems.

Working with o1 and o3 requires a different style of prompt engineering compared to earlier generations of models, given that these new reasoning-focused models operate quite differently than their predecessors. For example, telling o1 or o3 to “think step-by-step” will be less helpful than giving the same instructions to GPT-4o.

Given the closed-source nature of OpenAI’s o1 and o3 models it’s impossible to know exactly how the models were developed; this is a big reason why DeepSeek-R1 attracted so much attention. DeepSeek-R1 is the first open-source model to demonstrate comparable behavior and performance to OpenAI’s o1. This is amazing for the open-source community because it means developers can modify R1 to their needs and, compute power permitting, can replicate R1’s training methodology.

DeepSeek-R1 Training Process:

- DeepSeek-R1-Zero: First, DeepSeek performed Reinforcement Learning (RL) (post-training) on their base model DeepSeek-V3. This resulted in DeepSeek-R1-Zero, a model that learned how to reason, create chain-of-thought sequences, and demonstrate capabilities like self-verification and reflection. The fact that a model could learn all these behaviors from RL alone is significant for the AI industry as a whole. However, despite DeepSeek-R1-Zero’s impressive ability to learn, the model had significant issues like language mixing and generally poor readability. This led the team to explore other paths to stabilize model performance and create a more production-ready model.

- DeepSeek-R1: Creating DeepSeek-R1 involved a multi-stage post-training pipeline alternating between SFT and RL steps. Researchers first performed SFT on DeepSeek-V3 using cold-start data in the form of thousands of example CoT sequences; the goal was to create a more stable starting point for RL and overcome the issues found with DeepSeek-R1-Zero. Second, researchers performed RL and included rewards to promote language consistency and enhance reasoning on tasks like science, coding, and math. Third, SFT was performed again, this time including non-reasoning-focused training examples to help the model retain more general-purpose abilities like writing and role-playing. Finally, RL was applied again to improve alignment with human preferences. This resulted in a highly capable model with 671B parameters.

- Distilled DeepSeek-R1 Models: The DeepSeek team further demonstrated that DeepSeek-R1’s reasoning can be distilled into smaller open-source models using SFT alone, without RL. They fine-tuned smaller models ranging from 1.5B to 70B parameters, based on both Qwen and Llama architectures, resulting in a set of lighter, more efficient models with better reasoning abilities. This significantly improves accessibility for developers since many of these distilled models can run quickly on their device.
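
To give a sense of what "rewards to promote language consistency and enhance reasoning" could look like in code, here is a deliberately simplified toy reward function. This is my own illustration, not DeepSeek's implementation: the `<think>...</think>` output format, the weights, exact-match accuracy, and the "fraction of ASCII words" proxy for language consistency are all assumptions chosen to keep the example short.

```python
import re

# Assumed output format for this sketch: reasoning inside <think> tags, then the answer.
THINK_PATTERN = re.compile(r"^<think>(.*?)</think>\s*(.*)$", re.DOTALL)


def language_consistency(text: str) -> float:
    """Fraction of whitespace-separated words that are pure ASCII (crude proxy for 'English')."""
    words = text.split()
    if not words:
        return 0.0
    return sum(word.isascii() for word in words) / len(words)


def toy_reward(
    completion: str,
    reference_answer: str,
    w_accuracy: float = 1.0,   # hypothetical weights, chosen arbitrarily
    w_format: float = 0.2,
    w_language: float = 0.2,
) -> float:
    """Combine accuracy, format, and language-consistency signals into one scalar."""
    match = THINK_PATTERN.match(completion.strip())
    if match is None:
        return 0.0  # no recognizable reasoning block: no reward in this sketch
    reasoning, answer = match.group(1), match.group(2).strip()
    accuracy = 1.0 if answer == reference_answer.strip() else 0.0
    return (
        w_accuracy * accuracy
        + w_format  # format bonus for following the expected structure
        + w_language * language_consistency(reasoning)
    )
```

With this toy reward, a correct answer whose reasoning stays in one language scores higher than an equally correct answer whose reasoning mixes languages, which is the kind of pressure that discourages the language mixing seen in DeepSeek-R1-Zero.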

As reasoning-first models and test-time compute scaling techniques continue to advance, the system design, capabilities, and user experience for interacting with AI agents will change significantly.

Going forward I believe we will see more streamlined agent teams. Instead of having separate agents and hyper use-case-specific prompts and tools, we will likely see design patterns where a single RLM manages the entire workflow. This will also likely change how much background information the user needs to provide the agent if the agent is better equipped to explore a variety of different solution paths.

User interaction with agents will also change. Today many agent interfaces are still chat-focused, with users expecting near-instant responses. Given that it takes RLMs longer to respond, I think user expectations and experiences will shift and we’ll see more instances where users delegate tasks that agent teams execute in the background. This execution time could take minutes or hours depending on the complexity of the task but ideally will result in thorough and highly traceable outputs. This could enable people to delegate many tasks to a variety of agent teams at once and spend their time focusing on human-centric tasks.

Despite their promising performance, many reasoning-focused models still lack tool-calling capabilities. Tool-calling is critical for agents since it allows them to interact with the world, gather information, and actually execute tasks on our behalf. However, given the rapid pace of innovation in this space I expect we will soon see more RLMs with built-in tool calling.

In summary, this is just the beginning of a new age of general-purpose reasoning models that will continue to transform the way that we work and live.