Intro

AI Agents are autonomous programs that perform tasks, make decisions, and communicate with others. Normally, they use a set of tools to help complete tasks. In GenAI applications, these Agents process sequential reasoning and can use external tools (like web searches or database queries) when the LLM knowledge isn’t enough. Unlike a basic chatbot, which generates random text when uncertain, an AI Agent activates tools to provide more accurate, specific responses.

We’re moving closer and closer to the concept of Agentic AI: systems that exhibit a higher level of autonomy and decision-making ability, without direct human intervention. While today’s AI Agents respond reactively to human inputs, tomorrow’s Agentic AIs proactively engage in problem-solving and can adjust their behavior based on the situation.

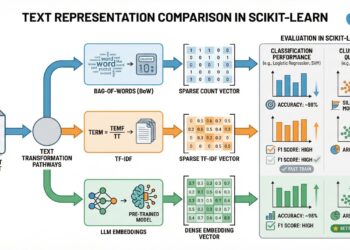

Today, building Agents from scratch is becoming as easy as training a logistic regression model 10 years ago. Back then, Scikit-Learn provided a straightforward library to quickly train Machine Learning models with just a few lines of code, abstracting away much of the underlying complexity.

In this tutorial, I’m going to show how to build different types of AI Agents from scratch, from simple to more advanced systems. I will present some useful Python code that can be easily applied in other similar cases (just copy, paste, run) and walk through every line of code with comments so that you can replicate this example.

Setup

As I said, anyone can have a custom Agent running locally for free without GPUs or API keys. The only necessary library is Ollama (pip install ollama==0.4.7), as it allows users to run LLMs locally, without needing cloud-based services, giving more control over data privacy and performance.

First of all, you need to download Ollama from the website.

Then, on the prompt shell of your laptop, use the command to download the selected LLM (for example, ollama pull qwen2.5). I’m going with Alibaba’s Qwen, as it’s both smart and lite.

After the download is completed, you can move on to Python and start writing code.

import ollama

llm = "qwen2.5"

Let’s test the LLM:

stream = ollama.generate(model=llm, prompt='''what time is it?''', stream=True)
for chunk in stream:
    # print each piece of the answer as soon as it arrives
    print(chunk['response'], end='', flush=True)

Obviously, the LLM per se is very limited and can’t do much besides chatting. Therefore, we need to give it the possibility to take action, or in other words, to activate Tools.

One of the most common tools is the ability to search the Internet. In Python, the easiest way to do it is with the well-known privacy-focused search engine DuckDuckGo (pip install duckduckgo-search==6.3.5). You can directly use the original library or import the LangChain wrapper (pip install langchain-community==0.3.17).
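For the first option, a minimal sketch of calling the original library directly might look like the following. It assumes the `DDGS` class of duckduckgo-search and its `news()` method, which returns a list of dictionaries. The helper names `format_results` and `search_news_direct` are hypothetical, and the result keys used here (`title`, `body`, `url`) should be checked against the installed version.

```python
def format_results(results: list[dict]) -> str:
    """Flatten search results into one text block that an LLM can read."""
    lines = []
    for r in results:
        # .get() keeps the helper tolerant of missing keys
        lines.append(f"{r.get('title', '')}: {r.get('body', '')} ({r.get('url', '')})")
    return "\n".join(lines)

def search_news_direct(query: str, max_results: int = 5) -> str:
    # imported here so the formatting helper works without the library installed
    from duckduckgo_search import DDGS
    results = DDGS().news(query, max_results=max_results)
    return format_results(results)
```

The rest of this tutorial uses the LangChain wrapper, which already returns the results as a single string.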

With Ollama, in order to use a Tool, the function must be described in a dictionary.

from langchain_community.tools import DuckDuckGoSearchResults

def search_web(query: str) -> str:
    # run a DuckDuckGo news search and return the results as text
    return DuckDuckGoSearchResults(backend="news").run(query)

tool_search_web = {'type':'function', 'function':{
  'name': 'search_web',
  'description': 'Search the web',
  'parameters': {'type': 'object',
                 'required': ['query'],
                 'properties': {
                    'query': {'type':'string', 'description':'the topic or subject to search on the web'},
}}}}

## test
search_web(query="nvidia")

Web searches could be very broad, and I want to give the Agent the option to be more precise. Let’s say I’m planning to use this Agent to learn about financial updates, so I can give it a specific tool for that topic, like searching only a finance website instead of the whole web.

def search_yf(query: str) -> str:
    engine = DuckDuckGoSearchResults(backend="news")
    # restrict the search to Yahoo Finance with the "site:" operator
    return engine.run(f"site:finance.yahoo.com {query}")

tool_search_yf = {'type':'function', 'function':{
  'name': 'search_yf',
  'description': 'Search for specific financial news',
  'parameters': {'type': 'object',
                 'required': ['query'],
                 'properties': {
                    'query': {'type':'string', 'description':'the financial topic or subject to search'},
}}}}

## test
search_yf(query="nvidia")

Simple Agent (WebSearch)

In my opinion, the most basic Agent should at least be able to choose between one or two Tools and re-elaborate the output of the action to give the user a proper and concise answer.

First, you need to write a prompt to describe the Agent’s purpose, the more detailed the better (mine is very generic), and that will be the first message in the chat history with the LLM.

prompt = '''You are an assistant with access to tools, you must decide when to use tools to answer user message.'''
messages = [{"role":"system", "content":prompt}]

In order to keep the chat with the AI alive, I will use a loop that starts with the user’s input, and then the Agent is invoked to respond (which can be a text from the LLM or the activation of a Tool).

while True:
    ## user input
    try:
        q = input('🙂 >')
    except EOFError:
        break
    if q == "quit":
        break
    if q.strip() == "":
        continue
    messages.append( {"role":"user", "content":q} )

    ## model
    agent_res = ollama.chat(
        model=llm,
        tools=[tool_search_web, tool_search_yf],
        messages=messages)

Up to this point, the chat history could look something like this:
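As an illustration (the user question below is made up), the history at this stage is a plain list of role/content dictionaries, with the system prompt first:

```python
prompt = '''You are an assistant with access to tools, you must decide when to use tools to answer user message.'''

# hypothetical state of the chat history after the first user input
messages = [
    {"role": "system", "content": prompt},
    {"role": "user", "content": "what are the latest news about nvidia?"},
]

# print one line per message: role on the left, text on the right
for m in messages:
    print(f"{m['role']:>9} | {m['content']}")
```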

If the model wants to use a Tool, the appropriate function needs to be run with the input parameters suggested by the LLM in its response object:
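A rough sketch of that structure, written as a plain dictionary: the field layout mirrors what the code in this tutorial reads (`message`, then `tool_calls`, then `function` with its `name` and `arguments`), while the values are invented for illustration.

```python
# hypothetical response, reduced to the fields used in this tutorial
agent_res = {
    "message": {
        "role": "assistant",
        "content": "",
        "tool_calls": [
            {"function": {"name": "search_yf", "arguments": {"query": "nvidia"}}}
        ],
    }
}

for tool in agent_res["message"]["tool_calls"]:
    t_name = tool["function"]["name"]         # which Tool the LLM picked
    t_inputs = tool["function"]["arguments"]  # keyword arguments for that Tool
    print(t_name, t_inputs)
```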

So our code needs to get that information and run the Tool function.

    ## response
    dic_tools = {'search_web':search_web, 'search_yf':search_yf}
    res = ""  # fallback so that `res` is always defined

    # .get() returns None when the model did not ask for any Tool
    if agent_res["message"].get("tool_calls"):
        for tool in agent_res["message"]["tool_calls"]:
            t_name, t_inputs = tool["function"]["name"], tool["function"]["arguments"]
            if f := dic_tools.get(t_name):
                ### calling tool
                print('🔧 >', f"\x1b[1;31m{t_name} -> Inputs: {t_inputs}\x1b[0m")
                messages.append( {"role":"user", "content":"use tool '"+t_name+"' with inputs: "+str(t_inputs)} )
                ### tool output
                t_output = f(**tool["function"]["arguments"])
                print(t_output)
                ### final res
                p = f'''Summarize this to answer user question, be as concise as possible: {t_output}'''
                res = ollama.generate(model=llm, prompt=q+". "+p)["response"]
            else:
                print('🤬 >', f"\x1b[1;31m{t_name} -> NotFound\x1b[0m")

    if agent_res['message']['content'] != '':
        res = agent_res["message"]["content"]

    print("👽 >", f"\x1b[1;30m{res}\x1b[0m")
    messages.append( {"role":"assistant", "content":res} )

Now, if we run the full code, we can chat with our Agent.
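To try the dispatch logic without a running model, the same steps can be wrapped in a small function and fed hand-written tool calls. This is only a sketch: `run_tool_calls` and the stub Tool `fake_search` are hypothetical names, and catching exceptions raised by a Tool is an addition that the loop above doesn’t make.

```python
from typing import Callable

def run_tool_calls(tool_calls: list[dict], registry: dict[str, Callable[..., str]]) -> list[str]:
    """Run each requested Tool and collect one output string per call."""
    outputs = []
    for tool in tool_calls:
        t_name = tool["function"]["name"]
        t_inputs = tool["function"]["arguments"]
        f = registry.get(t_name)
        if f is None:
            outputs.append(f"{t_name} -> NotFound")
            continue
        try:
            outputs.append(f(**t_inputs))
        except Exception as e:
            # report the failure instead of crashing the chat loop
            outputs.append(f"{t_name} -> Error: {e}")
    return outputs

# stub standing in for a real search Tool
def fake_search(query: str) -> str:
    return f"results for {query}"

calls = [
    {"function": {"name": "search_web", "arguments": {"query": "nvidia"}}},
    {"function": {"name": "unknown_tool", "arguments": {}}},
    {"function": {"name": "search_web", "arguments": {"wrong": 1}}},
]
outputs = run_tool_calls(calls, {"search_web": fake_search})
```

The second call names a Tool that isn’t registered, and the third passes an argument the function doesn’t accept, so both end up as messages rather than exceptions.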

Advanced Agent (Coding)

LLMs know how to code by being exposed to a large corpus of both code and natural language text, where they learn patterns, syntax, and semantics of programming languages. The model learns the relationships between different parts of the code by predicting the next token in a sequence. In short, LLMs can generate Python code but can’t execute it; Agents can.

I shall prepare a Tool allowing the Agent to execute code. In Python, you can easily create a shell to run code as a string with the native command exec().
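One detail worth knowing about exec(): it accepts an optional dictionary to use as the namespace, and reusing the same dictionary keeps variables alive from one call to the next. The sketch below illustrates this with a hypothetical helper `run_snippet` and a hypothetical `namespace` dict; it is not the Tool used in this tutorial.

```python
import io
import contextlib

namespace = {}  # shared between calls so that variables persist

def run_snippet(code: str) -> str:
    """Execute a code string and return whatever it printed."""
    output = io.StringIO()
    with contextlib.redirect_stdout(output):
        try:
            exec(code, namespace)
        except Exception as e:
            print(f"Error: {e}")
    return output.getvalue()

first = run_snippet("a = 1 + 1")               # defines `a`, prints nothing
second = run_snippet("print(a * 10)")          # `a` is still available
third = run_snippet("print(undefined_name)")   # the error is captured as text
```

Keep in mind that exec() runs arbitrary code with the permissions of the current process, so a Tool like this is best limited to local experiments.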

import io
import contextlib

def code_exec(code: str) -> str:
    # capture everything the executed code prints
    output = io.StringIO()
    with contextlib.redirect_stdout(output):
        try:
            exec(code)
        except Exception as e:
            print(f"Error: {e}")
    return output.getvalue()

tool_code_exec = {'type':'function', 'function':{
  'name': 'code_exec',
  'description': 'execute python code',
  'parameters': {'type': 'object',
                 'required': ['code'],
                 'properties': {
                    'code': {'type':'string', 'description':'code to execute'},
}}}}

## test
code_exec("a=1+1; print(a)")

Just like before, I will write a prompt, but this time, at the beginning of the chat-loop, I will ask the user to provide a file path.

prompt = '''You are an expert data scientist, and you have tools to execute python code.
First of all, execute the following code exactly as it is: 'df=pd.read_csv(path); print(df.head())'
If you create a plot, ALWAYS add 'plt.show()' at the end.
'''
messages = [{"role":"system", "content":prompt}]
start = True

while True:
    ## user input
    try:
        if start is True:
            path = input('📁 Provide a CSV path >')
            q = "path = "+path
        else:
            q = input('🙂 >')
    except EOFError:
        break
    if q == "quit":
        break
    if q.strip() == "":
        continue

    messages.append( {"role":"user", "content":q} )

Since coding tasks can be a little trickier for LLMs, I am also going to add memory reinforcement. By default, during one session, there isn’t a true long-term memory. LLMs have access to the chat history, so they can remember information temporarily, and track the context and instructions you’ve given earlier in the conversation. However, memory doesn’t always work as expected, especially if the LLM is small. Therefore, a good practice is to reinforce the model’s memory by adding periodic reminders in the chat history.

prompt = '''You are an expert data scientist, and you have tools to execute python code.
First of all, execute the following code exactly as it is: 'df=pd.read_csv(path); print(df.head())'
If you create a plot, ALWAYS add 'plt.show()' at the end.
'''
messages = [{"role":"system", "content":prompt}]
memory = '''Use the dataframe 'df'.'''
start = True

while True:
    ## user input
    try:
        if start is True:
            path = input('📁 Provide a CSV path >')
            q = "path = "+path
        else:
            q = input('🙂 >')
    except EOFError:
        break
    if q == "quit":
        break
    if q.strip() == "":
        continue

    ## memory
    if start is False:
        # prepend the reminder to every message after the first one
        q = memory+"\n"+q
    messages.append( {"role":"user", "content":q} )

Please note that the default memory (context) length in Ollama is 2048 tokens. If your machine can handle it, you can increase it by changing the number when the LLM is invoked:

    ## model
    agent_res = ollama.chat(
        model=llm,
        tools=[tool_code_exec],
        options={"num_ctx":2048},
        messages=messages)

In this use case, the output of the Agent is mostly code and data, so I don’t want the LLM to re-elaborate the responses.

    ## response
    dic_tools = {'code_exec':code_exec}
    res = ""  # fallback so that `res` is always defined

    # .get() returns None when the model did not ask for any Tool
    if agent_res["message"].get("tool_calls"):
        for tool in agent_res["message"]["tool_calls"]:
            t_name, t_inputs = tool["function"]["name"], tool["function"]["arguments"]
            if f := dic_tools.get(t_name):
                ### calling tool
                print('🔧 >', f"\x1b[1;31m{t_name} -> Inputs: {t_inputs}\x1b[0m")
                messages.append( {"role":"user", "content":"use tool '"+t_name+"' with inputs: "+str(t_inputs)} )
                ### tool output
                t_output = f(**tool["function"]["arguments"])
                ### final res
                res = t_output
            else:
                print('🤬 >', f"\x1b[1;31m{t_name} -> NotFound\x1b[0m")

    if agent_res['message']['content'] != '':
        res = agent_res["message"]["content"]

    print("👽 >", f"\x1b[1;30m{res}\x1b[0m")
    messages.append( {"role":"assistant", "content":res} )
    start = False

Now, if we run the full code, we can chat with our Agent.
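Because the context window is limited, a long session may eventually exceed it. One possible mitigation, sketched here under assumptions, is to keep the system prompt and only the most recent messages before each call to the model. `trim_history` and `max_messages` are hypothetical names, and counting messages rather than tokens is a deliberate simplification.

```python
def trim_history(messages: list[dict], max_messages: int = 10) -> list[dict]:
    """Keep the system messages plus the last `max_messages` other messages."""
    system = [m for m in messages if m["role"] == "system"]
    others = [m for m in messages if m["role"] != "system"]
    # guard against max_messages <= 0, where a negative slice would keep everything
    recent = others[-max_messages:] if max_messages > 0 else []
    return system + recent

# toy history: one system prompt followed by six question/answer pairs
history = [{"role": "system", "content": "You are an expert data scientist."}]
for i in range(6):
    history.append({"role": "user", "content": f"question {i}"})
    history.append({"role": "assistant", "content": f"answer {i}"})

trimmed = trim_history(history, max_messages=4)
```

In the chat loop, the trimmed list would be passed as `messages` to the model while the full history is kept separately.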

Conclusion

This article has covered the foundational steps of creating Agents from scratch using only Ollama. With these building blocks in place, you are already equipped to start developing your own Agents for different use cases.

Stay tuned for Part 2, where we will dive deeper into more advanced examples.

Full code for this article: GitHub

I hope you enjoyed it! Feel free to contact me for questions and feedback or just to share your interesting projects.
