fails in predictable ways. Retrieval returns bad chunks; the model hallucinates. You fix your chunking and move on. The debugging surface is small because the architecture is simple: retrieve once, generate once, done.

Agentic RAG fails differently because the shape of the system is different. It isn’t a pipeline. It’s a control loop: plan → retrieve → evaluate → decide → retrieve again. That loop is what makes it powerful for complex queries, and it’s exactly what makes it dangerous in production. Every iteration is a new opportunity for the agent to make a bad decision, and bad decisions compound.

Three failure modes show up repeatedly once teams move agentic RAG past prototyping:

- Retrieval thrash: the agent keeps searching without converging on an answer

- Tool storms: excessive tool calls that cascade and retry until budgets are gone

- Context bloat: the context window fills with low-signal content until the model stops following its own instructions

These failures almost always present as ‘the model got worse’, but the root cause is not the base model. It is missing budgets, weak stopping rules, and zero observability of the agent’s decision loop.

This article breaks down each failure mode, why it happens, how to catch it early with specific signals, and when to skip agentic RAG entirely.

What Agentic RAG Is (and What Makes It Fragile)

Classic RAG retrieves once and answers. If retrieval fails, the model has no recovery mechanism. It generates the best output it can from whatever came back. Agentic RAG adds a control layer on top. The system can evaluate its own evidence, identify gaps, and try again.

The agent loop runs roughly like this: parse the user question, build a retrieval plan, execute retrieval or tool calls, synthesise the results, verify whether they answer the question, then either stop and answer or loop back for another pass. This is the same retrieve → reason → decide pattern described in ReAct-style architectures, and it works well when queries require multi-hop reasoning or evidence scattered across sources.

But the loop introduces a core fragility. The agent optimises locally. At each step, it asks, “Do I have enough?” and when the answer is uncertain, it defaults to “get more”. Without hard stopping rules, the default spirals. The agent retrieves more, escalates, retrieves again, each pass burning tokens without guaranteeing progress. LangGraph’s own official agentic RAG tutorial had exactly this bug: an infinite retrieval loop that required a rewrite_count cap to fix. If the reference implementation can loop forever, production systems certainly will.

The fix is not a better prompt. It’s budgeting, gating, and better signals.
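
As a rough illustration of what a hard stopping rule looks like in code, the sketch below wraps a retrieve-and-verify loop in an iteration cap. The names `run_bounded_loop`, `retrieve`, and `verify` are hypothetical stand-ins for your own retriever and verifier, not part of LangGraph or any other library, and the default of three passes is simply the cap discussed later in this article.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class LoopResult:
    evidence: List[str] = field(default_factory=list)
    iterations: int = 0
    stopped_by_cap: bool = False


def run_bounded_loop(
    question: str,
    retrieve: Callable[[str], List[str]],
    verify: Callable[[str, List[str]], Tuple[bool, str]],
    max_iterations: int = 3,
) -> LoopResult:
    """Retrieve -> verify loop that cannot run more than max_iterations passes.

    `verify` returns (is_sufficient, next_query). Both callables are
    hypothetical placeholders for a real retriever and verifier.
    """
    evidence: List[str] = []
    query = question
    for iteration in range(1, max_iterations + 1):
        evidence.extend(retrieve(query))
        sufficient, next_query = verify(question, evidence)
        if sufficient:
            return LoopResult(evidence, iteration, stopped_by_cap=False)
        query = next_query
    # Cap reached: hand back what we have instead of looping again.
    return LoopResult(evidence, max_iterations, stopped_by_cap=True)
```

When `stopped_by_cap` is true, the caller would generate a best-effort answer and attach an explicit uncertainty note rather than retrying.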

Failure Mode Taxonomy: What Breaks and Why

Retrieval Thrash: The Loop That Never Converges

Retrieval thrash is the agent repeatedly retrieving without settling on an answer. In traces, you see it clearly: near-duplicate queries, oscillating search terms (broadening, then narrowing, then broadening again), and answer quality that stays flat across iterations.

A concrete scenario. A user asks: “What’s our reimbursement policy for remote employees in California?” The agent retrieves the general reimbursement policy. Its verifier flags the answer as incomplete because it doesn’t mention California-specific rules. The agent reformulates: “California remote work reimbursement.” It retrieves a tangentially related HR document. Still not confident. It reformulates again: “California labour code expense reimbursement.” Three more iterations later, it has burned through its retrieval budget, and the answer is barely better than after round one.

The root causes are consistent: weak stopping criteria (the verifier rejects without saying what is specifically missing), poor query reformulation (rewording rather than targeting a gap), low-signal retrieval results (the corpus genuinely doesn’t contain the answer, but the agent can’t recognise that), or a feedback loop where the verifier and retriever oscillate without converging. Production guidance from multiple teams converges on the same number: cap retrieval cycles at three. After three failed passes, return a best-effort answer with a confidence disclaimer.

Tool Storms and Context Bloat: When the Agent Floods Itself

Tool storms and context bloat tend to occur together, and each makes the other worse.

A tool storm occurs when the agent fires excessive tool calls: cascading retries after timeouts, parallel calls returning redundant data, or a “call everything to be safe” strategy when the agent is uncertain. One startup documented agents making 200 LLM calls in 10 minutes, burning $50–$200 before anyone noticed. Another saw costs spike 1,700% during a provider outage as retry logic spiralled out of control.

Context bloat is the downstream result. Large tool outputs are pasted directly into the context window (raw JSON, repeated intermediate summaries, ever-growing memory) until the model’s attention is spread too thin to follow instructions. Research consistently shows that models pay less attention to information buried in the middle of long contexts. Stanford and Meta’s “Lost in the Middle” study found performance drops of 20+ percentage points when critical information sits mid-context. In one test, accuracy on multi-document QA actually fell below closed-book performance with 20 documents included, meaning that adding retrieved context actively made the answer worse.

The root causes: no per-tool budgets or rate limits, no compression strategy for tool outputs, and “stuff everything” retrieval configurations that treat top-20 as a reasonable default.

How to Detect These Failures Early

You can catch all three failure modes with a small set of signals. The goal is to make silent failures visible before they appear on your invoice.

Quantitative signals to track from day one:

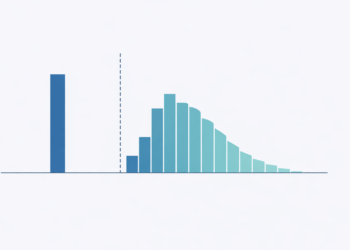

- Tool calls per task (average and p95): spikes indicate tool storms. Investigate above 10 calls; hard-kill above 30.

- Retrieval iterations per query: if the median is 1–2 but p95 is 6+, you have a thrash problem on hard queries.

- Context length growth rate: how many tokens are added per iteration? If context grows faster than useful evidence, you have bloat.

- p95 latency: tail latency is where agentic failures hide, because most queries finish fast while a few spiral.

- Cost per successful task: the most honest metric. It penalises wasted attempts, not just average cost per run.
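
A minimal sketch of how these signals might be computed from per-run logs is shown here. The record fields (`tool_calls`, `retrieval_iters`, `latency_s`, `cost_usd`, `success`) are an assumed logging schema, not a standard one, and the percentile uses the simple nearest-rank method.

```python
import math
import statistics
from typing import Dict, List


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile for pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarise_runs(runs: List[Dict]) -> Dict[str, float]:
    """Aggregate per-run records (assumed schema) into the signals above."""
    tool_calls = [r["tool_calls"] for r in runs]
    iters = [r["retrieval_iters"] for r in runs]
    successes = sum(1 for r in runs if r["success"])
    total_cost = sum(r["cost_usd"] for r in runs)
    return {
        "tool_calls_avg": sum(tool_calls) / len(tool_calls),
        "tool_calls_p95": percentile(tool_calls, 95),
        "retrieval_iters_median": statistics.median(iters),
        "retrieval_iters_p95": percentile(iters, 95),
        "latency_p95_s": percentile([r["latency_s"] for r in runs], 95),
        # Failed runs still cost money, so divide total spend by successes only.
        "cost_per_successful_task": total_cost / successes if successes else float("inf"),
    }
```

Dividing total spend by successful tasks, rather than by all runs, is what makes wasted attempts show up in the number.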

Qualitative traces: force the agent to justify each loop. At every iteration, log two things: “What new evidence was gained?” and “Why is this not sufficient to answer?” If the justifications are vague or repetitive, the loop is thrashing.
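
One crude way to flag repetitive justifications automatically is to compare the latest "why is this not sufficient" entry with the previous one. The sketch below uses the standard library's `difflib.SequenceMatcher` for a character-level similarity score; the helper names and the 0.9 threshold are illustrative assumptions, and an embedding-based comparison would likely be more robust.

```python
from difflib import SequenceMatcher
from typing import Dict, List


def log_iteration(trace: List[Dict[str, str]], new_evidence: str, why_insufficient: str) -> None:
    """Record the two justifications the agent gives before looping again."""
    trace.append({"new_evidence": new_evidence, "why_insufficient": why_insufficient})


def looks_like_thrash(trace: List[Dict[str, str]], threshold: float = 0.9) -> bool:
    """True when the last two 'why_insufficient' entries are near-identical."""
    if len(trace) < 2:
        return False
    previous = trace[-2]["why_insufficient"].lower()
    latest = trace[-1]["why_insufficient"].lower()
    return SequenceMatcher(None, previous, latest).ratio() >= threshold
```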

How each failure maps to signal spikes: retrieval thrash shows as iterations climbing while answer quality stays flat. Tool storms show as call counts spiking alongside timeouts and cost jumps. Context bloat shows as context tokens climbing while instruction-following degrades.

Tripwire rules (set as hard caps): max 3 retrieval iterations; max 10–15 tool calls per task; a context token ceiling relative to your model’s effective window (not its claimed maximum); and a wall-clock timebox on every run. When a tripwire fires, the agent stops cleanly and returns its best answer with explicit uncertainty, not more retries.
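
The tripwires can be expressed as one small check that runs before every new step. In the sketch below, the iteration and tool-call caps come from the rules above, while the 16,000-token ceiling and 60-second timebox are placeholder assumptions to tune per model and workload; `Tripwires`, `RunState`, and `tripped` are hypothetical names.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class Tripwires:
    max_retrieval_iterations: int = 3
    max_tool_calls: int = 15
    max_context_tokens: int = 16_000  # assumed effective window, not a model maximum
    max_wall_clock_s: float = 60.0    # assumed timebox


@dataclass
class RunState:
    started_at: float                 # value of time.monotonic() at run start
    retrieval_iterations: int = 0
    tool_calls: int = 0
    context_tokens: int = 0


def tripped(state: RunState, limits: Tripwires, now: Optional[float] = None) -> Optional[str]:
    """Call before starting another step; returns the first exhausted budget, or None."""
    now = time.monotonic() if now is None else now
    if state.retrieval_iterations >= limits.max_retrieval_iterations:
        return "retrieval_iterations"
    if state.tool_calls >= limits.max_tool_calls:
        return "tool_calls"
    if state.context_tokens > limits.max_context_tokens:
        return "context_tokens"
    if now - state.started_at >= limits.max_wall_clock_s:
        return "wall_clock"
    return None
```

Returning the name of the tripwire, rather than a bare boolean, lets the final answer and the trace say which budget ended the run.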

Mitigations and Decision Framework

Each failure mode maps to specific mitigations.

For retrieval thrash: cap iterations at three. Add a “new evidence threshold”: if the latest retrieval doesn’t surface meaningfully different content (measured by similarity to prior results), stop and answer. Constrain reformulation so the agent must target a specific identified gap rather than just rewording.
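
A new-evidence gate can be sketched with nothing more than chunk identifiers. The version below measures novelty as the share of newly retrieved chunk IDs that have not been seen in earlier passes; this is a deliberately simple substitute for embedding similarity, and the function names and the 0.3 threshold are assumptions for illustration.

```python
from typing import Iterable, Set


def novelty(new_ids: Iterable[str], seen_ids: Set[str]) -> float:
    """Fraction of the latest retrieval that was not returned by earlier passes."""
    new = set(new_ids)
    if not new:
        return 0.0
    return len(new - seen_ids) / len(new)


def should_stop(new_ids: Iterable[str], seen_ids: Set[str], min_novelty: float = 0.3) -> bool:
    """Stop and answer when the latest pass adds too little unseen content."""
    return novelty(new_ids, seen_ids) < min_novelty
```

For example, if three of four returned chunks were already retrieved, novelty is 0.25 and the gate tells the agent to stop and answer.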

For tool storms: set per-tool budgets and rate limits. Deduplicate results across tool calls. Add fallbacks: if a tool times out twice, use a cached result or skip it. Production teams using intent-based routing (classifying query complexity before choosing the retrieval path) report 40% cost reductions and 35% latency improvements.
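
The per-tool budget, deduplication, and timeout fallback can live in one thin wrapper around tool calls. The `ToolBudget` class below is a hypothetical sketch, assuming tools are plain callables that take one string argument and raise `TimeoutError` on timeout; the limits of five calls and two timeouts per tool are illustrative.

```python
from typing import Callable, Dict, Optional, Tuple


class ToolBudget:
    """Per-run guard: caches identical calls, caps calls, skips flaky tools."""

    def __init__(self, max_calls_per_tool: int = 5, max_timeouts: int = 2) -> None:
        self.max_calls_per_tool = max_calls_per_tool
        self.max_timeouts = max_timeouts
        self.calls: Dict[str, int] = {}
        self.timeouts: Dict[str, int] = {}
        self.cache: Dict[Tuple[str, str], str] = {}

    def call(self, name: str, fn: Callable[[str], str], arg: str) -> Optional[str]:
        key = (name, arg)
        if key in self.cache:
            return self.cache[key]  # identical call: reuse the earlier result
        if self.timeouts.get(name, 0) >= self.max_timeouts:
            return None             # tool timed out too often: skip it
        if self.calls.get(name, 0) >= self.max_calls_per_tool:
            return None             # per-tool budget exhausted
        self.calls[name] = self.calls.get(name, 0) + 1
        try:
            result = fn(arg)
        except TimeoutError:
            self.timeouts[name] = self.timeouts.get(name, 0) + 1
            return None
        self.cache[key] = result
        return result
```

A `None` return tells the agent to proceed without that tool's output instead of retrying, which is what keeps a provider outage from turning into a retry spiral.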

For context bloat: summarise tool outputs before injecting them into context. A 5,000-token API response can compress to 200 tokens of structured summary without losing signal. Cap top-k at 5–10 results. Deduplicate chunks aggressively: if two chunks share 80%+ semantic overlap, keep one. Microsoft’s LLMLingua achieves up to 20× prompt compression with minimal reasoning loss, which directly addresses bloat in agentic pipelines.
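
The deduplication and top-k rules might be combined as in the sketch below. It uses word-set Jaccard overlap as a cheap stand-in for semantic overlap, which is an assumption: a production system would more likely compare embeddings. The function names are hypothetical, and the input is assumed to be sorted by relevance, best first.

```python
from typing import List


def jaccard(a: str, b: str) -> float:
    """Word-set overlap between two chunks, from 0.0 (disjoint) to 1.0 (identical)."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    if not words_a and not words_b:
        return 1.0
    return len(words_a & words_b) / len(words_a | words_b)


def dedupe_and_cap(chunks: List[str], overlap: float = 0.8, top_k: int = 5) -> List[str]:
    """Keep at most top_k chunks, dropping any that overlap a kept chunk by >= overlap."""
    kept: List[str] = []
    for chunk in chunks:  # assumed ordered by relevance, best first
        if len(kept) >= top_k:
            break
        if all(jaccard(chunk, existing) < overlap for existing in kept):
            kept.append(chunk)
    return kept
```

Because the loop walks chunks in relevance order, the copy that survives from each near-duplicate group is the highest-ranked one.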

Control policies that apply everywhere: timebox every run. Add a “final answer required” mode that activates when any budget is hit, forcing the agent to answer with whatever evidence it has, along with explicit uncertainty markers and suggested next steps.

The decision rule is simple: use agentic RAG only when query complexity is high and the cost of being wrong is high. For FAQs, doc lookups, and straightforward extraction, classic RAG is faster, cheaper, and far easier to debug. If single-pass retrieval routinely fails for your hardest queries, add a controlled second pass before going full agentic.

Agentic RAG is not a better RAG. It’s RAG plus a control loop. And control loops demand budgets, stop rules, and traces. Without them, you’re shipping a distributed workflow without telemetry, and the first sign of failure will be your cloud bill.