In part 1 of this series we discussed creating reusable code assets that can be deployed across multiple projects. Leveraging a centralised repository of common data science steps ensures that experiments can be carried out faster and with greater confidence in the results. A streamlined experimentation phase is critical to delivering value to the business as quickly as possible.

In this article I want to focus on how you can increase the velocity at which you experiment. You may have tens to hundreds of ideas for different setups that you want to try, and carrying them out efficiently will greatly increase your productivity. Performing a full retraining when model performance decays and exploring the inclusion of new features when they become available are just a few situations where being able to iterate quickly over experiments becomes a great boon.

We Need To Talk About Notebooks (Again)

While Jupyter Notebooks are a great way to teach yourself about libraries and concepts, they can easily be misused and become a crutch that actively stands in the way of fast model development. Consider the case of a data scientist moving onto a new project. The first step is typically to open a new notebook and begin some exploratory data analysis (EDA): understanding what kind of data is available to you, computing some simple summary statistics, understanding your outcome and finally producing some simple visualisations to understand the relationship between the features and the outcome. These steps are a worthwhile endeavour, as understanding your data well is critical before you begin the experimentation process.

The issue here is not the EDA itself, but what comes after. What typically happens is that the data scientist moves on and immediately opens a new notebook to begin writing their experiment framework, normally starting with data transformations. This is usually done by copying code snippets from the EDA notebook into the new one. Once the first notebook is ready, it is executed and the results are either saved locally or written to an external location. This data is then picked up by another notebook, processed further (for example by feature selection) and written back out. This process repeats until your experiment pipeline consists of 5-6 notebooks that a data scientist must trigger in sequence for a single experiment to run.

With such a manual approach to experimentation, iterating over ideas and trying out different scenarios becomes a labour-intensive task. You end up with parallelisation at the human level, where whole teams of data scientists devote themselves to running experiments by keeping local copies of the notebooks and diligently editing their code to try different setups. The results are then added to a report, and once experimentation has finished the best performing setup is picked out from all the others.

None of this is sustainable. Team members go off sick or take holidays, experiments run overnight in the hope that the notebook doesn't crash, and you lose track of which experimental setups you have done and which are still to do. These should not be worries you have when running an experiment. Thankfully there is a better way, one that lets you iterate over ideas in a structured and methodical manner at scale. It will greatly simplify the experimentation phase of your project and greatly decrease its time to value.

Embrace Scripting To Create Your Experimental Pipeline

The first step in accelerating your ability to experiment is to move beyond notebooks and start scripting. This should be the simplest part of the process: you put your code into a .py file instead of the cellblocks of a .ipynb. From there you can invoke your script from the command line, for example:

```
python src/main.py
```

```python
if __name__ == "__main__":
    # Experiment settings, hard-coded for now
    input_data = ""
    output_loc = ""
    dataprep_config = {}
    featureselection_config = {}
    hyperparameter_config = {}

    # DataLoader, DataPrep, FeatureSelection, HyperparameterTuning, Evaluation and
    # ArtifactSaver stand for reusable pipeline components, such as those from part 1
    data = DataLoader().load(input_data)
    data_train, data_val = DataPrep().run(data, dataprep_config)
    features_to_keep = FeatureSelection().run(data_train, data_val, featureselection_config)
    model_hyperparameters = HyperparameterTuning().run(
        data_train, data_val, features_to_keep, hyperparameter_config
    )
    evaluation_metrics = Evaluation().run(
        data_train, data_val, features_to_keep, model_hyperparameters
    )
    ArtifactSaver(output_loc).save(
        [data_train, data_val, features_to_keep, model_hyperparameters, evaluation_metrics]
    )
```

Note that adhering to the principle of controlling your workflow by passing arguments into functions greatly simplifies the layout of your experimental pipeline. Having a script like this has already improved your ability to run experiments: you now need only a single script invocation instead of the stop-start process of running multiple notebooks in sequence.
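To make "controlling a step purely through its arguments" concrete, here is a minimal, hypothetical sketch. `treat_missing_values` is not one of the article's components; it works on a plain list rather than a DataFrame, and the method names `drop`, `impute_zero` and `impute_mean` are assumed options. The point is that the caller, not the function body, decides the behaviour.

```python
import statistics
from typing import List, Optional


def treat_missing_values(values: List[Optional[float]], method: str) -> List[float]:
    """Handle missing values (None) using the method chosen by the caller."""
    present = [v for v in values if v is not None]
    if method == "drop":
        # Remove missing entries entirely
        return present
    if method == "impute_zero":
        # Replace missing entries with 0.0
        return [0.0 if v is None else v for v in values]
    if method == "impute_mean":
        # Replace missing entries with the mean of the observed values
        mean = statistics.fmean(present)
        return [mean if v is None else v for v in values]
    raise ValueError(f"Unknown missing-data method: {method!r}")
```

Switching strategy is then a matter of changing one argument, for example `treat_missing_values(column, "impute_mean")`, which is exactly the kind of value a configuration file can later supply.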

You may want to add some input arguments to this script, such as being able to point to a specific data location or to specify where to store output artefacts. You can easily extend your script to take some command line arguments:

```
python src/main_with_arguments.py --input_data
```

```python
if __name__ == "__main__":
    # Data and output locations now come from the command line
    input_data, output_loc = parse_input_arguments()
    dataprep_config = {}
    featureselection_config = {}
    hyperparameter_config = {}

    data = DataLoader().load(input_data)
    data_train, data_val = DataPrep().run(data, dataprep_config)
    features_to_keep = FeatureSelection().run(data_train, data_val, featureselection_config)
    model_hyperparameters = HyperparameterTuning().run(
        data_train, data_val, features_to_keep, hyperparameter_config
    )
    evaluation_metrics = Evaluation().run(
        data_train, data_val, features_to_keep, model_hyperparameters
    )
    ArtifactSaver(output_loc).save(
        [data_train, data_val, features_to_keep, model_hyperparameters, evaluation_metrics]
    )
```

At this point you have the start of a good pipeline: you can set the input and output locations and invoke your script with a single command. However, trying out new ideas is still a relatively manual endeavour, because you need to go into your codebase and make changes. As mentioned previously, switching between experiment setups should ideally be as simple as changing the input arguments to a wrapper function that controls what gets carried out. We can bring all of these arguments into a single location so that modifying your experimental setup becomes trivial. The simplest way of implementing this is with a configuration file.
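The scripts above call a `parse_input_arguments` helper without defining it. A minimal sketch using the standard library's `argparse` might look like the following; the `--output_loc` flag name is an assumption, since only `--input_data` appears in the command shown.

```python
import argparse
from typing import Optional, Sequence, Tuple


def parse_input_arguments(argv: Optional[Sequence[str]] = None) -> Tuple[str, str]:
    """Read the input data location and output location from the command line.

    When argv is None, argparse reads the real command line (sys.argv[1:]).
    """
    parser = argparse.ArgumentParser(description="Run a single experiment end to end.")
    parser.add_argument("--input_data", required=True, help="Location of the input data")
    # Hypothetical flag: where the pipeline should write its artefacts
    parser.add_argument("--output_loc", required=True, help="Where to store output artefacts")
    args = parser.parse_args(argv)
    return args.input_data, args.output_loc
```

Inside the script's `__main__` block it is called with no arguments, so it parses whatever was typed after the script name. Accepting an optional `argv` list also makes the helper easy to exercise in tests without touching `sys.argv`.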

Configure Your Experiments With a Separate File

Storing all of your relevant function arguments in a separate file comes with several benefits. Splitting the configuration from the main codebase makes it easier to try out different experimental setups: you edit the relevant fields to reflect your new idea and you are ready to go. You can even swap out entire configuration files with ease. You also have full oversight of exactly what each experimental setup was. If you keep a separate file per experiment, you can go back to previous experiments and see exactly what was carried out.

So what does a configuration file look like, and how does it interface with the experiment pipeline script you have created? A simple implementation is to use YAML notation and set it up in the following manner:

- Top-level boolean flags to turn the different parts of your pipeline on and off

- For each step in your pipeline, a section defining the calculations you want to carry out

```yaml
file_locations:
  input_data: ""
  output_loc: ""

pipeline_steps:
  data_prep: True
  feature_selection: False
  hyperparameter_tuning: True
  evaluation: True

data_prep:
  nan_treatment: "drop"
  numerical_scaling: "normalize"
  categorical_encoding: "ohe"

# Settings for the other steps follow the same pattern
feature_selection: {}
hyperparameter_tuning: {}
```

This is a flexible and lightweight way of controlling how your experiments are run. You can then modify your script to load this configuration and use it to control the workflow of your pipeline:

```
python src/main_with_config.py --config_loc
```

```python
if __name__ == "__main__":
    config_loc = parse_input_arguments()
    config = load_config(config_loc)
    steps = config["pipeline_steps"]

    # Outputs of any step switched off in the config stay None; the downstream
    # components are assumed to handle that (e.g. None means "keep all features")
    data_train = data_val = features_to_keep = None
    model_hyperparameters = evaluation_metrics = None

    data = DataLoader().load(config["file_locations"]["input_data"])

    if steps["data_prep"]:
        data_train, data_val = DataPrep().run(data, config["data_prep"])

    if steps["feature_selection"]:
        features_to_keep = FeatureSelection().run(
            data_train, data_val, config["feature_selection"]
        )

    if steps["hyperparameter_tuning"]:
        model_hyperparameters = HyperparameterTuning().run(
            data_train, data_val, features_to_keep, config["hyperparameter_tuning"]
        )

    if steps["evaluation"]:
        evaluation_metrics = Evaluation().run(
            data_train, data_val, features_to_keep, model_hyperparameters
        )

    ArtifactSaver(config["file_locations"]["output_loc"]).save(
        [data_train, data_val, features_to_keep, model_hyperparameters, evaluation_metrics]
    )
```

We have now completely decoupled the setup of our experiment from the code that executes it. The experimental setup we try is determined entirely by the configuration file, making it trivial to test new ideas. We can even control which steps are carried out, allowing scenarios like:

- Running only data preparation and feature selection to generate an initial processed dataset, which then forms the basis of more detailed experimentation with different models and their hyperparameters

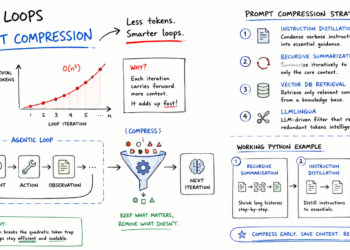
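The configuration-driven script relies on a `load_config` helper that is not defined here. A minimal sketch, assuming the PyYAML package is installed, could read the file with `yaml.safe_load` and fail early on obvious mistakes. The checks and the `REQUIRED_SECTIONS` name are illustrative choices, not part of the original pipeline.

```python
from pathlib import Path
from typing import Any, Dict, Union

import yaml  # PyYAML (pip install pyyaml)

# Sections every experiment config is assumed to contain
REQUIRED_SECTIONS = ("file_locations", "pipeline_steps")


def load_config(config_loc: Union[str, Path]) -> Dict[str, Any]:
    """Read an experiment config from a YAML file and sanity-check its layout."""
    with open(config_loc, encoding="utf-8") as f:
        config = yaml.safe_load(f)  # safe_load builds plain dicts/lists/scalars only

    if not isinstance(config, dict):
        raise ValueError(f"{config_loc} does not contain a YAML mapping")

    missing = [section for section in REQUIRED_SECTIONS if section not in config]
    if missing:
        raise ValueError(f"{config_loc} is missing sections: {missing}")

    # Step switches must be real booleans, so a typo such as "ture" fails loudly
    for step, enabled in config["pipeline_steps"].items():
        if not isinstance(enabled, bool):
            raise ValueError(f"pipeline_steps.{step} must be True or False, got {enabled!r}")

    return config
```

Validating at load time means a malformed config stops the run before any data is loaded, which matters once many experiments are launched unattended.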

Leverage Automation and Parallelism

We now have the ability to configure different experimental setups via a configuration file and to launch a full end-to-end experiment with a single command. All that is left is to scale up our capacity to iterate over different experimental setups as quickly as possible. The keys to this are:

- Automation to programmatically modify the configuration file

- Parallel execution of experiments

Step 1) is relatively trivial. We can write a shell script, or even a secondary Python script, whose job is to iterate over the experimental setups supplied by the user and launch a pipeline run with each new setup.

```shell
#!/bin/bash
# Pass the path to the config file as the first argument
CONFIG_LOC="$1"

for nan_treatment in drop impute_zero impute_mean
do
    # update_config_file is a helper you provide (e.g. a small Python script)
    # that writes the chosen NaN treatment into the config file
    update_config_file "$nan_treatment" "$CONFIG_LOC"
    python3 ./src/main_with_config.py --config_loc "$CONFIG_LOC"
done
```

Step 2) is a more interesting proposition and is very much situation dependent. All of the experiments you run are self-contained and have no dependency on one another, which means we can in theory launch them all at the same time. In practice this relies on you having access to external compute, either in-house or through a cloud service provider. If so, each experiment can be launched as a separate job on that compute, provided you have permission to use those resources. This does involve other considerations, however, such as deploying Docker images to ensure a consistent environment across experiments and working out how to embed your code within the external compute. Once this is solved, you are in a position to launch as many experiments as you wish, limited only by the resources of your compute provider.
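Both steps can also be sketched in Python. This is a minimal sketch, not the author's implementation: `expand_grid` and `run_all` are hypothetical helpers, and the example runs experiments concurrently on a single machine with `concurrent.futures` as a stand-in for submitting jobs to external compute. `run_experiment` is whatever callable launches one pipeline run, for example by writing the config to disk and invoking the script with `subprocess.run`.

```python
import copy
import itertools
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict, List

Config = Dict[str, Any]


def expand_grid(base_config: Config, section: str, options: Dict[str, List[Any]]) -> List[Config]:
    """Return one config per combination of the option values within `section`."""
    names = list(options)
    variants = []
    for values in itertools.product(*(options[name] for name in names)):
        config = copy.deepcopy(base_config)  # never mutate the shared base config
        config.setdefault(section, {}).update(zip(names, values))
        variants.append(config)
    return variants


def run_all(
    configs: List[Config],
    run_experiment: Callable[[Config], Any],
    max_workers: int = 4,
) -> List[Any]:
    """Run every experiment concurrently and return results in the order of `configs`."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_experiment, configs))
```

For example, `expand_grid(base, "data_prep", {"nan_treatment": ["drop", "impute_zero", "impute_mean"]})` yields three configs that differ only in `nan_treatment`; adding a second key such as `numerical_scaling` multiplies the number of setups. Threads are sufficient here on the assumption that the heavy lifting happens in child processes launched by `run_experiment`; for in-process model training, `ProcessPoolExecutor` would be the usual choice.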

Embed Loggers and Experiment Trackers for Easy Oversight

Being able to launch hundreds of parallel experiments on external compute is a clear victory on the path to reducing the time to value of data science projects. However, abstracting this process away comes at a cost: it is harder to interrogate, especially if something goes wrong. The interactive nature of notebooks made it possible to execute a cellblock and immediately look at the result.

You can monitor the progress of your pipeline by adding a logger to your experiment. You can capture key results, such as the features chosen by the selection process, or use it to signpost what is currently executing in the pipeline. If something goes wrong, you can refer to the log entries you have created to identify where the issue occurred, and then add more logging to better understand and resolve it.

```python
import logging

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
logger = logging.getLogger(__name__)

# create_data_split, treat_missing_data, scale_numerical_features and
# encode_categorical_features are the pipeline's own helper functions
logger.info("Splitting data into train and validation sets")
df_train, df_val = create_data_split(df, method="random")
logger.info(f"training data size: {df_train.shape[0]}, validation data size: {df_val.shape[0]}")

logger.info(f"treating missing data via: {missing_method}")
df_train = treat_missing_data(df_train, method=missing_method)

logger.info(f"scaling numerical data via: {scale_method}")
df_train = scale_numerical_features(df_train, method=scale_method)

logger.info(f"encoding categorical data via: {encode_method}")
df_train = encode_categorical_features(df_train, method=encode_method)
logger.info(f"number of features after encoding: {df_train.shape[1]}")
```

The final aspect of launching large-scale parallel experiments is finding efficient ways of analysing them so that you can quickly identify the best performing setup. Reading through event logs, or opening up performance files for each experiment individually, will quickly undo all the hard work you have put into streamlining your experimental process.

The simplest thing to do is to embed an experiment tracker into your pipeline script. A variety of first- and third-party tools let you set up a project space and then log the key performance metrics of every experimental setup you try. They usually come with a configurable front end that lets users create simple comparison plots, which makes finding the best performing experiment a much simpler endeavour.
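Dedicated trackers such as MLflow provide this with a UI and proper storage. As a rough illustration of the underlying idea only, here is a minimal file-based stand-in: the hypothetical `log_run`, `load_runs` and `best_run` helpers append each experiment's config and metrics as one JSON line, then pick the best run by a chosen metric. Concurrent appends from many parallel jobs to one file are not guaranteed to be safe, which is one reason real trackers are preferable at scale.

```python
import json
from pathlib import Path
from typing import Any, Dict, List


def log_run(tracker_file: Path, config: Dict[str, Any], metrics: Dict[str, float]) -> None:
    """Append one experiment's setup and results as a single JSON line."""
    with tracker_file.open("a", encoding="utf-8") as f:
        f.write(json.dumps({"config": config, "metrics": metrics}) + "\n")


def load_runs(tracker_file: Path) -> List[Dict[str, Any]]:
    """Read back every logged run, skipping blank lines."""
    with tracker_file.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


def best_run(tracker_file: Path, metric: str, higher_is_better: bool = True) -> Dict[str, Any]:
    """Return the logged run with the best value of `metric`."""
    runs = load_runs(tracker_file)
    pick = max if higher_is_better else min
    return pick(runs, key=lambda run: run["metrics"][metric])
```

Each pipeline run would call `log_run` once its evaluation step finishes, and a single `best_run(path, "auc")` call then replaces opening result files one by one. The metric name here is only an example.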

Conclusion

In this article we have explored how to create pipelines that make the experimentation process effortless. This involved moving out of notebooks and converting your experiment process into a single script. That script is backed by a configuration file that controls the setup of your experiment, making it trivial to try different setups. External compute is then leveraged to parallelise the execution of the experiments. Finally, we discussed using loggers and experiment trackers to maintain oversight of your experiments and track their outcomes more easily. Together, these practices allow data scientists to greatly accelerate their ability to run experiments, reducing the time to value of their projects and delivering results to the business sooner.