rush to combine giant language fashions (LLMs) into customer support brokers, inside copilots, and code era helpers, there’s a blind spot rising: safety. Whereas we concentrate on the continual technological developments and hype round AI, the underlying dangers and vulnerabilities typically go unaddressed. I see many firms dealing with a double commonplace on the subject of safety. OnPrem IT set-ups are subjected to intense scrutiny, however using cloud AI providers like Azure OpenAI studio, or Google Gemini are adopted shortly with the press of a button.

I understand how simple it’s to simply construct a wrapper answer round hosted LLM APIs, however is it actually the fitting selection for enterprise use instances? In case your AI agent is leaking firm secrets and techniques to OpenAI or getting hijacked by way of a cleverly worded immediate, that’s not innovation however a breach ready to occur. Simply because we’re indirectly confronted with safety decisions that concern the precise fashions when leveraging these exterior API’s, shouldn’t imply that we will neglect that the businesses behind these fashions made these decisions for us.

On this article I wish to discover the hidden dangers and make the case for a extra safety conscious path: self-hosted LLMs and acceptable danger mitigation methods.

LLMs aren’t secure by default

Simply because an LLM sounds very good with its outputs doesn’t imply that they’re inherently secure to combine into your programs. A latest research by Yoao et al. explored the twin position of LLMs in safety [1]. Whereas LLMs open up loads of potentialities and might typically even assist with safety practices, additionally they introduce new vulnerabilities and avenues for assault. Commonplace practices nonetheless have to evolve to have the ability to sustain with the brand new assault surfaces being created by AI powered options.

Let’s take a look at a few vital safety dangers that have to be handled when working with LLMs.

Information Leakage

Information Leakage occurs when delicate info (like consumer knowledge or IP) is unintentionally uncovered, accessed or misused throughout mannequin coaching or inference. With the typical value of a knowledge breach reaching $5 million in 2025 [2], and 33% of workers repeatedly sharing delicate knowledge with AI instruments [3], knowledge leakage poses a really actual danger that ought to be taken critically.

Even when these third celebration LLM firms are promising to not practice in your knowledge, it’s laborious to confirm what’s logged, cached, or saved downstream. This leaves firms with little management over GDPR and HIPAA compliance.

Immediate injection

An attacker doesn’t want root entry to your AI programs to do hurt. A easy chat interface already supplies loads of alternative. Immediate Injection is a technique the place a hacker methods an LLM into offering unintended outputs and even executing unintended instructions. OWASP notes immediate injection because the primary safety danger for LLMs [4].

An instance situation:

A consumer employs an LLM to summarize a webpage containing hidden directions that trigger the LLM to leak chat info to an attacker.

The extra company your LLM has the larger the vulnerability for immediate injection assaults [5].

Opaque provide chains

LLMs like GPT-4, Claude, and Gemini are closed-source. Subsequently you received’t know:

- What knowledge they have been educated on

- Once they have been final up to date

- How weak they’re to zero-day exploits

Utilizing them in manufacturing introduces a blind spot in your safety.

Slopsquatting

With extra LLMs getting used as coding assistants a brand new safety risk has emerged: slopsquatting. You could be conversant in the time period typesquatting the place hackers use widespread typos in code or URLs to create assaults. In slopsquatting, hackers don’t depend on human typos, however on LLM hallucinations.

LLMs are inclined to hallucinate non-existing packages when producing code snippets, and if these snippets are used with out correct checks, this supplies hackers with an ideal alternative to contaminate your programs with malware and the likes [6]. Typically these hallucinated packages will sound very acquainted to actual packages, making it tougher for a human to select up on the error.

Correct mitigation methods assist

I do know most LLMs appear very good, however they don’t perceive the distinction between a traditional consumer interplay and a cleverly disguised assault. Counting on them to self-detect assaults is like asking autocomplete to set your firewall guidelines. That’s why it’s so vital to have correct processes and tooling in place to mitigate the dangers round LLM based mostly programs.

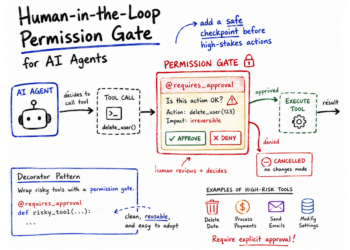

Mitigation methods for a primary line of defence

There are methods to scale back danger when working with LLMs:

- Enter/output sanitization (like regex filters). Similar to it proved to be vital in front-end growth, it shouldn’t be forgotten in AI programs.

- System prompts with strict boundaries. Whereas system prompts should not a catch-all, they may help to set a very good basis of boundaries

- Utilization of AI guardrails frameworks to stop malicious utilization and implement your utilization insurance policies. Frameworks like Guardrails AI make it simple to arrange one of these safety [7].

Ultimately these mitigation methods are solely a primary wall of defence. In the event you’re utilizing third celebration hosted LLMs you’re nonetheless sending knowledge exterior your safe setting, and also you’re nonetheless depending on these LLM firms to appropriately deal with safety vulnerabilities.

Self-hosting your LLMs for extra management

There are many highly effective open-source alternate options which you can run domestically in your personal environments, by yourself phrases. Latest developments have even resulted in performant language fashions that may run on modest infrastructure [8]! Contemplating open-source fashions is not only about value or customization (which arguably are good bonusses as effectively). It’s about management.

Self-hosting offers you:

- Full knowledge possession, nothing leaves your chosen setting!

- Customized fine-tuning potentialities with non-public knowledge, which permits for higher efficiency in your use instances.

- Strict community isolation and runtime sandboxing

- Auditability. You already know what mannequin model you’re utilizing and when it was modified.

Sure, it requires extra effort: orchestration (e.g. BentoML, Ray Serve), monitoring, scaling. I’m additionally not saying that self-hosting is the reply for every thing. Nonetheless, once we’re speaking about use instances dealing with delicate knowledge, the trade-off is value it.

Deal with GenAI programs as a part of your assault floor

In case your chatbot could make selections, entry paperwork, or name APIs, it’s successfully an unvetted exterior marketing consultant with entry to your programs. So deal with it equally from a safety perspective: govern entry, monitor rigorously, and don’t outsource delicate work to them. Hold the vital AI programs in home, in your management.

References

[1] Y. Yoao et al., A survey on giant language mannequin (LLM) safety and privateness: The Good, The Unhealthy, and The Ugly (2024), ScienceDirect

[2] Y. Mulayam, Information Breach Forecast 2025: Prices & Key Cyber Dangers (2025), Certbar

[3] S. Dobrontei and J. Nurse, Oh, Behave! The Annual Cybersecurity Attitudes and Behaviors Report 2024–2025 — CybSafe (2025), Cybsafe and the Nationwide Cybersecurity Alliance

[4] 2025 Prime 10 Threat & Mitigations for LLMs and Gen AI Apps (2025), OWASP

[5] Okay. Greshake et al., Not what you’ve signed up for: Compromising Actual-World LLM-Built-in Purposes with Oblique Immediate Injection(2023), Affiliation for Computing Equipment

[6] J. Spracklen et al. We Have a Package deal for You! A Complete Evaluation of Package deal Hallucinations by Code Producing LLMs(2025), USENIX 2025

[7] Guardrails AI, GitHub — guardrails-ai/guardrails: Including guardrails to giant language fashions.

[8] E. Shittu, Google’s Gemma 3 can run on a single TPU or GPU (2025), TechTarget