When I read, I like to highlight stuff (I use a Kindle). I feel like I don’t retain more than 10% of the information I consume just by reading it; re-reading the highlights, or summarizing the book from them, is what makes me really understand what I read.

The problem is that, sometimes, I end up highlighting a lot.

And by a lot I mean A LOT. We can’t even call them “key notes.”

So in those cases, after reading the book, I end up either wasting a lot of time summarizing or just giving up (the latter is more frequent).

I recently read a book that I enjoyed a lot, and I would like to fully retain what struck me the most. But, once again, it was one of those books I over-highlighted.

And I didn’t want to spend a lot of my scarce free time on it. So I decided to automate the process and use my tech/data skills. Because I’m happy with the result, I thought I’d share it so anyone can take advantage of this tool as well.

Disclaimer: my Kindle is quite old, so this should work on newer ones as well. In fact, there’s a slightly better approach for newer Kindle versions (also explained in this post).

The Project

Let’s define the goal: generate a summary from our Kindle highlights.

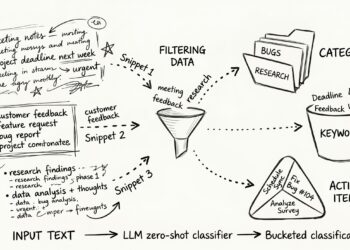

As I thought about it, I imagined the following simple pipeline for a single book:

- Get the book highlights

- Create a RAG or something similar

- Export the summary

The result differs in the first part, but only because of the preprocessing needed once you take into account how the data is structured.

So I’ll structure this post into two main sections:

- Data retrieval and processing

- AI model and output

1. Data Retrieval and Processing

My intuition told me there was a way to extract highlights from my Kindle. In the end, they’re stored there, so I just need a way to get them out.

There are several ways to do it, but I wanted an approach that worked both with books bought on the official Kindle store and with PDFs or files I sent from my laptop.

And I also decided I wouldn’t use any existing software to extract the data. Just my e-reader and my laptop (and a USB cable connecting the two).

Luckily for us, no jailbreak is needed, and there are two ways of doing it depending on your Kindle version:

- All Kindles (presumably) have a file in the documents folder named My Clippings.txt. It literally contains every clipping you’ve made, at any point and in any book.

- New Kindles also have a SQLite file in the system directory named annotations.db. This has your highlights in a more structured form.

In this post I’ll be using method 1 (My Clippings.txt), mainly because my Kindle doesn’t have the annotations.db database. But if you’re lucky enough to have the DB, use it, as it’ll be more straightforward and of higher quality (most of the preprocessing we’ll see next probably won’t be needed).
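Since this post doesn’t cover the layout of annotations.db, here is a minimal sketch of how one might explore it before relying on it. It assumes nothing about the schema: it only lists the tables and their columns using Python’s built-in sqlite3 module. The function name list_tables is hypothetical, and the path you pass in is up to you.

```python
import sqlite3


def list_tables(db_path):
    """Return {table_name: [column names]} for a SQLite file (schema-agnostic)."""
    conn = sqlite3.connect(db_path)
    try:
        tables = [
            row[0]
            for row in conn.execute(
                "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
            )
        ]
        schema = {}
        for table in tables:
            # PRAGMA table_info yields one row per column; index 1 is the column name.
            columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
            schema[table] = [col[1] for col in columns]
        return schema
    finally:
        conn.close()
```

It’s safer to run this on a copy of the file rather than on the device itself. Also note that sqlite3.connect creates an empty database if the path doesn’t exist, so double-check the path first.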

So getting the clippings is as easy as reading the TXT. Here are some key aspects and issues I encountered using this method:

- All books are in the same file.

- I’m not sure about the exact “clipping” definition on Amazon’s side, but the way I’ve seen it is: anything you highlight at any point. Even if you delete it or expand it, the original will remain in the TXT. I guess this is because we’re working with a plain TXT file, and it’s very hard to delete stuff that’s not indexed in any way.

- There’s a limit to clipping: I’m not aware of the exact threshold, but once we pass it, we can’t retrieve any more clippings. Presumably this is done because someone could otherwise highlight the entire book, extract it and share it illegally.

And this is the anatomy of a clipping:

    ==========
    Book Title (Author Name)
    - Your Highlight on page 145 | Location 2212-2212 | Added on Sunday, August 30, 2020 11:25:29 PM

    transparency problem leads to the same place as
    ==========

So the first step is parsing the highlights, and this is where we start seeing Python code:

    import re
    from pathlib import Path

    def parse_clippings(file_path):
        # utf-8-sig also strips a leading byte-order mark, if the file has one
        raw = Path(file_path).read_text(encoding="utf-8-sig")
        entries = raw.split("==========")
        highlights = []
        for entry in entries:
            lines = [l.strip() for l in entry.strip().split("\n") if l.strip()]
            # a valid entry has a title line, a metadata line and some text
            if len(lines) < 3:
                continue
            book = lines[0]
            # skip notes and bookmarks
            if "Highlight" not in lines[1]:
                continue
            location_match = re.search(r"Location (\d+)", lines[1])
            if not location_match:
                continue
            location = int(location_match.group(1))
            text = " ".join(lines[2:]).strip()
            highlights.append(
                {
                    "book": book,
                    "location": location,
                    "text": text
                }
            )
        return highlights

Given the path of the clippings file, all this function does is split the text into the different entries and then loop through them. For each entry, it extracts the book title, the location and the highlighted text.
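To make the parsing step concrete, here is a small sketch that runs the same regular expression on the metadata line from the sample clipping above. The helper name extract_location is only for illustration; it isn’t part of the script.

```python
import re

META = (
    "- Your Highlight on page 145 | Location 2212-2212 | "
    "Added on Sunday, August 30, 2020 11:25:29 PM"
)


def extract_location(meta_line):
    """Return the start location as an int, or None if the line has no location."""
    match = re.search(r"Location (\d+)", meta_line)
    return int(match.group(1)) if match else None


print(extract_location(META))           # 2212
print(extract_location("- Your Note"))  # None
```

Only the start of the location range is captured, which is enough for sorting highlights in reading order.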

This final structure (a list of dictionaries) makes it easy to filter by book:

    [
        h for h in highlights
        if book_name.lower() in h["book"].lower()
    ]

Once filtered, we must order the highlights. Since clippings are appended to the TXT file, the order is based on when we highlighted, not on the text’s location.

And I personally want my results to appear as they do in the book, so sorting is necessary:

    sorted(highlights, key=lambda x: x["location"])

Now, if you inspect your clippings file, you might find duplicated clippings (or duplicated sub-clippings). This happens because any time you edit a highlight (because you failed to include all the words you were aiming for, for example), it’s counted as a new one. So there will be two very similar clippings in the TXT. Or even more if you edit it many times.

We need to handle this by applying some form of deduplication. It’s easier than expected:

    def deduplicate(highlights):
        clean = []
        for h in highlights:
            text = h["text"]
            duplicate = False
            for c in clean:
                # exact duplicate
                if text == c["text"]:
                    duplicate = True
                    break
                # shorter fragment of a clipping we already kept
                if text in c["text"]:
                    duplicate = True
                    break
                # longer version of a kept clipping: keep the longer text
                if c["text"] in text:
                    c["text"] = text
                    duplicate = True
                    break
            if not duplicate:
                clean.append(h)
        return clean

It’s very simple and could be perfected, but we basically check whether a clipping has the same text as one we’ve already kept (or contains it, or is contained in it) and keep the longest version.
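To see that behaviour on data, here is a short worked example. It uses a condensed variant of the function above (a for/else instead of the duplicate flag, and a copy of each dictionary so the input isn’t modified); the sample highlights are made up for the demo.

```python
def deduplicate(highlights):
    """Keep one entry per overlapping text, preferring the longest version."""
    clean = []
    for h in highlights:
        text = h["text"]
        for kept in clean:
            if text in kept["text"]:
                break  # same text, or a shorter fragment of one we already kept
            if kept["text"] in text:
                kept["text"] = text  # the new clipping extends the kept one
                break
        else:
            clean.append(dict(h))  # no overlap found: keep a copy
    return clean


sample = [
    {"location": 10, "text": "transparency problem leads"},
    {"location": 10, "text": "transparency problem leads to the same place"},
    {"location": 42, "text": "a completely different idea"},
]

for h in deduplicate(sample):
    print(h["location"], h["text"])
# 10 transparency problem leads to the same place
# 42 a completely different idea
```

The comparison is quadratic in the number of highlights, which is fine for a single book but worth keeping in mind for very large files.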

Right now we have the book highlights properly sorted, and we could stop the preprocessing here. But I can’t do that. I like to highlight titles every time because, when summarizing, I get to properly assign a section to each highlight.

But our code isn’t able to tell a real highlight from a section title… Yet. See below:

    # has_chapter_prefix and STOPWORDS are defined in the full code below
    def is_probable_title(text):
        text = text.strip()
        if len(text) > 120:
            return False
        if text.endswith("."):
            return False
        words = text.split()
        # guard against empty input (avoids dividing by zero below)
        if not words or len(words) > 12:
            return False
        # chapter-style prefix
        if has_chapter_prefix(text):
            return True
        # capitalization ratio
        capitalized = sum(
            1 for w in words if w[0].isupper()
        )
        cap_ratio = capitalized / len(words)
        # stopword ratio
        stopword_count = sum(
            1 for w in words if w.lower() in STOPWORDS
        )
        stop_ratio = stopword_count / len(words)
        score = 0
        if cap_ratio > 0.6:
            score += 1
        if stop_ratio < 0.3:
            score += 1
        if len(words) <= 6:
            score += 1
        return score >= 2

It can seem quite arbitrary, and it’s not the best solution to this problem, but it does work quite well. It uses a heuristic based on capitalization, length, stopwords and prefixes.
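To get a feel for the scoring, here is a small sketch that computes the three signals separately for two sample strings. The helper names title_signals and score are hypothetical, and the strings are made up; the thresholds and the stopword list are the ones used in the script.

```python
STOPWORDS = {
    "the", "and", "or", "but", "of", "in", "on", "at", "for", "to",
    "is", "are", "was", "were", "be", "been", "being",
    "that", "this", "with", "as", "by", "from",
}


def title_signals(text):
    """Return the three signals the heuristic scores, for a non-empty string."""
    words = text.split()
    cap_ratio = sum(1 for w in words if w[0].isupper()) / len(words)
    stop_ratio = sum(1 for w in words if w.lower() in STOPWORDS) / len(words)
    return {"cap_ratio": cap_ratio, "stop_ratio": stop_ratio, "short": len(words) <= 6}


def score(signals):
    """Count how many signals point towards 'title' (a score of 2 or more means title)."""
    return (
        int(signals["cap_ratio"] > 0.6)
        + int(signals["stop_ratio"] < 0.3)
        + int(signals["short"])
    )


for candidate in [
    "The Transparency Problem",
    "transparency problem leads to the same place as",
]:
    print(candidate, "->", score(title_signals(candidate)))
# The Transparency Problem -> 2
# transparency problem leads to the same place as -> 0
```

The first string passes on capitalization and length even though a third of its words are stopwords; the second fails all three checks, so it is treated as a regular highlight.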

This function is called inside a loop through all the highlights, as we’ve seen in earlier functions, to check whether each highlight is a title or not. The result is a “sections” list of dictionaries, where each dictionary has two keys:

- title: the section title.

- highlights: the section’s highlights.
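The grouping loop itself only appears in the full code at the end of the post, so here is a self-contained sketch of it. To keep the demo short, the title check is passed in as a parameter (is_title, a name introduced here) and replaced by a toy predicate; highlights that come before the first title land in a default “Introduction” section, as in the full script.

```python
def group_by_sections(texts, is_title):
    """Split an ordered list of highlight texts into titled sections."""
    sections = []
    current = {"title": "Introduction", "highlights": []}
    for text in texts:
        if is_title(text):
            # a title closes the current section and opens a new one
            sections.append(current)
            current = {"title": text, "highlights": []}
        else:
            current["highlights"].append(text)
    sections.append(current)
    return sections


texts = [
    "a highlight made before any title",
    "Chapter 1",
    "first idea of the chapter",
    "second idea of the chapter",
]

# Toy predicate for the demo only; the script uses is_probable_title instead.
sections = group_by_sections(texts, is_title=lambda t: t.startswith("Chapter"))
for s in sections:
    print(s["title"], s["highlights"])
# Introduction ['a highlight made before any title']
# Chapter 1 ['first idea of the chapter', 'second idea of the chapter']
```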

Right now, yes, we’re ready to summarize.

2. AI Model and Output

I wanted this to be a free project, so we need an AI model that’s open source.

I thought that Ollama [1] was one of the best options to run a project like this (at least locally). Plus, our data always remains ours, and we can run the models offline.

Once installed, the code was easy. I’m not a prompt engineer, so anyone with the know-how would get even better results, but this is what works for me:

    import subprocess

    def summarize_with_ollama(text, model):
        prompt = f"""
    You are summarizing a book from reader highlights.
    Produce a structured summary with:
    - Main thesis
    - Brief summary
    - Key ideas
    - Important concepts
    - Practical takeaways
    Highlights:
    {text}
    """
        # the prompt is passed to the model through standard input
        result = subprocess.run(
            ["ollama", "run", model],
            input=prompt,
            text=True,
            capture_output=True
        )
        return result.stdout

Simple, I know. But it works, partly because the data preprocessing has been intense, but also because we’re leveraging models that are already built and available.
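If you’d rather not shell out to the CLI, Ollama also exposes a local HTTP API. What follows is only a sketch, assuming the server is running on its default address (http://localhost:11434) and that the /api/generate endpoint accepts model, prompt and stream fields and returns the generated text under a response key. The function names build_request and summarize_via_http are made up here; check the API docs for your installed version before relying on it.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # assumed default local address


def build_request(prompt, model, url=OLLAMA_URL):
    """Build the POST request without sending it."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def summarize_via_http(prompt, model, timeout=600):
    """Send the prompt to a running Ollama server and return the generated text."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # "response" is the assumed key for the generated text
    return body.get("response", "")
```

Keeping the request construction separate from the network call makes it possible to inspect the payload without a server running.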

But what do we do with the summary? I like using Obsidian [2], so exporting a Markdown file is what makes the most sense. Here you have it:

    from pathlib import Path

    def export_markdown(book, sections, summary, output):
        md = f"# {book}\n\n"
        # one heading per section, one bullet per highlight
        for section in sections:
            md += f"## {section['title']}\n\n"
            for h in section["highlights"]:
                md += f"- {h}\n"
            md += "\n"
        md += "\n---\n\n"
        md += "## Book Summary\n\n"
        md += summary
        output_path = Path(output)
        # create the destination folder if it doesn't exist yet
        output_path.parent.mkdir(parents=True, exist_ok=True)
        output_path.write_text(md, encoding="utf-8")
        print(f"\nSaved to {output_path}")

Et voilà.

And this is how I go from highlights to a full Markdown summary (straight to Obsidian if I want to) with fewer than 300 lines of Python code!

Full Code and Test

Here’s the full code, just in case you want to copy-paste it. It contains what we’ve seen plus some helper functions and argument parsing:

    import re
    import argparse
    from pathlib import Path
    import subprocess

    # ---------- PARSE CLIPPINGS ----------

    def parse_clippings(file_path):
        # utf-8-sig also strips a leading byte-order mark, if the file has one
        raw = Path(file_path).read_text(encoding="utf-8-sig")
        entries = raw.split("==========")
        highlights = []
        for entry in entries:
            lines = [l.strip() for l in entry.strip().split("\n") if l.strip()]
            if len(lines) < 3:
                continue
            book = lines[0]
            if "Highlight" not in lines[1]:
                continue
            location_match = re.search(r"Location (\d+)", lines[1])
            if not location_match:
                continue
            location = int(location_match.group(1))
            text = " ".join(lines[2:]).strip()
            highlights.append(
                {
                    "book": book,
                    "location": location,
                    "text": text
                }
            )
        return highlights

    # ---------- FILTER BOOK ----------

    def filter_book(highlights, book_name):
        return [
            h for h in highlights
            if book_name.lower() in h["book"].lower()
        ]

    # ---------- SORT ----------

    def sort_by_location(highlights):
        return sorted(highlights, key=lambda x: x["location"])

    # ---------- DEDUPLICATE ----------

    def deduplicate(highlights):
        clean = []
        for h in highlights:
            text = h["text"]
            duplicate = False
            for c in clean:
                if text == c["text"]:
                    duplicate = True
                    break
                if text in c["text"]:
                    duplicate = True
                    break
                if c["text"] in text:
                    c["text"] = text
                    duplicate = True
                    break
            if not duplicate:
                clean.append(h)
        return clean

    # ---------- TITLE DETECTION ----------

    STOPWORDS = {
        "the","and","or","but","of","in","on","at","for","to",
        "is","are","was","were","be","been","being",
        "that","this","with","as","by","from"
    }

    def has_chapter_prefix(text):
        # "chapter 3", "part 2", "section 4", "1." / "1)", or a roman numeral followed by a dot
        return bool(
            re.match(
                r"^(chapter|part|section)\s+\d+|^\d+[.)]|^[ivxlcdm]+\.",
                text.lower()
            )
        )

    def is_probable_title(text):
        text = text.strip()
        if len(text) > 120:
            return False
        if text.endswith("."):
            return False
        words = text.split()
        # guard against empty input (avoids dividing by zero below)
        if not words or len(words) > 12:
            return False
        # chapter-style prefix
        if has_chapter_prefix(text):
            return True
        # capitalization ratio
        capitalized = sum(
            1 for w in words if w[0].isupper()
        )
        cap_ratio = capitalized / len(words)
        # stopword ratio
        stopword_count = sum(
            1 for w in words if w.lower() in STOPWORDS
        )
        stop_ratio = stopword_count / len(words)
        score = 0
        if cap_ratio > 0.6:
            score += 1
        if stop_ratio < 0.3:
            score += 1
        if len(words) <= 6:
            score += 1
        return score >= 2

    # ---------- GROUP SECTIONS ----------

    def group_by_sections(highlights):
        sections = []
        # highlights that appear before the first title go here
        current = {
            "title": "Introduction",
            "highlights": []
        }
        for h in highlights:
            text = h["text"]
            if is_probable_title(text):
                sections.append(current)
                current = {
                    "title": text,
                    "highlights": []
                }
            else:
                current["highlights"].append(text)
        sections.append(current)
        return sections

    # ---------- SUMMARY ----------

    def summarize_with_ollama(text, model):
        prompt = f"""
    You are summarizing a book from reader highlights.
    Produce a structured summary with:
    - Main thesis
    - Brief summary
    - Key ideas
    - Important concepts
    - Practical takeaways
    Highlights:
    {text}
    """
        result = subprocess.run(
            ["ollama", "run", model],
            input=prompt,
            text=True,
            capture_output=True
        )
        return result.stdout

    # ---------- EXPORT MARKDOWN ----------

    def export_markdown(book, sections, summary, output):
        md = f"# {book}\n\n"
        for section in sections:
            md += f"## {section['title']}\n\n"
            for h in section["highlights"]:
                md += f"- {h}\n"
            md += "\n"
        md += "\n---\n\n"
        md += "## Book Summary\n\n"
        md += summary
        output_path = Path(output)
        output_path.parent.mkdir(parents=True, exist_ok=True)
        output_path.write_text(md, encoding="utf-8")
        print(f"\nSaved to {output_path}")

    # ---------- MAIN ----------

    def main():
        parser = argparse.ArgumentParser()
        parser.add_argument("--book", required=True)
        parser.add_argument("--output", required=False, default=None)
        parser.add_argument(
            "--clippings",
            default="Data/My Clippings.txt"
        )
        parser.add_argument(
            "--model",
            default="mistral"
        )
        args = parser.parse_args()
        highlights = parse_clippings(args.clippings)
        highlights = filter_book(highlights, args.book)
        highlights = sort_by_location(highlights)
        highlights = deduplicate(highlights)
        sections = group_by_sections(highlights)
        all_text = "\n".join(
            h["text"] for h in highlights
        )
        summary = summarize_with_ollama(all_text, args.model)
        if args.output:
            export_markdown(
                args.book,
                sections,
                summary,
                args.output
            )
        else:
            print("\n---- HIGHLIGHTS ----\n")
            for h in highlights:
                print(f"{h['text']}\n")
            print("\n---- SUMMARY ----\n")
            print(summary)

    if __name__ == "__main__":
        main()

But let’s see how it works! The code itself is useful, but I bet you’re eager to see the results. The output is long, so I cut the first part, since all it does is print the highlights back.

I randomly chose a book I read about 6 years ago (2020) called Talking to Strangers by Malcolm Gladwell (a bestseller and quite an enjoyable read). See the model’s printed output (not the Markdown):

    $ python3 kindle_summary.py --book "Talking to Strangers"

    ---- HIGHLIGHTS ----

    ...

    ---- SUMMARY ----

    Title: Talking to Strangers: What We Should Know About Human Interaction

    Main Thesis: The book explores the complexities and paradoxes of human
    interaction, particularly in conversations with strangers, and emphasizes
    the importance of caution, humility, and understanding the context in
    which these interactions occur.

    Brief Summary: The author delves into the misconceptions and shortcomings
    in our dealings with strangers, focusing on how we often make incorrect
    assumptions about others based on limited information or preconceived
    notions. The book offers insights into why this happens, its consequences,
    and strategies for improving our ability to understand and communicate
    effectively with people we don't know.

    Key Ideas:
    1. The transparency problem and the default-to-truth problem: People often
    assume that others are open books, sharing their true emotions and
    intentions, when in reality this is not always the case.
    2. Coupling: Behaviors are strongly linked to specific circumstances and
    conditions, making it essential to understand the context in which a
    stranger operates.
    3. Limitations of understanding strangers: There is no perfect mechanism
    for peering into the minds of those we do not know, emphasizing the need
    for restraint and humility when interacting with strangers.

    Important Concepts:
    1. Emotional responses falling outside expectations
    2. Defaulting to truth
    3. Transparency as an illusion
    4. Contextual understanding in dealing with strangers
    5. The paradox of talking to strangers (need versus terribleness)
    6. The phenomenon of coupling and its influence on behavior
    7. Blaming the stranger when things go awry

    Practical Takeaways:
    1. Recognize that people may not always appear as they seem, both
    emotionally and behaviorally.
    2. Understand the importance of context in interpreting strangers'
    behaviors and intentions.
    3. Be cautious and humble when interacting with strangers, acknowledging
    our limitations in understanding them fully.
    4. Avoid jumping to conclusions about strangers based on limited
    information or preconceived notions.
    5. Accept that there will always be some degree of ambiguity and
    complexity in dealing with strangers.
    6. Avoid penalizing others for defaulting to truth as a defense mechanism.
    7. When interactions with strangers go awry, consider the role one might
    have played in contributing to the situation rather than solely blaming
    the stranger.

And all this within a few seconds. Pretty cool, in my opinion.

Conclusion

And that’s basically how I’m now saving a lot of free time (that I can use to write posts like this one) by leveraging my data skills and AI.

I hope you enjoyed the read and felt motivated to give it a try! It won’t be better than the summary you’d write with your own perception of the book… But it won’t be far from that!

Thanks for your attention, and feel free to comment if you have any ideas or suggestions!

Resources

[1] Ollama. (n.d.). Ollama. https://ollama.com

[2] Obsidian. (n.d.). Obsidian. https://obsidian.md