in AI for some time, you’re probably an LLM/Agent/Chat user. But have you ever asked yourself how these tools will be trained in the near future, and what happens if we’ve already used up the data we need to train models? Many theories say that we’re running out of high-quality, human-generated data to train our models.

New content goes up every day, that’s a reality, but a growing share of what gets added each day is itself AI-generated. So if you keep training on public web data, you’re eventually training on the outputs of your own predecessors. The snake eating its tail. Researchers call this phenomenon Model Collapse: AI models start learning from the errors of their predecessors until the whole system degrades into nonsense.
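To make the snake-eating-its-tail dynamic concrete, here is a toy simulation (an illustration of the mechanism, not something taken from the paper). Each "generation" fits a Gaussian to a small sample drawn from the previous generation's Gaussian. The function name `simulate_collapse` and the parameters (20 samples per generation, 500 generations) are assumptions chosen for illustration. Because every fit only sees a finite sample of the previous model's output, the estimated spread tends to shrink from one generation to the next, so the tails, where the rare cases live, vanish first.

```python
from __future__ import annotations

import random
import statistics


def simulate_collapse(generations: int = 500, n_samples: int = 20, seed: int = 0) -> list[float]:
    """Toy model collapse: each generation is fitted only to samples
    produced by the previous generation's model."""
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0 stands in for the original human data
    history = [sigma]
    for _ in range(generations):
        # The current model generates a finite "training set"...
        samples = [rng.gauss(mu, sigma) for _ in range(n_samples)]
        # ...and the next model is fitted to it (maximum-likelihood mean and std).
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)
        history.append(sigma)
    return history


if __name__ == "__main__":
    stds = simulate_collapse()
    for g in (0, 100, 200, 300, 400, 500):
        print(f"generation {g:3d}: fitted std = {stds[g]:.3g}")
```

Real model collapse involves far richer models, but the ingredients are the same in spirit: finite samples, plus refitting on your own outputs.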

But what if I told you we aren’t actually running out of data? We’ve just been looking in the wrong place.

In this article, I’m going to break down the key insights from this excellent paper.

The Web We Already Use and the Web That Matters

Most of us think of the web as a single source of information. In reality, there are at least two.

There’s the Surface Web: the indexed, public world we find on Reddit, Wikipedia, and news sites. This is what we’ve already scraped and overused for years to train today’s mainstream AI models. Then there’s what we call the Deep Web, and here I’m not talking about the “Dark Web” or anything illegal.

The Deep Web is simply everything behind a login or a firewall: anything online that isn’t publicly indexed. It could be your hospital’s patient portal, your bank’s internal dashboard, enterprise document archives, private databases, or years of email sitting behind a login screen. Normal, boring, but incredibly valuable data.

Many studies suggest the Deep Web is orders of magnitude larger than the Surface Web. More importantly, its data tends to be of much higher quality. Surface Web content can be noisy, full of misinformation, and heavily SEO-optimized, and it increasingly contains content deliberately designed to mislead or poison AI models. Deep Web data, like medical records, verified financial documents, or other internal databases, tends to be clean, authenticated, and organized by people who care about its quality.

The problem? I think you can guess it: it’s private. You can’t just extract a million medical records without considering all the legal and ethical disasters you’d cause.

The PROPS Framework

This is where a new framework called PROPS (Protected Pipelines) comes in. Introduced by Ari Juels (Cornell Tech), Farinaz Koushanfar (UCSD), and Laurence Moroney (former Google AI Lead), PROPS acts as a bridge between this sensitive data and the AI models that need it.

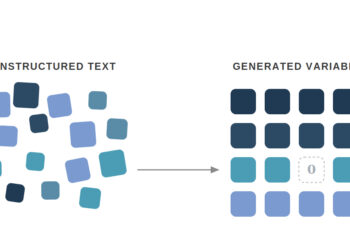

The brilliance of PROPS is that it doesn’t ask you to “hand over” your data. Instead, it uses Privacy-Preserving Oracles. Think of an oracle as a “trusted intermediary” that can look at your data, verify that it’s real, and then tell the AI model what it needs to know without ever showing the model the raw information.
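Here is a minimal sketch of the oracle idea, assuming a deliberately simplified trust model. Real protocols such as DECO are far more involved (they operate on TLS sessions and use zero-knowledge proofs); here an HMAC over a JSON claim stands in for that machinery, and `ORACLE_KEY`, `oracle_attest`, `verify_attestation`, and the `hba1c` field are all hypothetical. The oracle reads the raw record but releases only a signed yes/no answer to a question, and anyone holding the key can check that the answer was not altered.

```python
import hashlib
import hmac
import json

# Hypothetical key held by the oracle. A real deployment would use a
# public-key signature so that verifiers never hold the signing secret.
ORACLE_KEY = b"demo-oracle-key"


def oracle_attest(private_record: dict, field: str, threshold: float) -> dict:
    """The oracle reads the raw record but only releases a signed yes/no claim."""
    claim = {"field": field, "threshold": threshold,
             "result": private_record[field] >= threshold}
    payload = json.dumps(claim, sort_keys=True).encode()
    tag = hmac.new(ORACLE_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "tag": tag}  # no raw values leave the oracle


def verify_attestation(attestation: dict) -> bool:
    """The consumer (e.g. an AI pipeline) checks that the claim was not altered."""
    payload = json.dumps(attestation["claim"], sort_keys=True).encode()
    expected = hmac.new(ORACLE_KEY, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, attestation["tag"])


if __name__ == "__main__":
    record = {"patient_id": "p-001", "hba1c": 7.2}  # stays on the oracle side
    attestation = oracle_attest(record, "hba1c", 6.5)
    print(attestation["claim"], verify_attestation(attestation))
```

The model side learns "this value is at least 6.5, and the oracle vouches for it", never the value itself or the patient identifier.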

The PROPS concept can sound magical, since it could solve many of the data-availability problems AI models face today. But how exactly does it work? Take the example of a medical company that wants to train a diagnostic tool on real health records. Under the PROPS framework:

- Permission: As a user, you log into your own health portal and authorize a specific use of your data.

- The Oracle: Think of the Oracle as a digital notary. It goes to your private portal (like your hospital’s database) to verify that your data is real. Instead of copying your files, it simply tells the AI system: “I’ve seen the original documents, and I attest that they’re authentic.” It provides proof of the truth without ever handing over the private data itself. Tools for this already exist, like DECO, a protocol that lets users prove they retrieved a specific piece of data from a web server over a secure TLS channel.

- The Secure Enclave: This is a “black box” inside the computer’s hardware where the actual training happens. We put the AI model and your private data inside and “lock the door.” No human or developer can see what’s happening inside. The AI “studies” the data and leaves with only the model weights. The raw data stays locked inside until the session is over.

- The Result: The model trains on the data inside that box. Only the updated “weights” (what the model learned) come out. The raw data is never seen by human eyes.
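The four steps above can be mimicked end to end in a few lines of Python. This is only a simulation under stated assumptions: a plain function plays the role of the secure enclave (no hardware isolation is involved), an HMAC tag stands in for the oracle’s attestation, and `Consent`, `oracle_tag`, `enclave_train`, and the tiny linear model are hypothetical names chosen for illustration. Records that were altered after attestation, or that were donated for a different purpose, are dropped; only the learned weights are returned.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass

# Hypothetical shared key; a real oracle would sign with a private key instead.
ORACLE_KEY = b"demo-oracle-key"


@dataclass(frozen=True)
class Consent:
    user_id: str
    purpose: str  # the one use this contributor authorized


def oracle_tag(record: dict) -> str:
    """'Notary' step: bind a tag to the record as the oracle saw it at the source."""
    payload = json.dumps(record, sort_keys=True).encode()
    return hmac.new(ORACLE_KEY, payload, hashlib.sha256).hexdigest()


def enclave_train(records, tags, consents, purpose, epochs=2000, lr=0.5):
    """Simulated enclave: raw records go in, only model weights come out."""
    usable = []
    for rec, tag, consent in zip(records, tags, consents):
        authentic = hmac.compare_digest(tag, oracle_tag(rec))
        if authentic and consent.purpose == purpose:  # authenticity + permission
            usable.append((rec["x"], rec["y"]))
    if not usable:
        raise ValueError("no authenticated, consented records")
    w, b = 0.0, 0.0  # tiny linear model: y ≈ w * x + b
    n = len(usable)
    for _ in range(epochs):  # full-batch gradient descent on a least-squares loss
        gw = sum((w * x + b - y) * x for x, y in usable) / n
        gb = sum((w * x + b - y) for x, y in usable) / n
        w, b = w - lr * gw, b - lr * gb
    return {"w": w, "b": b, "n_used": n}  # the only thing that leaves the "enclave"


if __name__ == "__main__":
    # Permission: five contributors opt in for one specific purpose.
    records = [{"x": x, "y": 2 * x + 1} for x in (0.0, 0.25, 0.5, 0.75, 1.0)]
    consents = [Consent(f"user-{i}", "diagnostics") for i in range(5)]
    tags = [oracle_tag(r) for r in records]  # Oracle: attest at the source
    # A record edited after attestation fails the tag check and is ignored.
    forged = {"x": 0.5, "y": 2.0}
    tags.append(oracle_tag(forged))
    forged["y"] = 100.0
    records.append(forged)
    consents.append(Consent("user-5", "diagnostics"))
    print(enclave_train(records, tags, consents, purpose="diagnostics"))
```

In a real PROPS deployment the isolation would come from hardware, not from a function boundary; the sketch only shows which checks happen where and what is allowed to leave.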

The contributor knows exactly what they’re agreeing to, and they can be rewarded for participating in a way that’s calibrated to how valuable their specific data actually is. It’s a genuinely different relationship between data owners and AI systems.

But why bother with this instead of synthetic data?

Some might ask: “Why bother with this complex setup when we can just generate synthetic data?”

The answer is that synthetic data is a diversity killer. A generator learns the bulk of a distribution far better than its edges, so synthetic data generation tends to reinforce the middle of the bell curve. If you have a rare medical condition that affects only 0.01% of the population, a synthetic data generator will likely smooth you out as “noise.”
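A minimal sketch of this “smoothing out” effect, under loudly simplified assumptions: the “generator” is a single Gaussian fitted to the data (far cruder than GANs, diffusion models, or LLMs), and the lab-value feature, the cut-off of 8, and the function names are hypothetical. The real population contains exactly 10 rare cases out of 100,000 (0.01%); a synthetic sample of the same size is then drawn from the fitted bell curve, which assigns essentially zero probability to values that far from the mean.

```python
from __future__ import annotations

import math
import random
import statistics


def real_population(n_common: int = 99_990, n_rare: int = 10, seed: int = 1) -> list[float]:
    """Toy lab values: most people cluster near 0; a 0.01% rare group sits at 10."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(n_common)] + [10.0] * n_rare


def gaussian_synthesizer(data: list[float], n: int, seed: int = 2) -> list[float]:
    """Deliberately simple generator: fit one Gaussian to the data, then sample it."""
    mu = statistics.fmean(data)
    sigma = math.sqrt(statistics.fmean([(v - mu) ** 2 for v in data]))
    rng = random.Random(seed)
    return [rng.gauss(mu, sigma) for _ in range(n)]


if __name__ == "__main__":
    real = real_population()
    synthetic = gaussian_synthesizer(real, len(real))
    print("rare cases (value > 8) in real data:     ", sum(v > 8 for v in real))
    print("rare cases (value > 8) in synthetic data:", sum(v > 8 for v in synthetic))
```

More expressive generators do much better than a single Gaussian, but they still have very few examples from which to learn the rare tail.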

Models trained on synthetic data become progressively worse at serving outliers. PROPS addresses this by creating a secure way for real people with rare conditions or unique backgrounds to “opt in.” It turns data sharing from a privacy risk into a “data marketplace,” where valuable data gets the compensation it deserves.

It’s not just about training: inference matters too

Most discussions focus on training, but PROPS has an equally interesting application on the inference side.

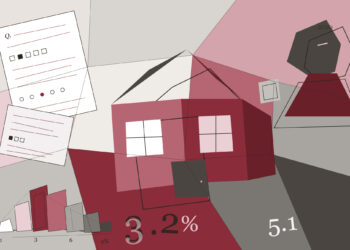

For example, getting a loan today involves a lot of document submission: bank statements, pay stubs, and tax returns. For a PROPS-based system, the authors suggest using a Loan Decision Model (LDM):

- You authorize the LDM to talk directly to your bank.

- The bank confirms your balance through a privacy-preserving oracle.

- The LDM decides.

- The result? The lender gets a verified “Yes” or “No” without ever touching your private documents. This removes the risk of those documents leaking and makes it nearly impossible to submit fraudulent, photoshopped documents.
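The loan flow above can be sketched in Python as follows. This is an illustration, not the paper’s design: the bank-side oracle is simulated with an HMAC over a JSON claim, and `BANK_ORACLE_KEY`, `bank_oracle`, `loan_decision_model`, and the “attested balance of at least 20% of the loan” policy are all hypothetical. The lender only ever sees a signed predicate (“the balance is at least X”), and an edited claim fails verification, which is what makes doctored evidence useless.

```python
import hashlib
import hmac
import json

# Hypothetical key shared by the bank's oracle and the lender's verifier.
BANK_ORACLE_KEY = b"demo-bank-oracle-key"


def bank_oracle(account_balance: float, min_balance: float) -> dict:
    """Runs on the bank side: attests a predicate, never the balance itself."""
    claim = {"statement": "balance_at_least", "amount": min_balance,
             "holds": account_balance >= min_balance}
    payload = json.dumps(claim, sort_keys=True).encode()
    return {"claim": claim,
            "tag": hmac.new(BANK_ORACLE_KEY, payload, hashlib.sha256).hexdigest()}


def loan_decision_model(attestation: dict, requested: float) -> str:
    """Toy LDM: trusts only attested claims; a tampered claim is rejected outright."""
    claim = attestation["claim"]
    payload = json.dumps(claim, sort_keys=True).encode()
    expected = hmac.new(BANK_ORACLE_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, attestation["tag"]):
        return "Rejected: unverifiable evidence"
    # Hypothetical policy: attested balance must cover at least 20% of the loan.
    if claim["holds"] and 5 * claim["amount"] >= requested:
        return "Yes"
    return "No"


if __name__ == "__main__":
    honest = bank_oracle(account_balance=12_000, min_balance=10_000)
    print(loan_decision_model(honest, requested=50_000))
    doctored = bank_oracle(account_balance=3_000, min_balance=10_000)
    doctored["claim"]["holds"] = True  # the applicant "photoshops" the claim
    print(loan_decision_model(doctored, requested=50_000))
```

Note that the lender never learns the actual balance (12,000 in the first case), only that it clears the threshold it asked about.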

What’s actually stopping this from happening in 2026?

It simply comes down to scale and infrastructure.

The most robust version of PROPS requires training to happen inside a hardware-backed secure enclave (like Intel SGX or NVIDIA’s H100 TEEs). These work well at small scale, but getting them to work for the massive GPU clusters needed for frontier LLMs is still an open engineering problem: it requires huge clusters to work in perfect, encrypted sync.

The researchers are clear: PROPS isn’t a finished product yet. It’s a persuasive proof of concept. However, a lighter-weight version is deployable today. Even without full hardware guarantees, you can build systems that give users meaningful assurance, which is already an improvement over asking someone to email you a PDF.

My Own Final Thoughts

PROPS isn’t really a “new” technology; it’s a new application of existing tools. Privacy-preserving oracles have been used in the blockchain and Web3 space (Chainlink, for example) for years. The insight here is recognizing that the same tools can solve the AI data crisis.

The “data crisis” isn’t a lack of information; it’s a lack of trust. We have more than enough data to build the next generation of AI, but it’s locked behind the doors of the Deep Web. The snake doesn’t have to eat its tail; it just needs to find a better garden.

👉 LinkedIn: Sabrine Bendimerad

👉 Medium: https://medium.com/@sabrine.bendimerad1

👉 Instagram: https://tinyurl.com/datailearn