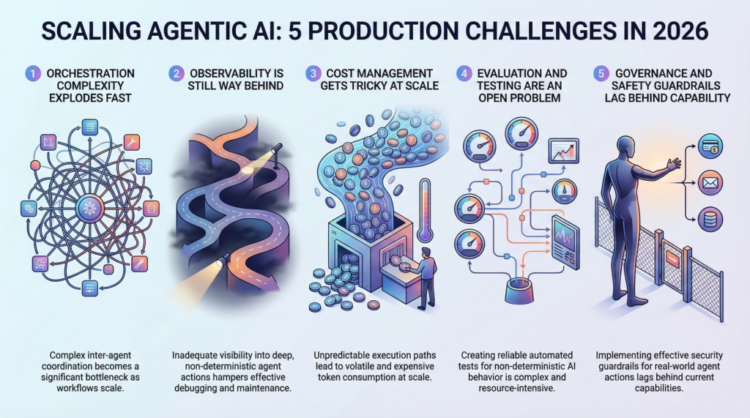

In this article, you’ll learn five major challenges teams face when scaling agentic AI systems from prototype to production in 2026.

Topics we will cover include:

- Why orchestration complexity grows rapidly in multi-agent systems.

- How observability, evaluation, and cost control remain difficult in production environments.

- Why governance and safety guardrails are becoming essential as agentic systems take real-world actions.

Let’s not waste any more time.

5 Production Scaling Challenges for Agentic AI in 2026

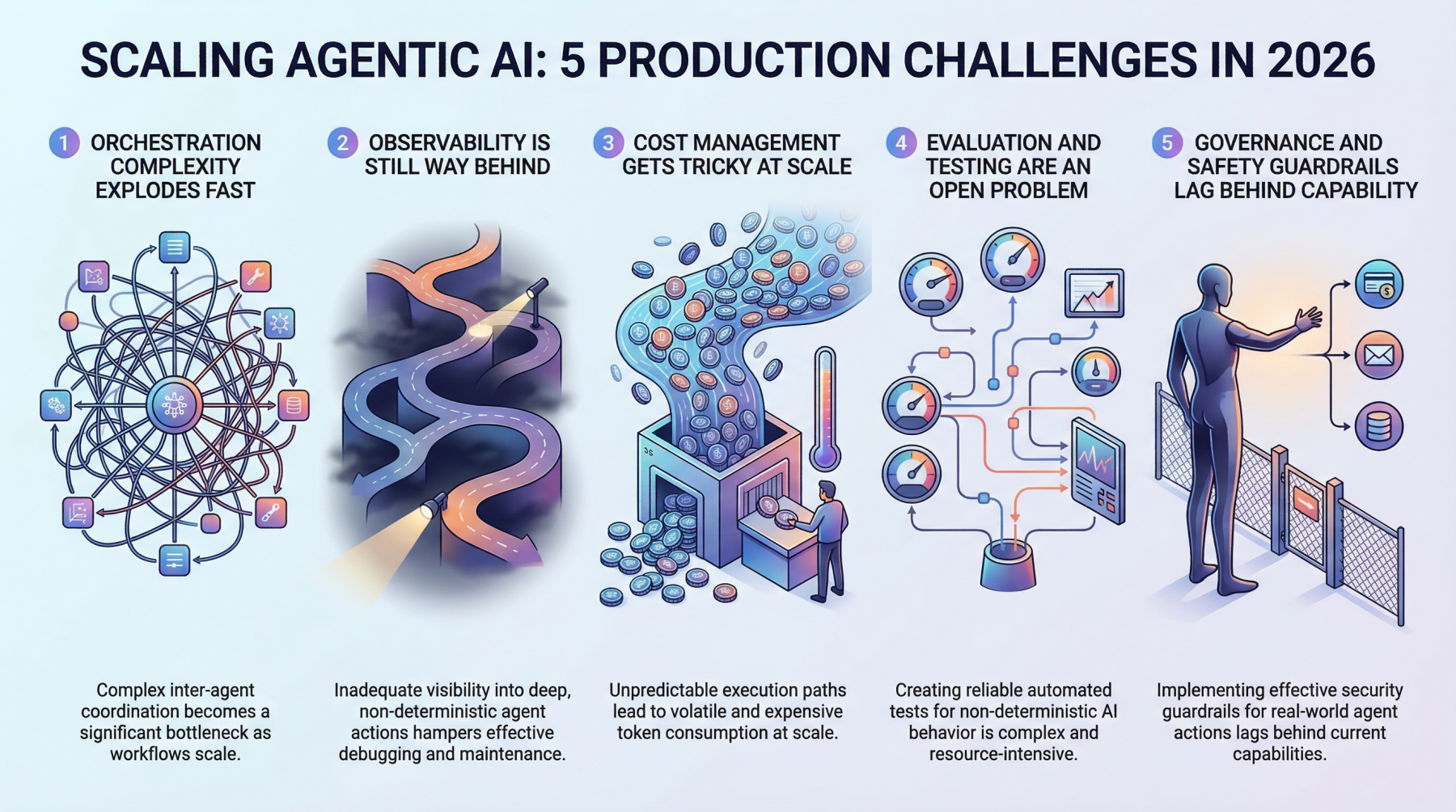

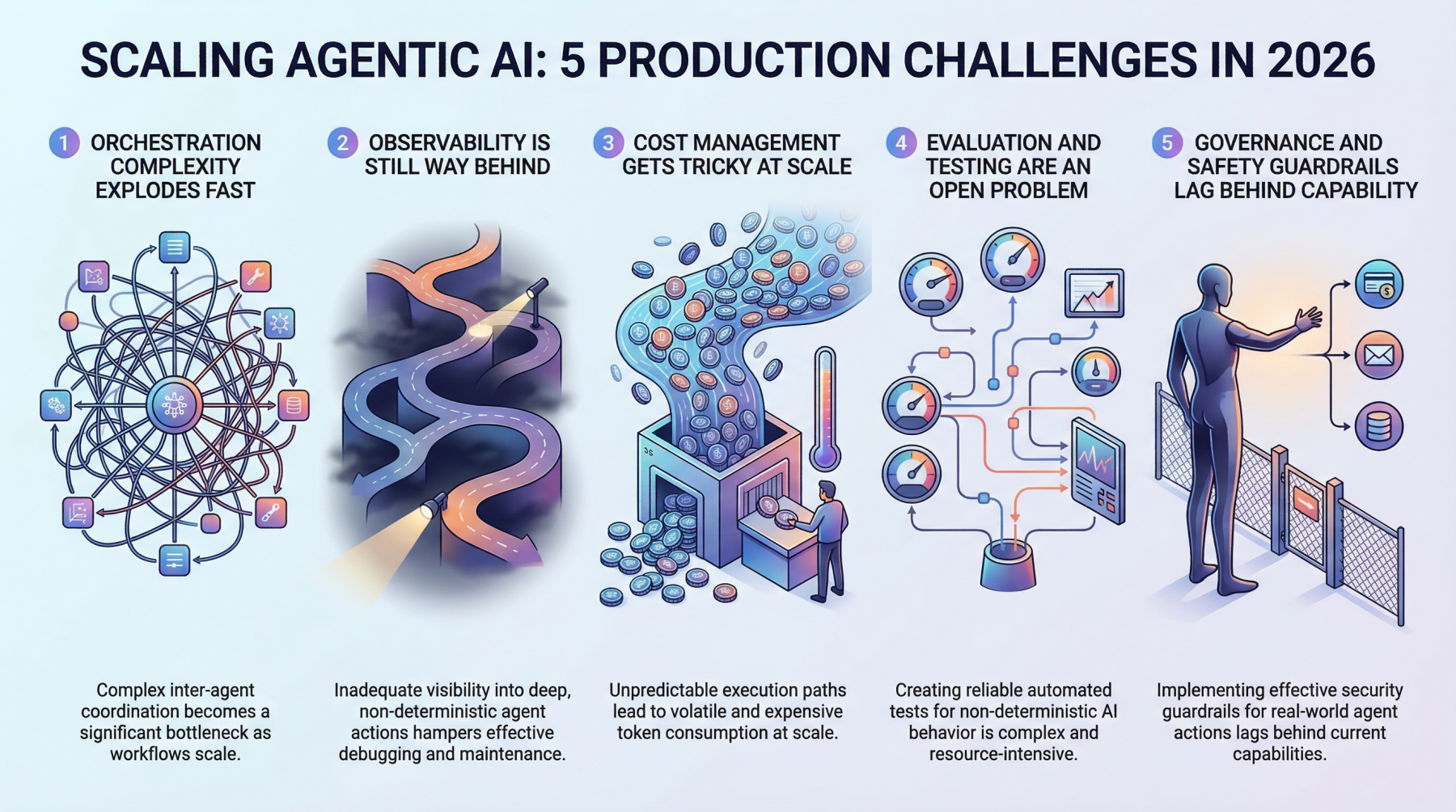

Image by Editor

Introduction

Everyone’s building agentic AI systems right now, for better or for worse. The demos look incredible, the prototypes feel magical, and the pitch decks practically write themselves.

But here’s what nobody’s tweeting about: getting these things to actually work at scale, in production, with real users and real stakes, is a completely different game. The gap between a slick demo and a reliable production system has always existed in machine learning, but agentic AI stretches it wider than anything we’ve seen before.

These systems make decisions, take actions, and chain together complex workflows autonomously. That’s powerful, and it’s also terrifying when things go sideways at scale. So let’s talk about the five biggest headaches teams are running into as they try to scale agentic AI in 2026.

1. Orchestration Complexity Explodes Fast

When you’ve got a single agent handling a narrow task, orchestration feels manageable. You define a workflow, set some guardrails, and things mostly behave. But production systems rarely stay that simple. The moment you introduce multi-agent architectures in which agents delegate to other agents, retry failed steps, or dynamically choose which tools to call, you’re dealing with orchestration complexity that grows almost exponentially.

Teams are finding that the coordination overhead between agents becomes the bottleneck, not the individual model calls. You’ve got agents waiting on other agents, race conditions popping up in async pipelines, and cascading failures that are genuinely hard to reproduce in staging environments. Traditional workflow engines weren’t designed for this level of dynamic decision-making, and most teams end up building custom orchestration layers that quickly become the hardest part of the entire stack to maintain.

The real kicker is that these systems behave differently under load. An orchestration pattern that works beautifully at 100 requests per minute can completely collapse at 10,000. Debugging that gap requires a kind of systems thinking that most machine learning teams are still developing.
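One common way to keep agents from piling up behind each other is to cap concurrency and put a timeout on every call. The sketch below is illustrative only: `call_agent`, `run_bounded`, the agent names, and the limits are all hypothetical stand-ins, and a real orchestrator would also need retries and backpressure.

```python
import asyncio


async def call_agent(name: str, delay: float) -> str:
    """Hypothetical stand-in for a real agent call; sleeps to simulate latency."""
    await asyncio.sleep(delay)
    return f"{name}: done"


async def run_bounded(tasks, max_concurrent=2, timeout_s=0.05):
    """Run agent calls with a concurrency cap and a per-call timeout."""
    gate = asyncio.Semaphore(max_concurrent)

    async def guarded(name, delay):
        async with gate:  # at most max_concurrent calls are in flight
            try:
                return await asyncio.wait_for(call_agent(name, delay), timeout_s)
            except asyncio.TimeoutError:
                # Fail this step in isolation instead of stalling other agents.
                return f"{name}: timed out"

    # gather returns results in the same order as the inputs.
    return await asyncio.gather(*(guarded(n, d) for n, d in tasks))


results = asyncio.run(
    run_bounded([("planner", 0.01), ("researcher", 0.01), ("writer", 1.0)])
)
print(results)
```

Here the slow `writer` call is cut off at the timeout while the other two complete, so one stuck agent does not hold the whole request hostage.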

2. Observability Is Still Way Behind

You can’t fix what you can’t see, and right now, most teams can’t see nearly enough of what their agentic systems are doing in production. Traditional machine learning monitoring tracks things like latency, throughput, and model accuracy. Those metrics still matter, but they barely scratch the surface of agentic workflows.

When an agent takes a 12-step journey to answer a user query, you need to understand every decision point along the way. Why did it choose Tool A over Tool B? Why did it retry step 4 three times? Why did the final output completely miss the mark, despite every intermediate step looking fine? The tracing infrastructure for this kind of deep observability is still immature. Most teams cobble together some combination of LangSmith, custom logging, and a lot of hope.
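As a rough illustration of the "custom logging" half of that combination, the sketch below records one structured entry per step, including the tool chosen, the stated reason, and the attempt number. The `Trace` and `StepRecord` classes and the tool names are hypothetical, not part of LangSmith or any other library.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field


@dataclass
class StepRecord:
    step: int
    tool: str
    reason: str        # why the agent picked this tool
    attempt: int       # 1 for the first try, 2+ for retries
    ok: bool
    duration_ms: float


@dataclass
class Trace:
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    steps: list = field(default_factory=list)

    def record(self, step, tool, reason, attempt, fn):
        """Run fn, capture success or failure, and append one step record."""
        start = time.perf_counter()
        try:
            result, ok = fn(), True
        except Exception as exc:
            result, ok = repr(exc), False
        elapsed_ms = (time.perf_counter() - start) * 1000
        self.steps.append(StepRecord(step, tool, reason, attempt, ok, elapsed_ms))
        return result, ok

    def to_json(self) -> str:
        return json.dumps(asdict(self))


def flaky_fetch():
    raise TimeoutError("upstream timeout")


trace = Trace()
trace.record(1, "search", "query mentions recent events", 1, lambda: "3 hits")
trace.record(2, "fetch_page", "top hit needs full text", 1, flaky_fetch)
trace.record(2, "fetch_page", "retry after timeout", 2, lambda: "page body")

retries = sum(1 for s in trace.steps if s.attempt > 1)
print(trace.to_json())
```

With entries like these, questions such as "why did it retry step 2?" become a filter over stored records rather than a guess.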

What makes it harder is that agentic behavior is non-deterministic by nature. The same input can produce wildly different execution paths, which means you can’t just snapshot a failure and replay it reliably. Building robust observability for systems that are inherently unpredictable remains one of the biggest unsolved problems in the space.

3. Cost Management Gets Tricky at Scale

Here’s something that catches a lot of teams off guard: agentic systems are expensive to run. Each agent action typically involves multiple LLM calls, and when agents are chaining together dozens of steps per request, the token costs add up shockingly fast. A workflow that costs $0.15 per execution sounds fine until you’re processing 500,000 requests a day, at which point it comes to $75,000 a day.

Smart teams are getting creative with cost optimization. They’re routing simpler sub-tasks to smaller, cheaper models while reserving the heavy hitters for complex reasoning steps. They’re caching intermediate results aggressively and building kill switches that terminate runaway agent loops before they burn through budget. But there’s a constant tension between cost efficiency and output quality, and finding the right balance requires ongoing experimentation.
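A minimal sketch of two of those ideas, model routing and a kill switch, might look like the following. The model names, per-call prices, and the `RunBudget` class are assumptions made up for illustration; real pricing is per token and varies by provider.

```python
# Hypothetical flat per-call prices; real pricing is per token.
PRICE_PER_CALL_USD = {"small-model": 0.002, "large-model": 0.03}


class BudgetExceeded(RuntimeError):
    """Raised when a run would exceed its spend or step limit."""


class RunBudget:
    def __init__(self, max_usd: float, max_steps: int):
        self.max_usd = max_usd
        self.max_steps = max_steps
        self.spent = 0.0
        self.steps = 0

    def charge(self, usd: float) -> None:
        # Check before spending so the over-budget call is never made.
        if self.spent + usd > self.max_usd or self.steps + 1 > self.max_steps:
            raise BudgetExceeded(f"stopped at ${self.spent:.3f}, {self.steps} steps")
        self.spent += usd
        self.steps += 1


def pick_model(needs_reasoning: bool) -> str:
    """Route simple sub-tasks to the cheaper model."""
    return "large-model" if needs_reasoning else "small-model"


def run_agent(step_flags, budget: RunBudget):
    """Each flag says whether a step needs heavy reasoning; stop when over budget."""
    completed = 0
    try:
        for needs_reasoning in step_flags:
            budget.charge(PRICE_PER_CALL_USD[pick_model(needs_reasoning)])
            completed += 1  # the real model call would happen here
    except BudgetExceeded:
        return completed, "halted"
    return completed, "finished"


normal_run = run_agent([False, True, False], RunBudget(max_usd=0.10, max_steps=10))
runaway_run = run_agent([True] * 100, RunBudget(max_usd=0.10, max_steps=10))
print(normal_run, runaway_run)
```

The normal run finishes all three steps, while the runaway loop is halted after three large-model calls because a fourth would cross the $0.10 cap.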

The billing unpredictability is what really stresses out engineering leads. Unlike traditional APIs, where you can estimate costs fairly accurately, agentic systems have variable execution paths that make cost forecasting genuinely difficult. One edge case can trigger a chain of retries that costs 50 times more than the normal path.
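To see how much a rare expensive path can move the bill, here is a back-of-the-envelope calculation using the article’s $0.15 per execution, 500,000 requests a day, and 50x retry multiplier. The 1% edge-case rate is purely an assumption chosen for illustration.

```python
NORMAL_COST_USD = 0.15      # per execution, from the article
REQUESTS_PER_DAY = 500_000  # from the article
RETRY_MULTIPLIER = 50       # edge-case path costs 50x, from the article
EDGE_CASE_RATE = 0.01       # ASSUMPTION: 1% of requests hit the edge case


def expected_daily_cost(edge_case_rate: float) -> float:
    """Expected daily spend when a fraction of requests take the 50x path."""
    per_request = (
        (1 - edge_case_rate) * NORMAL_COST_USD
        + edge_case_rate * NORMAL_COST_USD * RETRY_MULTIPLIER
    )
    return per_request * REQUESTS_PER_DAY


baseline = expected_daily_cost(0.0)
with_tail = expected_daily_cost(EDGE_CASE_RATE)
print(f"baseline: ${baseline:,.0f}/day, with edge cases: ${with_tail:,.0f}/day")
```

Under that assumed rate, the expected spend rises from $75,000 to about $111,750 a day, roughly 49% higher, even though 99% of requests still cost the normal amount.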

4. Evaluation and Testing Are an Open Problem

How do you test a system that can take a different path every time it runs? That’s the question keeping machine learning engineers up at night. Traditional software testing assumes deterministic behavior, and traditional machine learning evaluation assumes a fixed input-output mapping. Agentic AI breaks both assumptions simultaneously.

Teams are experimenting with a range of approaches. Some are building LLM-as-a-judge pipelines in which a separate model evaluates the agent’s outputs. Others are developing scenario-based test suites that check for behavioral properties rather than exact outputs. A few are investing in simulation environments where agents can be stress-tested against thousands of synthetic scenarios before hitting production.
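The scenario-based idea can be sketched as follows: instead of asserting an exact output, run the agent many times and check invariants that must hold on every path. The `toy_refund_agent`, its tool names, and the $100 cap are hypothetical stand-ins for a real agent and its business rules.

```python
import random

REFUND_CAP_USD = 100.0  # hypothetical business rule


def toy_refund_agent(order_total: float, rng: random.Random) -> dict:
    """Stand-in for a non-deterministic agent: path and wording vary per run."""
    path = ["lookup_order"]
    if rng.random() < 0.5:
        path.append("check_policy")  # optional step, taken on some runs only
    path.append("issue_refund")
    message = rng.choice(["Refund issued.", "Your refund is on its way."])
    return {"path": path, "refund": min(order_total, REFUND_CAP_USD), "message": message}


def check_properties(result: dict, order_total: float) -> list:
    """Return a list of violated behavioral properties (empty means pass)."""
    failures = []
    path = result["path"]
    if result["refund"] > order_total:
        failures.append("refund exceeds order total")
    if result["refund"] > REFUND_CAP_USD:
        failures.append("refund exceeds cap")
    if "issue_refund" in path and (
        "lookup_order" not in path
        or path.index("lookup_order") > path.index("issue_refund")
    ):
        failures.append("refund issued before order lookup")
    return failures


# Run 200 synthetic scenarios; exact outputs differ, properties must not.
failures = []
for seed in range(200):
    rng = random.Random(seed)
    order_total = rng.uniform(1, 500)
    failures += check_properties(toy_refund_agent(order_total, rng), order_total)

# A deliberately bad result, to confirm the checks can actually fail.
bad = check_properties({"path": ["issue_refund"], "refund": 500.0, "message": ""}, 50.0)
print(len(failures), bad)
```

The same checks would apply unchanged if the toy function were swapped for a call to a real agent, which is what makes property-style tests tolerant of varying execution paths.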

But none of these approaches feels truly mature yet. The evaluation tooling is fragmented, benchmarks are inconsistent, and there’s no industry consensus on what “good” even looks like for a complex agentic workflow. Most teams end up relying heavily on human review, which clearly doesn’t scale.

5. Governance and Safety Guardrails Lag Behind Capability

Agentic AI systems can take real actions in the real world. They can send emails, modify databases, execute transactions, and interact with external services. The safety implications of that autonomy are significant, and governance frameworks haven’t kept pace with how quickly these capabilities are being deployed.

The challenge is implementing guardrails that are robust enough to prevent harmful actions without being so restrictive that they kill the usefulness of the agent. It’s a delicate balance, and most teams are learning through trial and error. Permission systems, action approval workflows, and scope limitations all add friction that can undermine the whole point of having an autonomous agent in the first place.
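One way to limit that friction is to gate only the risky actions and let everything else through. The sketch below combines an allowlist (scope limitation), a threshold that triggers human approval, and an audit trail. The action names, the $100 threshold, and the `review` function are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class Action:
    name: str
    amount_usd: float = 0.0


# Hypothetical policy: scope is an allowlist; large refunds need a human.
ALLOWED_ACTIONS = {"read_record", "send_email", "issue_refund"}
APPROVAL_THRESHOLD_USD = 100.0

audit_log = []  # every decision is recorded for later review


def review(action: Action) -> Decision:
    """Decide whether an agent action may run, needs sign-off, or is blocked."""
    if action.name not in ALLOWED_ACTIONS:
        decision = Decision.DENY
    elif action.name == "issue_refund" and action.amount_usd > APPROVAL_THRESHOLD_USD:
        decision = Decision.NEEDS_APPROVAL
    else:
        decision = Decision.ALLOW
    audit_log.append((action.name, action.amount_usd, decision.value))
    return decision


print(review(Action("read_record")))
print(review(Action("issue_refund", 250.0)))
print(review(Action("drop_table")))
```

Low-risk actions pass without delay, only the large refund is routed to a person, and the out-of-scope action is refused outright, with all three decisions left in the audit log.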

Regulatory pressure is mounting too. As agentic systems start making decisions that affect customers directly, questions about accountability, auditability, and compliance become urgent. Teams that aren’t thinking about governance now are going to hit painful walls when regulations catch up.

Final Thoughts

Agentic AI is genuinely transformative, but the path from prototype to production at scale is littered with challenges that the industry is still figuring out in real time.

The good news is that the ecosystem is maturing quickly. Better tooling, clearer patterns, and hard-won lessons from early adopters are making the path a little smoother every month.

If you’re scaling agentic systems right now, just know that the pain you’re feeling is universal. The teams that invest in solving these foundational problems early are the ones that will build systems that actually hold up when it matters.
