Picture by Editor

# Introduction

Artificial information, because the identify suggests, is created artificially somewhat than being collected from real-world sources. It appears like actual information however avoids privateness points and excessive information assortment prices. This lets you simply check software program and fashions whereas operating experiments to simulate efficiency after launch.

Whereas libraries like Faker, SDV, and SynthCity exist — and even giant language fashions (LLMs) are extensively used for producing artificial information — my focus on this article is to keep away from counting on these exterior libraries or AI instruments. As a substitute, you’ll discover ways to obtain the identical outcomes by writing your individual Python scripts. This gives a greater understanding of the right way to form a dataset and the way biases or errors are launched. We’ll begin with easy toy scripts to grasp the accessible choices. When you grasp these fundamentals, you’ll be able to comfortably transition to specialised libraries.

# 1. Producing Easy Random Information

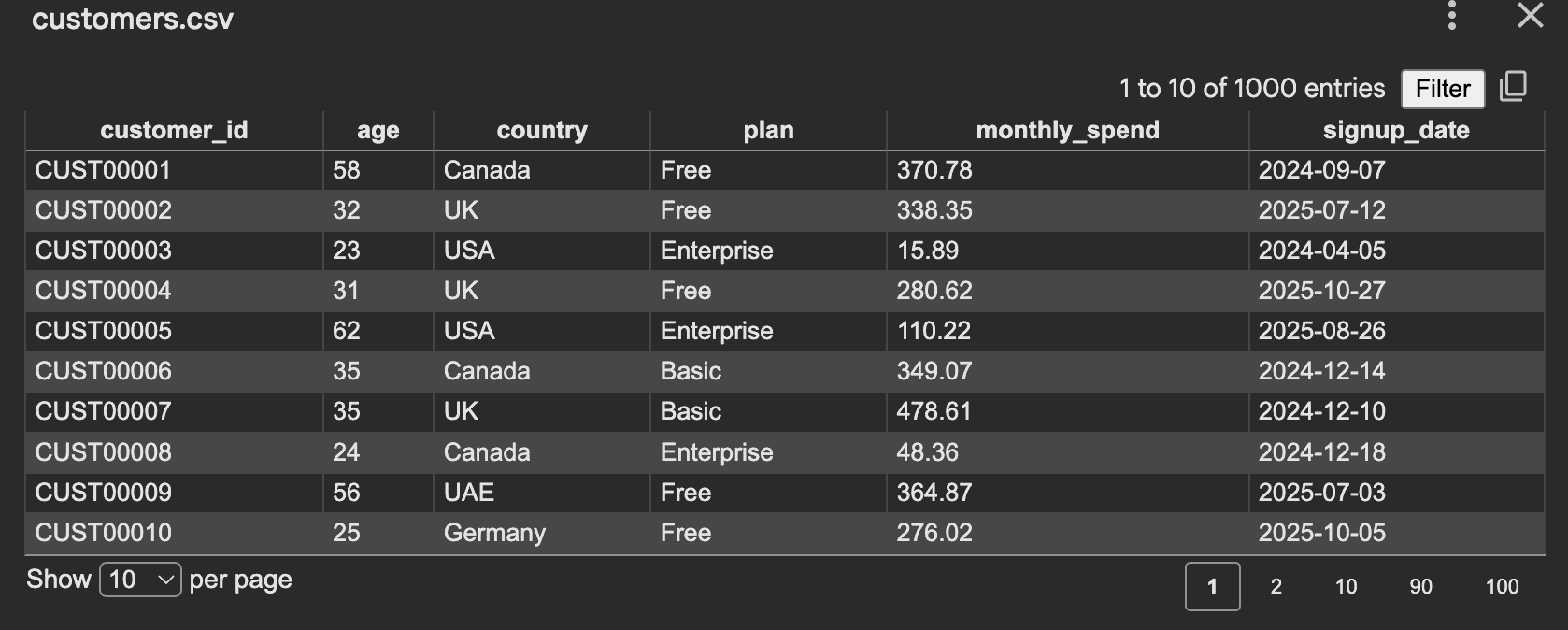

The best place to start out is with a desk. For instance, in the event you want a faux buyer dataset for an inner demo, you’ll be able to run a script to generate comma-separated values (CSV) information:

import csv

import random

from datetime import datetime, timedelta

random.seed(42)

international locations = ["Canada", "UK", "UAE", "Germany", "USA"]

plans = ["Free", "Basic", "Pro", "Enterprise"]

def random_signup_date():

begin = datetime(2024, 1, 1)

finish = datetime(2026, 1, 1)

delta_days = (finish - begin).days

return (begin + timedelta(days=random.randint(0, delta_days))).date().isoformat()

rows = []

for i in vary(1, 1001):

age = random.randint(18, 70)

nation = random.selection(international locations)

plan = random.selection(plans)

monthly_spend = spherical(random.uniform(0, 500), 2)

rows.append({

"customer_id": f"CUST{i:05d}",

"age": age,

"nation": nation,

"plan": plan,

"monthly_spend": monthly_spend,

"signup_date": random_signup_date()

})

with open("prospects.csv", "w", newline="", encoding="utf-8") as f:

author = csv.DictWriter(f, fieldnames=rows[0].keys())

author.writeheader()

author.writerows(rows)

print("Saved prospects.csv")

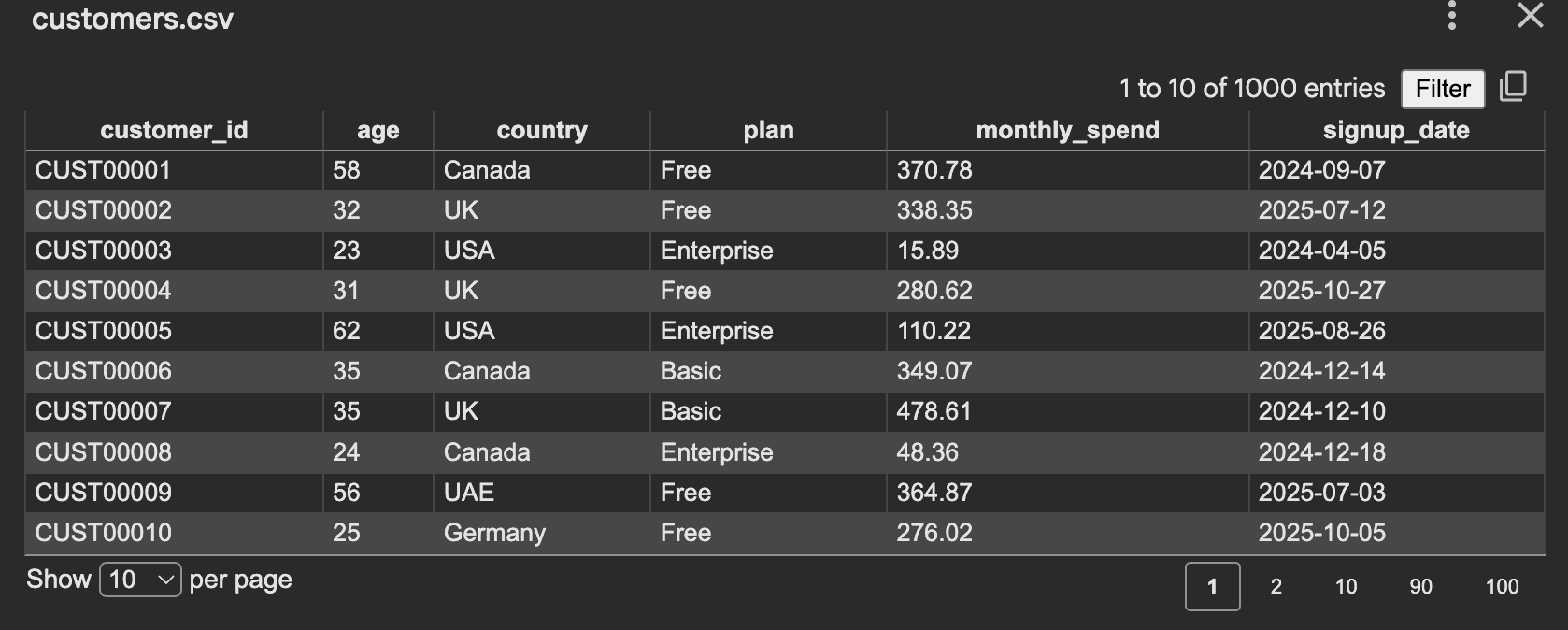

Output:

This script is simple: you outline fields, select ranges, and write rows. The random module helps integer era, floating-point values, random selection, and sampling. The csv module is designed to learn and write row-based tabular information. This type of dataset is appropriate for:

- Frontend demos

- Dashboard testing

- API growth

- Studying Structured Question Language (SQL)

- Unit testing enter pipelines

Nonetheless, there’s a main weak point to this strategy: every part is totally random. This usually leads to information that appears flat or unnatural. Enterprise prospects would possibly spend solely 2 {dollars}, whereas “Free” customers would possibly spend 400. Older customers behave precisely like youthful ones as a result of there isn’t any underlying construction.

In real-world situations, information hardly ever behaves this manner. As a substitute of producing values independently, we will introduce relationships and guidelines. This makes the dataset really feel extra sensible whereas remaining absolutely artificial. As an illustration:

- Enterprise prospects ought to virtually by no means have zero spend

- Spending ranges ought to depend upon the chosen plan

- Older customers would possibly spend barely extra on common

- Sure plans must be extra frequent than others

Let’s add these controls to the script:

import csv

import random

random.seed(42)

plans = ["Free", "Basic", "Pro", "Enterprise"]

def choose_plan():

roll = random.random()

if roll < 0.45:

return "Free"

if roll < 0.75:

return "Fundamental"

if roll < 0.93:

return "Professional"

return "Enterprise"

def generate_spend(age, plan):

if plan == "Free":

base = random.uniform(0, 10)

elif plan == "Fundamental":

base = random.uniform(10, 60)

elif plan == "Professional":

base = random.uniform(50, 180)

else:

base = random.uniform(150, 500)

if age >= 40:

base *= 1.15

return spherical(base, 2)

rows = []

for i in vary(1, 1001):

age = random.randint(18, 70)

plan = choose_plan()

spend = generate_spend(age, plan)

rows.append({

"customer_id": f"CUST{i:05d}",

"age": age,

"plan": plan,

"monthly_spend": spend

})

with open("controlled_customers.csv", "w", newline="", encoding="utf-8") as f:

author = csv.DictWriter(f, fieldnames=rows[0].keys())

author.writeheader()

author.writerows(rows)

print("Saved controlled_customers.csv")

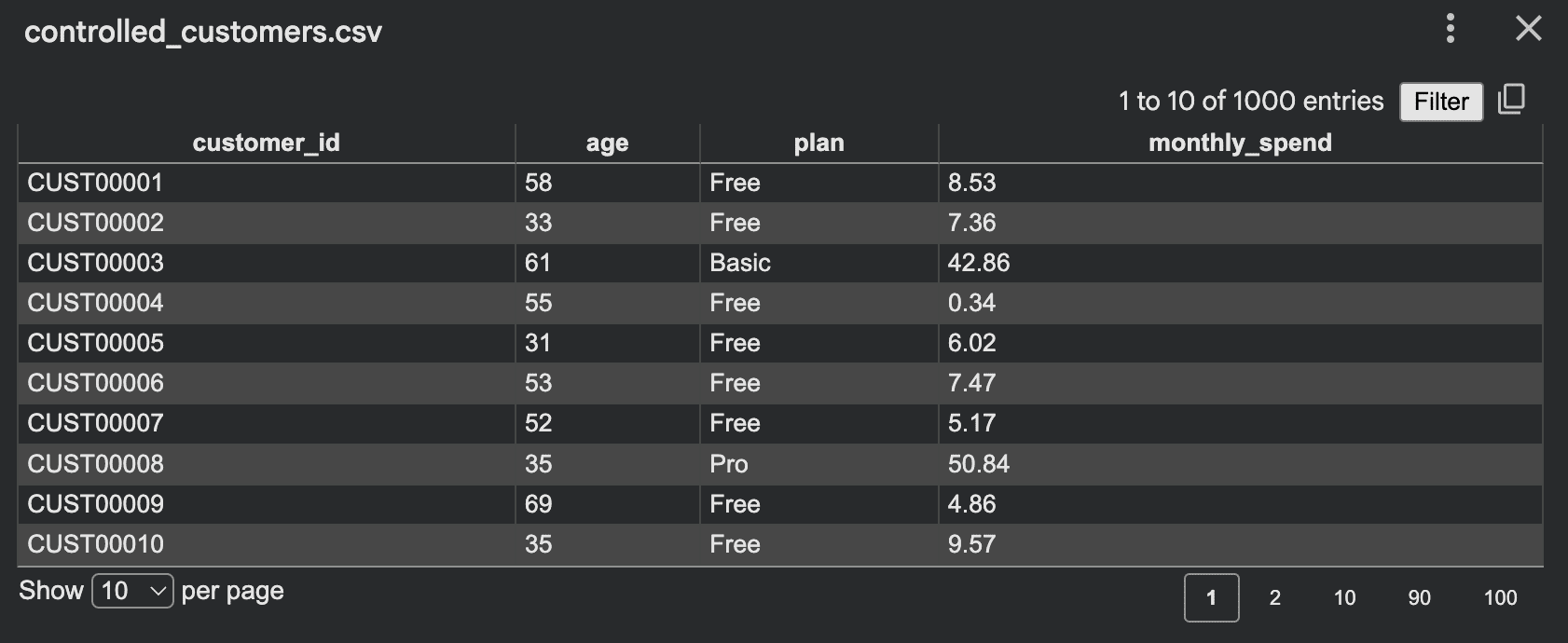

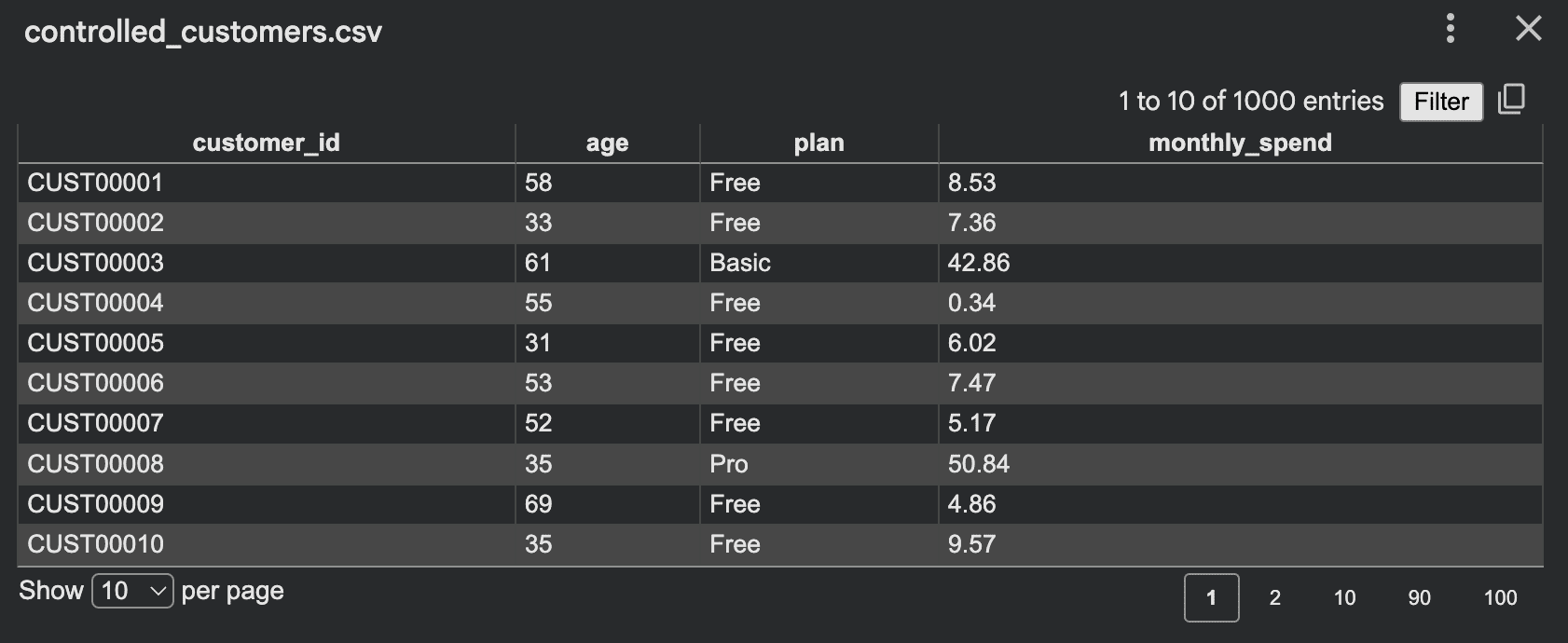

Output:

Now the dataset preserves significant patterns. Fairly than producing random noise, you might be simulating behaviors. Efficient controls could embrace:

- Weighted class choice

- Reasonable minimal and most ranges

- Conditional logic between columns

- Deliberately added uncommon edge circumstances

- Lacking values inserted at low charges

- Correlated options as an alternative of unbiased ones

# 2. Simulating Processes for Artificial Information

Simulation-based era is among the finest methods to create sensible artificial datasets. As a substitute of immediately filling columns, you simulate a course of. For instance, take into account a small warehouse the place orders arrive, inventory decreases, and low inventory ranges set off backorders.

import csv

import random

from datetime import datetime, timedelta

random.seed(42)

stock = {

"A": 120,

"B": 80,

"C": 50

}

rows = []

current_time = datetime(2026, 1, 1)

for day in vary(30):

for product in stock:

daily_orders = random.randint(0, 12)

for _ in vary(daily_orders):

qty = random.randint(1, 5)

earlier than = stock

if stock >= qty:

stock -= qty

standing = "fulfilled"

else:

standing = "backorder"

rows.append({

"time": current_time.isoformat(),

"product": product,

"qty": qty,

"stock_before": earlier than,

"stock_after": stock,

"standing": standing

})

if stock < 20:

restock = random.randint(30, 80)

stock += restock

rows.append({

"time": current_time.isoformat(),

"product": product,

"qty": restock,

"stock_before": stock - restock,

"stock_after": stock,

"standing": "restock"

})

current_time += timedelta(days=1)

with open("warehouse_sim.csv", "w", newline="", encoding="utf-8") as f:

author = csv.DictWriter(f, fieldnames=rows[0].keys())

author.writeheader()

author.writerows(rows)

print("Saved warehouse_sim.csv")

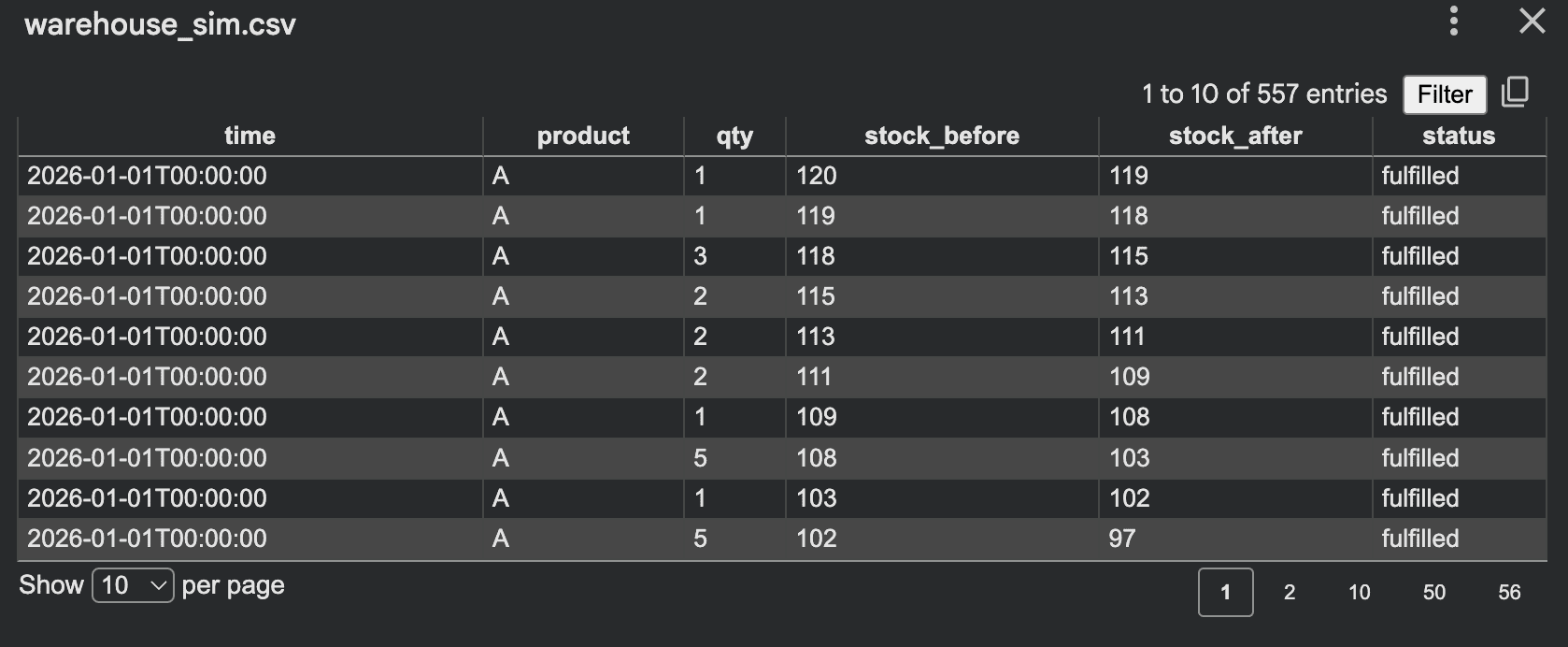

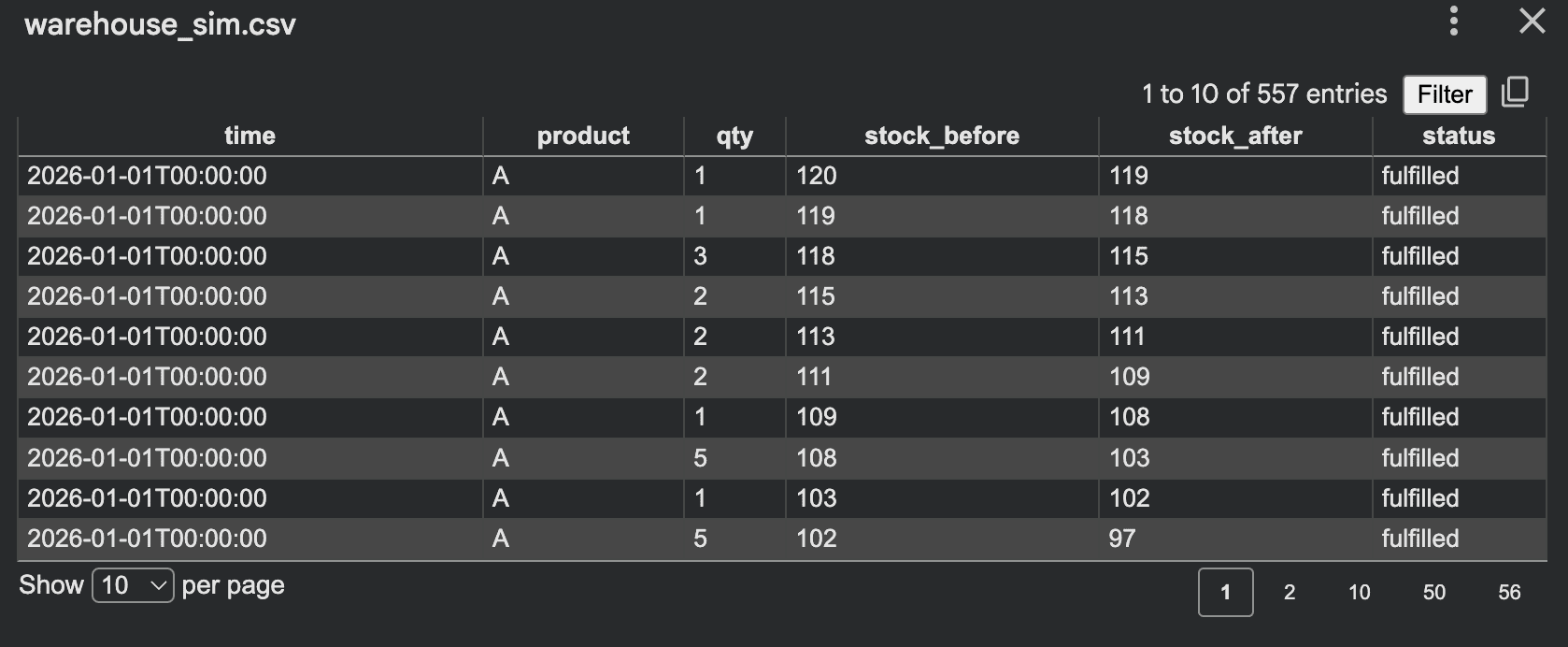

Output:

This technique is superb as a result of the information is a byproduct of system habits, which generally yields extra sensible relationships than direct random row era. Different simulation concepts embrace:

- Name middle queues

- Experience requests and driver matching

- Mortgage purposes and approvals

- Subscriptions and churn

- Affected person appointment flows

- Web site site visitors and conversion

# 3. Producing Time Collection Artificial Information

Artificial information is not only restricted to static tables. Many programs produce sequences over time, comparable to app site visitors, sensor readings, orders per hour, or server response occasions. Right here is a straightforward time sequence generator for hourly web site visits with weekday patterns.

import csv

import random

from datetime import datetime, timedelta

random.seed(42)

begin = datetime(2026, 1, 1, 0, 0, 0)

hours = 24 * 30

rows = []

for i in vary(hours):

ts = begin + timedelta(hours=i)

weekday = ts.weekday()

base = 120

if weekday >= 5:

base = 80

hour = ts.hour

if 8 <= hour <= 11:

base += 60

elif 18 <= hour <= 21:

base += 40

elif 0 <= hour <= 5:

base -= 30

visits = max(0, int(random.gauss(base, 15)))

rows.append({

"timestamp": ts.isoformat(),

"visits": visits

})

with open("traffic_timeseries.csv", "w", newline="", encoding="utf-8") as f:

author = csv.DictWriter(f, fieldnames=["timestamp", "visits"])

author.writeheader()

author.writerows(rows)

print("Saved traffic_timeseries.csv")

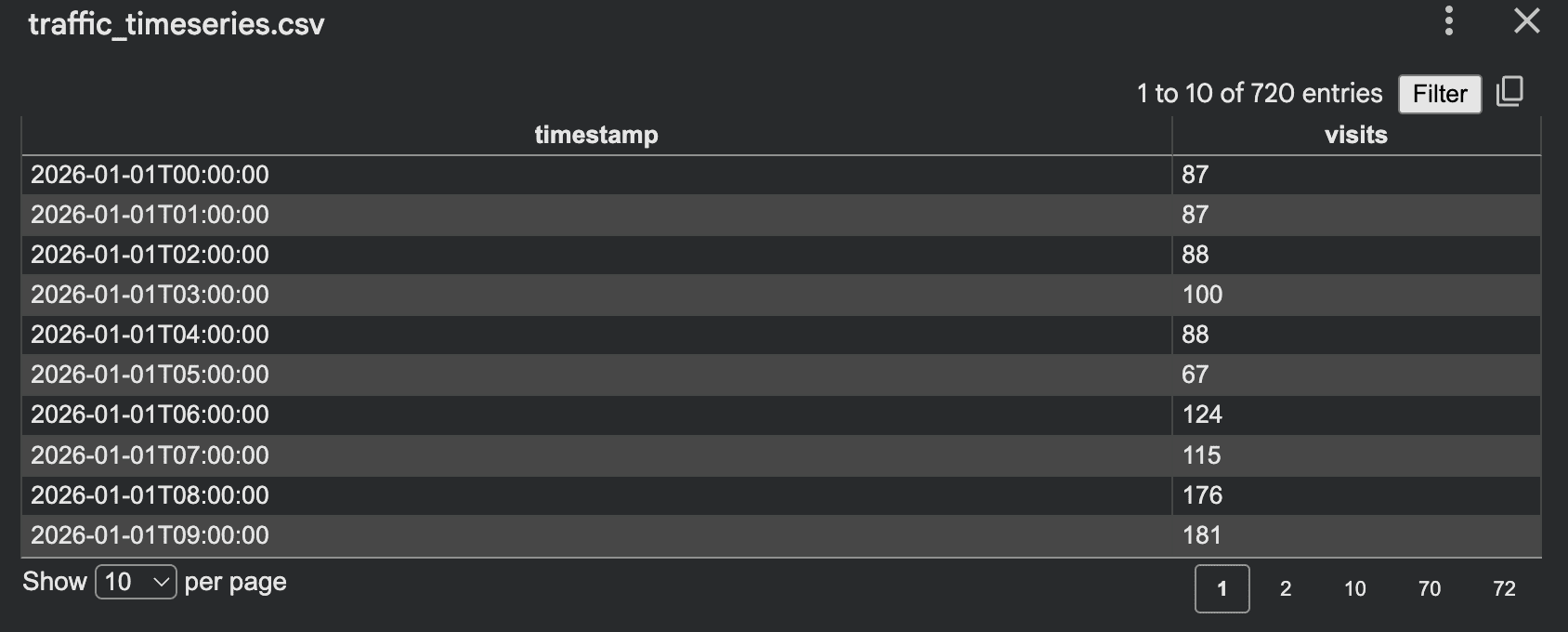

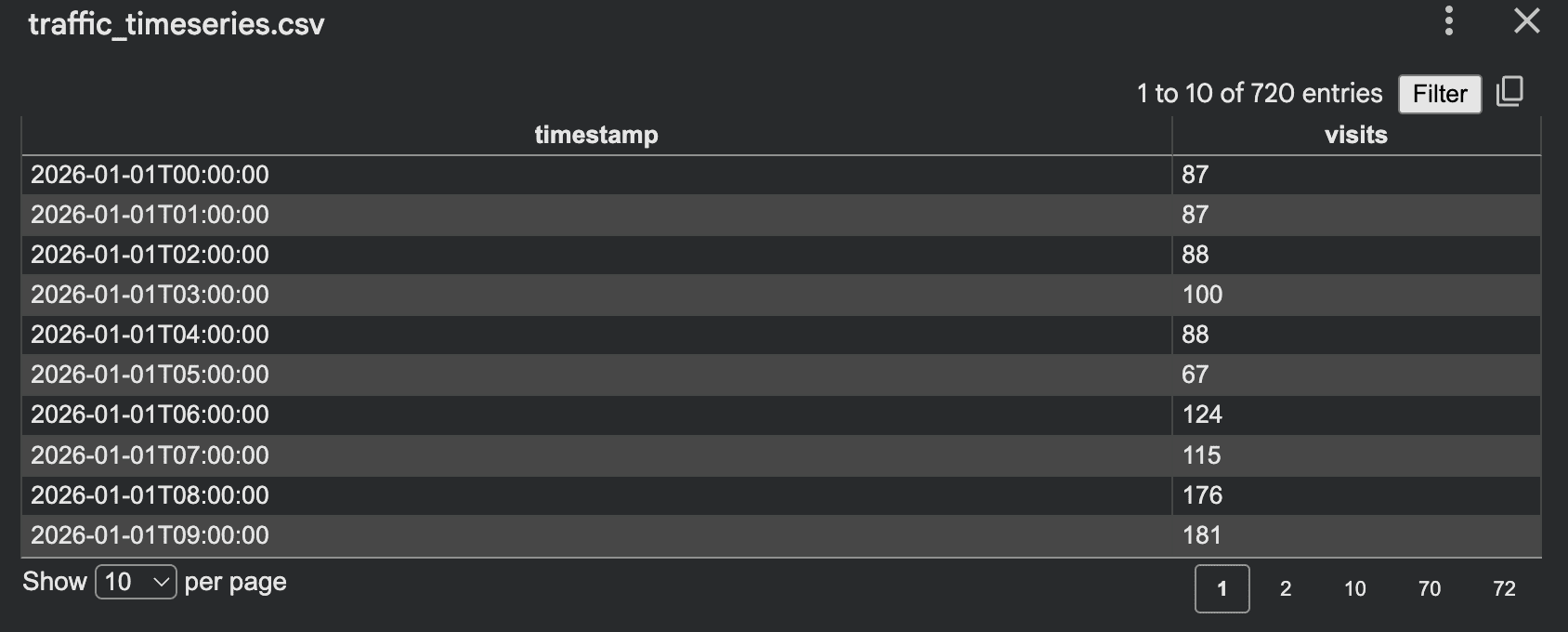

Output:

This strategy works properly as a result of it incorporates traits, noise, and cyclic habits whereas remaining straightforward to clarify and debug.

# 4. Creating Occasion Logs

Occasion logs are one other helpful script fashion, preferrred for product analytics and workflow testing. As a substitute of 1 row per buyer, you create one row per motion.

import csv

import random

from datetime import datetime, timedelta

random.seed(42)

occasions = ["signup", "login", "view_page", "add_to_cart", "purchase", "logout"]

rows = []

begin = datetime(2026, 1, 1)

for user_id in vary(1, 201):

event_count = random.randint(5, 30)

current_time = begin + timedelta(days=random.randint(0, 10))

for _ in vary(event_count):

occasion = random.selection(occasions)

if occasion == "buy" and random.random() < 0.6:

worth = spherical(random.uniform(10, 300), 2)

else:

worth = 0.0

rows.append({

"user_id": f"USER{user_id:04d}",

"event_time": current_time.isoformat(),

"event_name": occasion,

"event_value": worth

})

current_time += timedelta(minutes=random.randint(1, 180))

with open("event_log.csv", "w", newline="", encoding="utf-8") as f:

author = csv.DictWriter(f, fieldnames=rows[0].keys())

author.writeheader()

author.writerows(rows)

print("Saved event_log.csv")

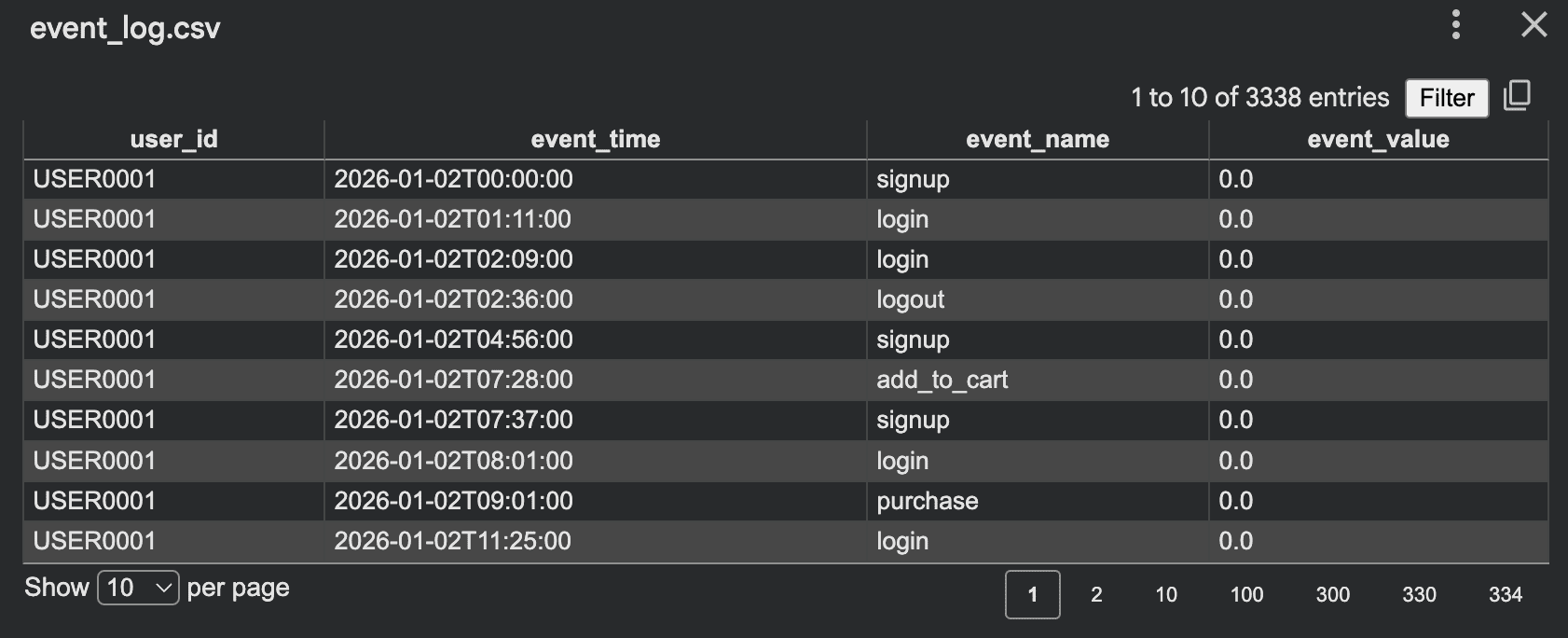

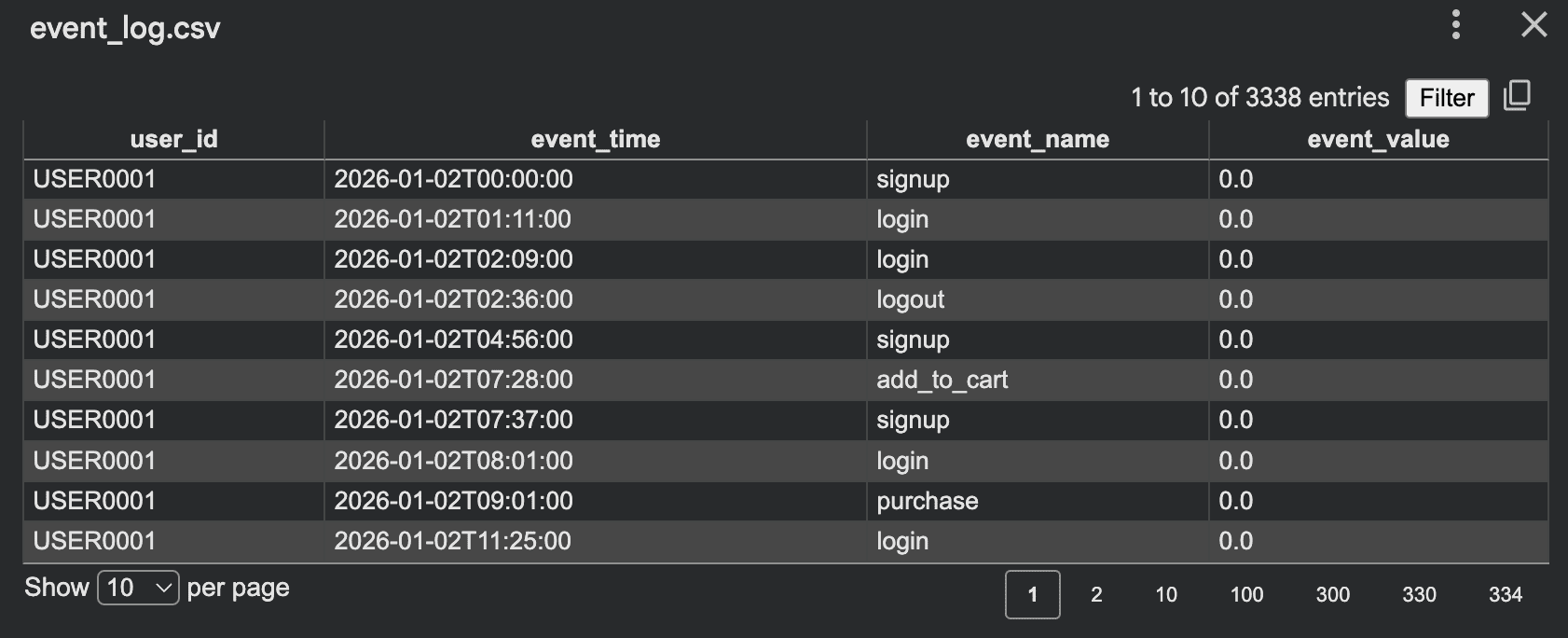

Output:

This format is helpful for:

- Funnel evaluation

- Analytics pipeline testing

- Enterprise intelligence (BI) dashboards

- Session reconstruction

- Anomaly detection experiments

A helpful approach right here is to make occasions depending on earlier actions. For instance, a purchase order ought to usually observe a login or a web page view, making the artificial log extra plausible.

# 5. Producing Artificial Textual content Information with Templates

Artificial information can also be priceless for pure language processing (NLP). You don’t all the time want an LLM to start out; you’ll be able to construct efficient textual content datasets utilizing templates and managed variation. For instance, you’ll be able to create help ticket coaching information:

import json

import random

random.seed(42)

points = [

("billing", "I was charged twice for my subscription"),

("login", "I cannot log into my account"),

("shipping", "My order has not arrived yet"),

("refund", "I want to request a refund"),

]

tones = ["Please help", "This is urgent", "Can you check this", "I need support"]

data = []

for _ in vary(100):

label, message = random.selection(points)

tone = random.selection(tones)

textual content = f"{tone}. {message}."

data.append({

"textual content": textual content,

"label": label

})

with open("support_tickets.jsonl", "w", encoding="utf-8") as f:

for merchandise in data:

f.write(json.dumps(merchandise) + "n")

print("Saved support_tickets.jsonl")

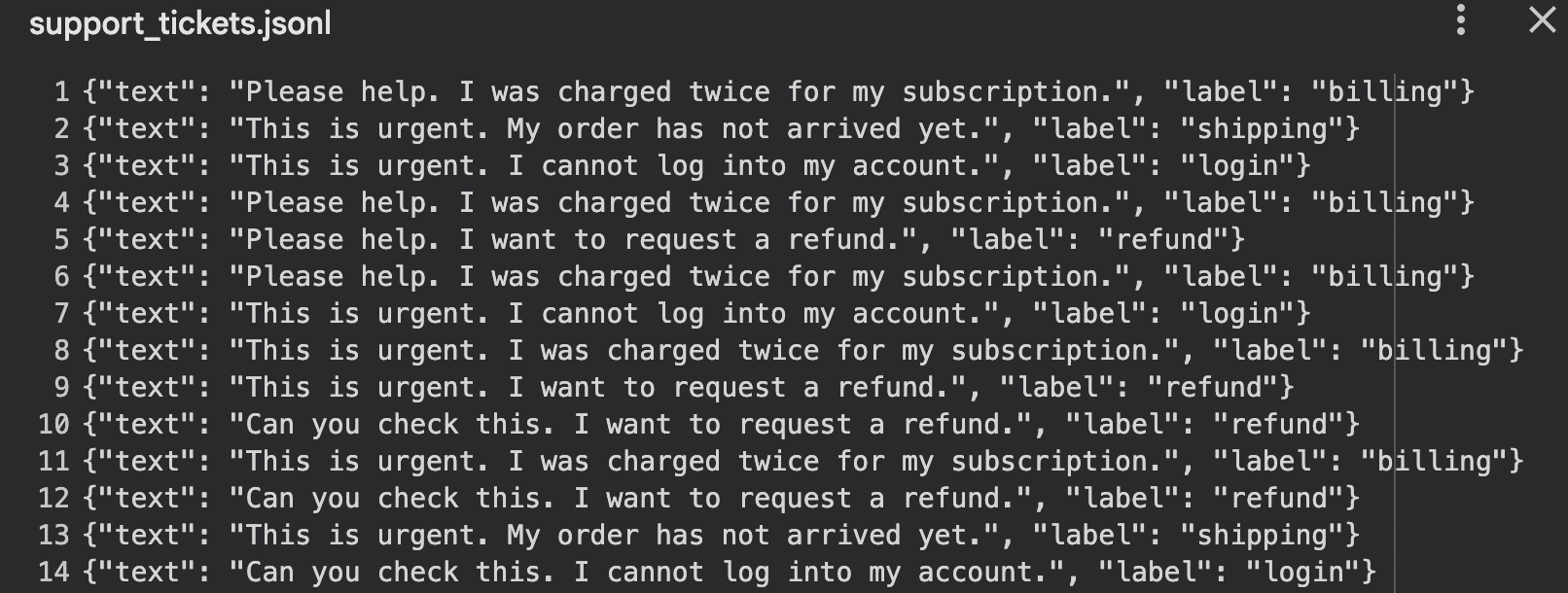

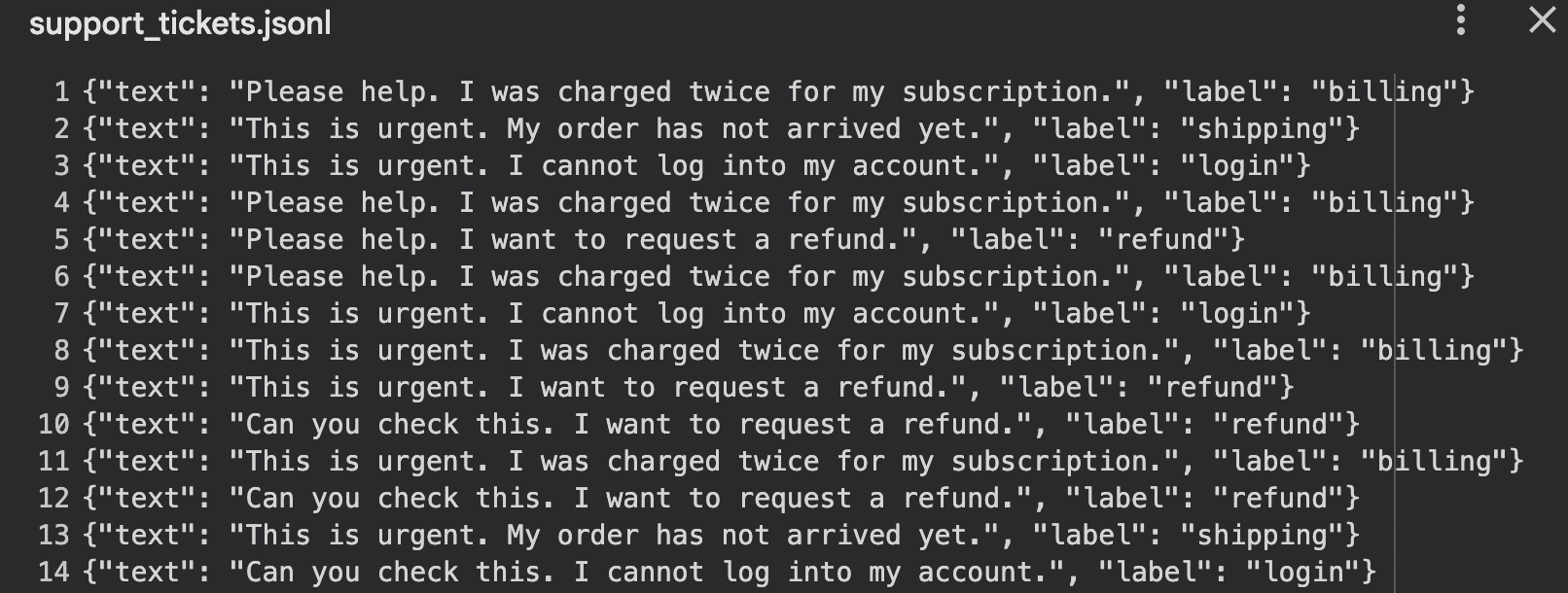

Output:

This strategy works properly for:

- Textual content classification demos

- Intent detection

- Chatbot testing

- Immediate analysis

# Last Ideas

Artificial information scripts are highly effective instruments, however they are often applied incorrectly. You should definitely keep away from these frequent errors:

- Making all values uniformly random

- Forgetting dependencies between fields

- Producing values that violate enterprise logic

- Assuming artificial information is inherently secure by default

- Creating information that’s too “clear” to be helpful for testing real-world edge circumstances

- Utilizing the identical sample so regularly that the dataset turns into predictable and unrealistic

Privateness stays probably the most important consideration. Whereas artificial information reduces publicity to actual data, it’s not risk-free. If a generator is simply too carefully tied to unique delicate information, leakage can nonetheless happen. Because of this privacy-preserving strategies, comparable to differentially non-public artificial information, are important.

Kanwal Mehreen is a machine studying engineer and a technical author with a profound ardour for information science and the intersection of AI with medication. She co-authored the book “Maximizing Productiveness with ChatGPT”. As a Google Era Scholar 2022 for APAC, she champions variety and tutorial excellence. She’s additionally acknowledged as a Teradata Variety in Tech Scholar, Mitacs Globalink Analysis Scholar, and Harvard WeCode Scholar. Kanwal is an ardent advocate for change, having based FEMCodes to empower girls in STEM fields.

Picture by Editor

# Introduction

Artificial information, because the identify suggests, is created artificially somewhat than being collected from real-world sources. It appears like actual information however avoids privateness points and excessive information assortment prices. This lets you simply check software program and fashions whereas operating experiments to simulate efficiency after launch.

Whereas libraries like Faker, SDV, and SynthCity exist — and even giant language fashions (LLMs) are extensively used for producing artificial information — my focus on this article is to keep away from counting on these exterior libraries or AI instruments. As a substitute, you’ll discover ways to obtain the identical outcomes by writing your individual Python scripts. This gives a greater understanding of the right way to form a dataset and the way biases or errors are launched. We’ll begin with easy toy scripts to grasp the accessible choices. When you grasp these fundamentals, you’ll be able to comfortably transition to specialised libraries.

# 1. Producing Easy Random Information

The best place to start out is with a desk. For instance, in the event you want a faux buyer dataset for an inner demo, you’ll be able to run a script to generate comma-separated values (CSV) information:

import csv

import random

from datetime import datetime, timedelta

random.seed(42)

international locations = ["Canada", "UK", "UAE", "Germany", "USA"]

plans = ["Free", "Basic", "Pro", "Enterprise"]

def random_signup_date():

begin = datetime(2024, 1, 1)

finish = datetime(2026, 1, 1)

delta_days = (finish - begin).days

return (begin + timedelta(days=random.randint(0, delta_days))).date().isoformat()

rows = []

for i in vary(1, 1001):

age = random.randint(18, 70)

nation = random.selection(international locations)

plan = random.selection(plans)

monthly_spend = spherical(random.uniform(0, 500), 2)

rows.append({

"customer_id": f"CUST{i:05d}",

"age": age,

"nation": nation,

"plan": plan,

"monthly_spend": monthly_spend,

"signup_date": random_signup_date()

})

with open("prospects.csv", "w", newline="", encoding="utf-8") as f:

author = csv.DictWriter(f, fieldnames=rows[0].keys())

author.writeheader()

author.writerows(rows)

print("Saved prospects.csv")

Output:

This script is simple: you outline fields, select ranges, and write rows. The random module helps integer era, floating-point values, random selection, and sampling. The csv module is designed to learn and write row-based tabular information. This type of dataset is appropriate for:

- Frontend demos

- Dashboard testing

- API growth

- Studying Structured Question Language (SQL)

- Unit testing enter pipelines

Nonetheless, there’s a main weak point to this strategy: every part is totally random. This usually leads to information that appears flat or unnatural. Enterprise prospects would possibly spend solely 2 {dollars}, whereas “Free” customers would possibly spend 400. Older customers behave precisely like youthful ones as a result of there isn’t any underlying construction.

In real-world situations, information hardly ever behaves this manner. As a substitute of producing values independently, we will introduce relationships and guidelines. This makes the dataset really feel extra sensible whereas remaining absolutely artificial. As an illustration:

- Enterprise prospects ought to virtually by no means have zero spend

- Spending ranges ought to depend upon the chosen plan

- Older customers would possibly spend barely extra on common

- Sure plans must be extra frequent than others

Let’s add these controls to the script:

import csv

import random

random.seed(42)

plans = ["Free", "Basic", "Pro", "Enterprise"]

def choose_plan():

roll = random.random()

if roll < 0.45:

return "Free"

if roll < 0.75:

return "Fundamental"

if roll < 0.93:

return "Professional"

return "Enterprise"

def generate_spend(age, plan):

if plan == "Free":

base = random.uniform(0, 10)

elif plan == "Fundamental":

base = random.uniform(10, 60)

elif plan == "Professional":

base = random.uniform(50, 180)

else:

base = random.uniform(150, 500)

if age >= 40:

base *= 1.15

return spherical(base, 2)

rows = []

for i in vary(1, 1001):

age = random.randint(18, 70)

plan = choose_plan()

spend = generate_spend(age, plan)

rows.append({

"customer_id": f"CUST{i:05d}",

"age": age,

"plan": plan,

"monthly_spend": spend

})

with open("controlled_customers.csv", "w", newline="", encoding="utf-8") as f:

author = csv.DictWriter(f, fieldnames=rows[0].keys())

author.writeheader()

author.writerows(rows)

print("Saved controlled_customers.csv")

Output:

Now the dataset preserves significant patterns. Fairly than producing random noise, you might be simulating behaviors. Efficient controls could embrace:

- Weighted class choice

- Reasonable minimal and most ranges

- Conditional logic between columns

- Deliberately added uncommon edge circumstances

- Lacking values inserted at low charges

- Correlated options as an alternative of unbiased ones

# 2. Simulating Processes for Artificial Information

Simulation-based era is among the finest methods to create sensible artificial datasets. As a substitute of immediately filling columns, you simulate a course of. For instance, take into account a small warehouse the place orders arrive, inventory decreases, and low inventory ranges set off backorders.

import csv

import random

from datetime import datetime, timedelta

random.seed(42)

stock = {

"A": 120,

"B": 80,

"C": 50

}

rows = []

current_time = datetime(2026, 1, 1)

for day in vary(30):

for product in stock:

daily_orders = random.randint(0, 12)

for _ in vary(daily_orders):

qty = random.randint(1, 5)

earlier than = stock

if stock >= qty:

stock -= qty

standing = "fulfilled"

else:

standing = "backorder"

rows.append({

"time": current_time.isoformat(),

"product": product,

"qty": qty,

"stock_before": earlier than,

"stock_after": stock,

"standing": standing

})

if stock < 20:

restock = random.randint(30, 80)

stock += restock

rows.append({

"time": current_time.isoformat(),

"product": product,

"qty": restock,

"stock_before": stock - restock,

"stock_after": stock,

"standing": "restock"

})

current_time += timedelta(days=1)

with open("warehouse_sim.csv", "w", newline="", encoding="utf-8") as f:

author = csv.DictWriter(f, fieldnames=rows[0].keys())

author.writeheader()

author.writerows(rows)

print("Saved warehouse_sim.csv")

Output:

This technique is superb as a result of the information is a byproduct of system habits, which generally yields extra sensible relationships than direct random row era. Different simulation concepts embrace:

- Name middle queues

- Experience requests and driver matching

- Mortgage purposes and approvals

- Subscriptions and churn

- Affected person appointment flows

- Web site site visitors and conversion

# 3. Producing Time Collection Artificial Information

Artificial information is not only restricted to static tables. Many programs produce sequences over time, comparable to app site visitors, sensor readings, orders per hour, or server response occasions. Right here is a straightforward time sequence generator for hourly web site visits with weekday patterns.

import csv

import random

from datetime import datetime, timedelta

random.seed(42)

begin = datetime(2026, 1, 1, 0, 0, 0)

hours = 24 * 30

rows = []

for i in vary(hours):

ts = begin + timedelta(hours=i)

weekday = ts.weekday()

base = 120

if weekday >= 5:

base = 80

hour = ts.hour

if 8 <= hour <= 11:

base += 60

elif 18 <= hour <= 21:

base += 40

elif 0 <= hour <= 5:

base -= 30

visits = max(0, int(random.gauss(base, 15)))

rows.append({

"timestamp": ts.isoformat(),

"visits": visits

})

with open("traffic_timeseries.csv", "w", newline="", encoding="utf-8") as f:

author = csv.DictWriter(f, fieldnames=["timestamp", "visits"])

author.writeheader()

author.writerows(rows)

print("Saved traffic_timeseries.csv")

Output:

This strategy works properly as a result of it incorporates traits, noise, and cyclic habits whereas remaining straightforward to clarify and debug.

# 4. Creating Occasion Logs

Occasion logs are one other helpful script fashion, preferrred for product analytics and workflow testing. As a substitute of 1 row per buyer, you create one row per motion.

import csv

import random

from datetime import datetime, timedelta

random.seed(42)

occasions = ["signup", "login", "view_page", "add_to_cart", "purchase", "logout"]

rows = []

begin = datetime(2026, 1, 1)

for user_id in vary(1, 201):

event_count = random.randint(5, 30)

current_time = begin + timedelta(days=random.randint(0, 10))

for _ in vary(event_count):

occasion = random.selection(occasions)

if occasion == "buy" and random.random() < 0.6:

worth = spherical(random.uniform(10, 300), 2)

else:

worth = 0.0

rows.append({

"user_id": f"USER{user_id:04d}",

"event_time": current_time.isoformat(),

"event_name": occasion,

"event_value": worth

})

current_time += timedelta(minutes=random.randint(1, 180))

with open("event_log.csv", "w", newline="", encoding="utf-8") as f:

author = csv.DictWriter(f, fieldnames=rows[0].keys())

author.writeheader()

author.writerows(rows)

print("Saved event_log.csv")

Output:

This format is helpful for:

- Funnel evaluation

- Analytics pipeline testing

- Enterprise intelligence (BI) dashboards

- Session reconstruction

- Anomaly detection experiments

A helpful approach right here is to make occasions depending on earlier actions. For instance, a purchase order ought to usually observe a login or a web page view, making the artificial log extra plausible.

# 5. Producing Artificial Textual content Information with Templates

Artificial information can also be priceless for pure language processing (NLP). You don’t all the time want an LLM to start out; you’ll be able to construct efficient textual content datasets utilizing templates and managed variation. For instance, you’ll be able to create help ticket coaching information:

import json

import random

random.seed(42)

points = [

("billing", "I was charged twice for my subscription"),

("login", "I cannot log into my account"),

("shipping", "My order has not arrived yet"),

("refund", "I want to request a refund"),

]

tones = ["Please help", "This is urgent", "Can you check this", "I need support"]

data = []

for _ in vary(100):

label, message = random.selection(points)

tone = random.selection(tones)

textual content = f"{tone}. {message}."

data.append({

"textual content": textual content,

"label": label

})

with open("support_tickets.jsonl", "w", encoding="utf-8") as f:

for merchandise in data:

f.write(json.dumps(merchandise) + "n")

print("Saved support_tickets.jsonl")

Output:

This strategy works properly for:

- Textual content classification demos

- Intent detection

- Chatbot testing

- Immediate analysis

# Last Ideas

Artificial information scripts are highly effective instruments, however they are often applied incorrectly. You should definitely keep away from these frequent errors:

- Making all values uniformly random

- Forgetting dependencies between fields

- Producing values that violate enterprise logic

- Assuming artificial information is inherently secure by default

- Creating information that’s too “clear” to be helpful for testing real-world edge circumstances

- Utilizing the identical sample so regularly that the dataset turns into predictable and unrealistic

Privateness stays probably the most important consideration. Whereas artificial information reduces publicity to actual data, it’s not risk-free. If a generator is simply too carefully tied to unique delicate information, leakage can nonetheless happen. Because of this privacy-preserving strategies, comparable to differentially non-public artificial information, are important.

Kanwal Mehreen is a machine studying engineer and a technical author with a profound ardour for information science and the intersection of AI with medication. She co-authored the book “Maximizing Productiveness with ChatGPT”. As a Google Era Scholar 2022 for APAC, she champions variety and tutorial excellence. She’s additionally acknowledged as a Teradata Variety in Tech Scholar, Mitacs Globalink Analysis Scholar, and Harvard WeCode Scholar. Kanwal is an ardent advocate for change, having based FEMCodes to empower girls in STEM fields.