10 Common Misconceptions About Large Language Models

Image by Editor | ChatGPT

Introduction

Large language models (LLMs) have rapidly become part of our daily workflows. From coding agents that write functional code to simple chat sessions that help us brainstorm ideas, LLMs have become essential productivity tools across industries.

Despite this widespread adoption, fundamental misconceptions persist among both current users and developers planning to build LLM-powered applications. These misunderstandings often stem from the gap between marketing promises and technical reality, leading to poor architectural decisions, misallocated resources, and project timelines that don’t account for the models’ actual capabilities and constraints.

Whether you’re integrating an LLM API into your existing product or building an entirely new AI-powered application, understanding what these models can and cannot do is essential for success. Clear expectations about LLM capabilities directly influence how you design systems, structure your development process, and communicate realistic outcomes to stakeholders.

This article covers the ten most common myths about LLMs that every developer should understand before their next AI integration.

1. LLMs Actually Understand Language Like Humans Do

The reality: LLMs operate as advanced statistical engines that match input queries to learned textual patterns. While their outputs appear intelligent, they lack the conceptual understanding that characterizes human comprehension.

When an LLM processes “The cat sat on the mat,” it’s not visualizing a feline on a piece of fabric. Instead, it’s leveraging statistical relationships learned from billions of text examples. The model recognizes that certain token sequences commonly appear together and generates responses based on these learned patterns.

This distinction matters when building applications. An LLM might perfectly handle “What’s the capital of France?” but struggle with prompts that require connecting disparate pieces of information in ways that were uncommon in its training data.

So always design your prompts and system architecture accordingly. Use explicit context and clear instructions rather than assuming the model “gets it.”

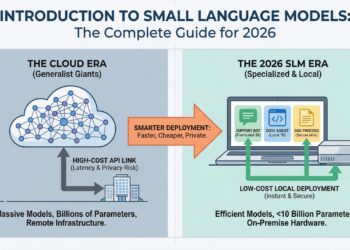

2. More Parameters Always Mean Better Performance

The reality: Parameter count is just one factor in model capability, and often not the most important one.

The industry narrative that equates large parameter counts with highly capable language models is not always correct. Training data quality, architectural improvements, and fine-tuning approaches often matter more than raw size.

Small language models are proving this point dramatically. Models like Phi-3 with 3.8B parameters can actually perform better than much larger models on specific reasoning tasks. Code-focused models like CodeT5+ achieve impressive results with relatively modest parameter counts by training on high-quality, domain-specific data.

The efficiency gains are substantial too. Smaller models require less memory, generate tokens faster, and cost significantly less to run. For many production use cases, a well-trained 7B parameter model provides better value than a 70B parameter model that requires expensive GPU clusters.

Choose models based on your specific use case, not marketing claims about parameter counts. Benchmark smaller, specialized models against larger general-purpose ones. Also consider the total cost of ownership, including inference and infrastructure costs.
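To make the memory gap between a 7B and a 70B model concrete, here is a rough back-of-the-envelope sketch. It assumes 16-bit weights (2 bytes per parameter) and counts only the weights themselves; activations, the KV cache, and framework overhead are left out, so real requirements will be higher. The function name `weight_memory_gb` is made up for this illustration.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory needed to hold the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Assumption: 16-bit precision, so 2 bytes per parameter.
small = weight_memory_gb(7e9)    # 7B parameters
large = weight_memory_gb(70e9)   # 70B parameters

print(f"7B model:  ~{small:.0f} GB of weights")
print(f"70B model: ~{large:.0f} GB of weights")
```

Under these assumptions the smaller model's weights fit on a single high-memory GPU, while the larger model's weights have to be split across several devices or quantized.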

3. LLMs Are Just Autocomplete on Steroids

The reality: While autocompletion is part of how LLMs work, they exhibit emergent behaviors that go far beyond simple text prediction.

Yes, language models predict the next token based on previous tokens. But this process, when scaled up with transformer architectures and massive datasets, produces capabilities that weren’t explicitly programmed: reasoning through multi-step problems, translating languages, writing code, and even demonstrating some forms of mathematical reasoning.
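For contrast, this is roughly what "simple text prediction" looks like at its most basic: a toy bigram model that always picks the most frequent follower of the previous word. It is not how an LLM works internally; it is only a sketch of the next-token loop, with a tiny made-up corpus, to show how little a pure frequency table can do compared with the capabilities described above.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count which word follows which in a whitespace-tokenized corpus."""
    counts = defaultdict(Counter)
    tokens = text.split()
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, start, max_tokens=5):
    """Greedily append the most frequent follower of the last word."""
    out = [start]
    for _ in range(max_tokens):
        followers = counts.get(out[-1])
        if not followers:
            break  # the last word never appeared with a follower
        out.append(followers.most_common(1)[0][0])
    return " ".join(out)


# Illustrative corpus, not real training data.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigram(corpus)
print(generate(model, "the", max_tokens=3))
```

The loop structure (look at the context, pick a next token, append, repeat) is shared with LLM decoding; the difference lies in what produces the next-token scores.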

These emergent abilities arise from the complex interactions between attention mechanisms, learned representations, and the sheer scale of training. The model learns to represent concepts, relationships, and even abstract reasoning patterns, not just word sequences.

Keep working on well-crafted, specific prompts. Experiment with chain-of-thought prompting, few-shot examples, and structured outputs to get the most out of LLMs.

4. LLMs Remember Everything They’ve Learned

The reality: LLMs don’t have perfect recall and can exhibit surprising knowledge gaps.

During training, models see each piece of text only a few times, and there’s no guarantee they’ll retain specific facts. Knowledge is distributed across millions of parameters in ways that don’t map neatly to human memory. An LLM might know obscure historical facts while missing basic information that was underrepresented in training data.

This creates an uncanny valley effect where models seem omniscient in some areas while showing glaring blind spots in others. The knowledge is also “compressed”: details get lost, and the model might confidently generate plausible-sounding but incorrect information.

Always verify critical information from LLM outputs. Implement retrieval-augmented generation (RAG) for factual accuracy, especially in domains where precision matters.
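The core idea of RAG can be sketched in a few lines: find the most relevant passages first, then put them in the prompt so the model answers from supplied text rather than from memory. The sketch below uses naive word overlap for retrieval, which is a deliberate simplification; production systems typically use embeddings and a vector index. The documents, function names, and prompt wording are all made up for illustration, and the final call to an LLM is left out.

```python
def tokenize(text):
    """Lowercase and split on whitespace after stripping basic punctuation."""
    for ch in "?.,!":
        text = text.replace(ch, " ")
    return set(text.lower().split())


def retrieve(query, documents, k=1):
    """Rank documents by how many words they share with the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Assemble a prompt that grounds the answer in the retrieved passages."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the context below. "
        "If the answer is not there, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


# Hypothetical knowledge base for the example.
docs = [
    "Refunds are processed within 14 days of the return request.",
    "Support is available by email on weekdays.",
]
prompt = build_prompt("How long do refunds take?", docs)
print(prompt)
```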

5. Fine-Tuning Always Makes Models Better

The reality: Fine-tuning can improve performance on specific tasks but often comes with significant tradeoffs.

Fine-tuning typically improves performance on tasks similar to the fine-tuning data while potentially degrading performance on other tasks, a phenomenon known as catastrophic forgetting. If you fine-tune a model on legal documents, it might become worse at creative writing or technical explanations.

Moreover, fine-tuning requires careful data curation, computational resources, and expertise to avoid overfitting. Many developers would benefit more from better prompt engineering, retrieval systems, or using pre-trained models that already align with their needs.

Consider prompt engineering, in-context learning, and RAG before jumping to fine-tuning. When you do fine-tune, maintain evaluation benchmarks across different task types to catch performance degradation.
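One lightweight way to catch this kind of degradation is to score the base and fine-tuned models on the same set of task benchmarks and flag any task that drops by more than a tolerance. The sketch below assumes you already have per-task scores between 0 and 1; the task names, the scores, and the 0.02 tolerance are invented purely to show the mechanics and are not measurements.

```python
def find_regressions(baseline, finetuned, tolerance=0.02):
    """Return {task: score change} for tasks that dropped by more than `tolerance`."""
    return {
        task: round(finetuned[task] - baseline[task], 4)
        for task in baseline
        if task in finetuned and baseline[task] - finetuned[task] > tolerance
    }


# Made-up scores for illustration only.
baseline = {"legal_qa": 0.61, "creative_writing": 0.74, "code_explanation": 0.70}
finetuned = {"legal_qa": 0.83, "creative_writing": 0.62, "code_explanation": 0.69}

regressions = find_regressions(baseline, finetuned)
print(regressions)
```

Running a check like this after every fine-tuning run turns "it might have gotten worse elsewhere" into something you can see and act on.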

6. LLMs Are Deterministic: Same Input, Same Output

The reality: LLMs are inherently probabilistic and introduce controlled randomness during generation.

Even with temperature set to 0, many LLMs still exhibit some non-determinism due to floating-point arithmetic, parallelization effects, and implementation details. At higher temperatures, the model samples from probability distributions, making outputs genuinely unpredictable.
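The effect of temperature can be illustrated with a small sketch of temperature-scaled softmax sampling over a handful of made-up logits. This is a simplified picture of the sampling step, not any particular provider's implementation, and it does not reproduce the hardware-level sources of non-determinism mentioned above: in this toy version, temperature 0 is handled as a plain argmax and is fully deterministic.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn logits into probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits, temperature, rng):
    """Pick a token index: greedy at temperature 0, sampled otherwise."""
    if temperature == 0:
        return max(range(len(logits)), key=lambda i: logits[i])
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.5]  # illustrative scores for three candidate tokens
rng = random.Random(0)

for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])

print("greedy pick:", sample_token(logits, 0, rng))
```

As the temperature rises, probability mass spreads from the top-scoring token to the alternatives, which is why repeated calls with the same prompt diverge more.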

This probabilistic nature is actually a feature, not a bug. It enables creative applications, keeps responses from locking onto a single phrasing, and makes interactions feel more natural. However, it can be problematic when you need consistent outputs for testing or production systems.

Design systems that can handle output variability. Use structured output formats, implement output validation, and consider using lower temperatures or constrained generation when consistency matters.
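A minimal sketch of output validation, assuming you have asked the model to reply with a JSON object: parse the raw text, check that the expected fields are present with the expected types, and return `None` on any failure so the caller can retry or fall back. The field names and the schema here are hypothetical.

```python
import json

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS = {"sentiment": str, "confidence": float}


def parse_model_output(raw):
    """Return the parsed dict if it matches the schema, otherwise None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None  # the model replied with something that is not JSON
    if not isinstance(data, dict):
        return None
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            return None  # missing field or wrong type
    return data


good = parse_model_output('{"sentiment": "positive", "confidence": 0.9}')
chatty = parse_model_output("Sure! Here is the JSON you asked for.")
partial = parse_model_output('{"sentiment": "positive"}')
print(good, chatty, partial)
```

Treating every model response as untrusted input in this way keeps occasional malformed outputs from propagating into the rest of the system.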

7. Bigger Context Windows Are Always Better

The reality: Large context windows come with computational costs, performance degradation, and practical limitations.

A 128k token context window sounds impressive, but adding more context doesn’t always lead to better outputs, counterintuitive as that may seem. When processing long contexts, language models systematically underperform at accessing information located in the middle sections, a problem researchers call “lost in the middle.” This limitation reveals fundamental constraints in how these systems handle long textual inputs. Longer contexts also require more compute, increase latency, and cost more to process.

For many applications, smart chunking strategies, summarization, or retrieval systems provide better results than stuffing everything into a massive context window. The key is matching context length to your actual use case, not maximizing it.

Profile your applications to understand where information gets lost in long contexts. Consider hybrid approaches that combine retrieval, summarization, and focused context windows rather than relying solely on large contexts.
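As one example of a chunking strategy, the sketch below splits a document into fixed-size word windows with a small overlap, so that a sentence cut at a boundary still appears whole in a neighboring chunk. Splitting on words rather than model tokens is a simplification, and the default sizes are arbitrary; a real pipeline would usually count tokens with the model's own tokenizer and respect paragraph or section boundaries.

```python
def chunk_words(text, chunk_size=200, overlap=20):
    """Split text into overlapping chunks of at most `chunk_size` words."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reached the end of the text
    return chunks


# Tiny demonstration with ten "words" so the overlap is easy to see.
sample = " ".join(str(i) for i in range(10))
print(chunk_words(sample, chunk_size=4, overlap=1))
```

Each chunk can then be summarized or indexed for retrieval, so only the relevant pieces end up in the context window.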

8. LLMs Can Replace Traditional Machine Learning for All Language Tasks

The reality: LLMs are great at many tasks but aren’t the optimal solution for every natural language task.

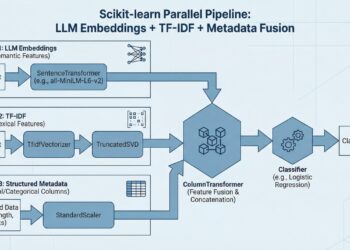

For high-throughput, low-latency applications like spam filtering or sentiment analysis, smaller specialized models often perform better and cost less. Traditional approaches like TF-IDF with logistic regression can still outperform LLMs on certain classification tasks, especially with limited training data.

LLMs are useful when you need flexibility, few-shot learning, or complex reasoning. But if you have a well-defined problem with plenty of labeled data and strict latency requirements, traditional machine learning methods may be the better choice.

Evaluate LLMs against simpler baselines on your specific use case. Look beyond accuracy when comparing performance; factor in costs, response times, and how much work the system takes to maintain.
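A small evaluation harness makes this comparison routine. The sketch below measures accuracy and mean latency for any callable that maps text to a label. A trivial keyword rule stands in for the baseline here (it is not TF-IDF with logistic regression, just the simplest thing that runs without dependencies), and an LLM-backed classifier with the same signature could be passed to the same function. The examples and spam words are made up.

```python
import time


def evaluate(classify, examples):
    """Return accuracy and mean latency (seconds) for a text -> label callable."""
    correct = 0
    start = time.perf_counter()
    for text, label in examples:
        if classify(text) == label:
            correct += 1
    elapsed = time.perf_counter() - start
    return {
        "accuracy": correct / len(examples),
        "mean_latency_s": elapsed / len(examples),
    }


def keyword_baseline(text):
    """Stand-in baseline: flag a message as spam if it contains a trigger word."""
    spam_words = {"free", "winner", "prize"}
    return "spam" if spam_words & set(text.lower().split()) else "ham"


# Illustrative labeled examples.
examples = [
    ("Claim your free prize now", "spam"),
    ("Meeting moved to 3pm", "ham"),
    ("You are a winner", "spam"),
    ("Lunch tomorrow?", "ham"),
]

report = evaluate(keyword_baseline, examples)
print(report)
```

Adding per-request cost to the report is a natural extension once you know your provider's pricing.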

9. Prompt Engineering Is Just Trial and Error

The reality: Effective prompt engineering follows systematic principles and measurable techniques.

Good prompt engineering involves understanding how models process information, using effective prompting techniques like chain-of-thought and tree-of-thought reasoning, providing clear examples, and structuring inputs to match the model’s training patterns. It’s a skill that combines domain knowledge, understanding of model behavior, and systematic experimentation.

Using techniques like few-shot prompting, defining clear roles, and setting specific output formats will give you much better results. The best prompt engineers develop intuition about how to communicate effectively with language models. It’s closer to a craft than to random guessing.

Invest time in learning prompt engineering systematically. Be sure to document what works and build reusable prompt templates for common tasks.
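A reusable template can be as simple as a string with named slots for the role, the few-shot examples, and the new input. The sketch below uses Python's standard `string.Template`; the task, role text, and example reviews are invented for illustration, and the rendered prompt would still need to be sent to whichever model API you use.

```python
from string import Template

# Hypothetical template combining a role, few-shot examples, and a fixed output slot.
CLASSIFY_TEMPLATE = Template(
    "You are a $role.\n"
    "Classify the sentiment of the review as positive or negative.\n"
    "$examples\n"
    "Review: $review\n"
    "Sentiment:"
)


def render_prompt(review, shots, role="careful product analyst"):
    """Fill the template with few-shot examples and the review to classify."""
    examples = "\n".join(
        f"Review: {text}\nSentiment: {label}" for text, label in shots
    )
    return CLASSIFY_TEMPLATE.substitute(role=role, examples=examples, review=review)


shots = [
    ("Arrived quickly and works great.", "positive"),
    ("Broke after two days.", "negative"),
]
prompt = render_prompt("The battery barely lasts an hour.", shots)
print(prompt)
```

Keeping templates like this in version control, alongside notes on which variants performed best, is one way to document what works.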

10. LLMs Will Soon Replace All Software Developers

The reality: LLMs are powerful coding assistants, but software development involves much more than writing code.

While LLMs can generate impressive code snippets and even complete programs, they often struggle with system design, understanding complex business requirements, debugging production issues, and maintaining large codebases over time. They also can’t navigate organizational dynamics, make architectural decisions, or understand user needs.

Current LLMs work best as productivity multipliers, helping with boilerplate code, documentation, test generation, and code explanation. They’re excellent junior pair programmers but can’t replace the judgment, creativity, and system-level thinking that experienced developers bring.

Try using LLMs as tools for accelerating development workflows. Focus on learning how to collaborate effectively with AI assistants, and try agentic AI workflows to speed up development rather than worrying about replacement.

Conclusion

These misconceptions have practical consequences for development teams. Understanding both what LLMs can and cannot do leads to better architecture decisions, more accurate resource planning, and higher project success rates.

Effective LLM implementation requires treating these models as sophisticated tools with specific use cases rather than universal solutions. This means designing systems that account for their probabilistic outputs, planning around their known limitations, and applying them where their strengths align with your needs.

As LLM technology continues to advance, basing your decisions on the models’ actual capabilities rather than marketing claims will result in more reliable and maintainable applications. Focus on matching the right tool to the specific problem rather than retrofitting every challenge to fit an LLM approach.
